How to Reason Better and Think More Clearly

Putting It All Together: A Reasoner's Toolkit and Path Forward

You've made it to the final section of this course, and here's the thing nobody says out loud when you finish: the real work is just beginning. You can now name the fallacies, you understand the difference between validity and soundness, you can spot cognitive biases operating in real time, and you recognize when someone's trying to manipulate you with rhetoric. That's genuine progress. But knowing something and being able to do it under pressure are two different things, and doing it automatically, the way you tie your shoes without thinking, is something else entirely.

What follows is two things at once. First, it's a unified map of everything in this course — how arguments, deduction, induction, abduction, fallacies, biases, rhetoric, and evidence all lock together into a single coherent system you can actually use when you're thinking fast. Second, it's honest talk about what comes next: what deliberate practice looks like, where people tend to stumble after they've learned the theory, and how to keep developing your reasoning over years, not just weeks.

The architecture comes first. Let's build it.

The Integrated Picture: How It All Fits

Every piece of this course has been about what happens inside a single process: you encounter a claim, you reason about whether to believe it, and in the background — underneath all of your conscious deliberation — your cognitive wiring, your emotional stakes, the people around you, and the broader incentive structures are all trying to shortcut or distort that process.

The whole course has mapped what happens at each node.

Arguments (Section 5) are the basic unit. Everything else is about how to build them well, recognize them being built badly, or understand why our minds resist doing either. An argument is just a set of premises offered in support of a conclusion. Simple. But here's where it gets interesting: what actually makes an argument good?

Deductive reasoning (Section 6) promises something magnificent — certainty, when it works. A valid argument with true premises guarantees a true conclusion. The catch? Most of the real questions you actually care about don't submit to purely deductive treatment. Logic tells you what follows from what; it doesn't tell you what's true in the world. You still have to bring your premises from somewhere, and that somewhere is almost always the messy, uncertain realm of experience.
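To make "what follows from what" concrete, here is a minimal sketch that checks validity by brute force: it tries every combination of truth values and looks for a case where all premises are true and the conclusion is false. The function names (`is_valid`, `implies`) and the two example arguments are illustrative assumptions, not something taken from the course. Note that the check says nothing about whether the premises are actually true in the world, which is exactly the limit described above.

```python
from itertools import product


def implies(a, b):
    """Material conditional: 'if a then b' is false only when a is true and b is false."""
    return (not a) or b


def is_valid(premises, conclusion, variables):
    """Return True if no assignment makes every premise true and the conclusion false."""
    for values in product([True, False], repeat=len(variables)):
        env = dict(zip(variables, values))
        if all(p(env) for p in premises) and not conclusion(env):
            return False  # found a counterexample
    return True


# Modus ponens: if P then Q; P; therefore Q.
modus_ponens = is_valid(
    premises=[lambda e: implies(e["P"], e["Q"]), lambda e: e["P"]],
    conclusion=lambda e: e["Q"],
    variables=["P", "Q"],
)

# Affirming the consequent: if P then Q; Q; therefore P.
affirming_consequent = is_valid(
    premises=[lambda e: implies(e["P"], e["Q"]), lambda e: e["Q"]],
    conclusion=lambda e: e["P"],
    variables=["P", "Q"],
)

print(modus_ponens)          # True
print(affirming_consequent)  # False
```

The second argument fails because P can be false while Q is true, so the premises hold and the conclusion does not.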

Inductive reasoning (Section 7) is where most actual knowledge-building happens. You observe patterns, you generalize from them, you test those generalizations, you revise based on what you find. The distinction between deductive and inductive reasoning goes back to Aristotle, and it has lasted for more than two thousand years because it captures something real: some arguments guarantee their conclusions, others merely support them with varying degrees of probability, and confusing the two (expecting certainty from evidence that can only give you likelihood) is one of the most common reasoning mistakes there is.

Abductive reasoning (Section 8) handles the third mode: you have observations and you need to figure out the best explanation for them. It's how diagnosis works, how investigation works, how scientific theories get built. The practical question is always the same: have you generated enough rival hypotheses? Have you tested them fairly against each other?

Fallacies (Section 9) are the named failure modes — ad hominem, straw man, false dilemma, appeal to authority, slippery slope, begging the question. These patterns keep showing up because they're exploiting genuine features of how cognition actually works. We do pay attention to who's speaking, we do reason from examples, we do default to binary thinking under pressure. Fallacies are persuasive because they map onto real mental tendencies.

Cognitive biases (Section 10) operate one level deeper. These aren't the same as fallacies. Fallacies are errors in how an argument is structured; biases are errors in the reasoning process itself — often unconscious, often systematic, baked into the hardware. Confirmation bias, availability heuristic, anchoring, motivated reasoning, the Dunning-Kruger effect — these are the reason critical thinking requires both abilities and dispositions. You can have the ability to reason well and still not apply it, because your biases are pulling you the other direction.

Rhetoric (Section 11) is the social layer — how arguments actually move through human communities, how persuasion works, where the line is between legitimate influence and manipulation. Understanding rhetoric doesn't immunize you against it. But it gives you something crucial: a moment of recognition. Oh, that's what's happening right now. That moment of recognition is the gap between manipulation landing cleanly and your reasoning actually getting a chance to fight back.

Evidence evaluation (Section 12) is where abstract logic meets the real, complicated world. How do you actually assess a study? When is a sample size adequate? What's the real difference between correlation and causation, and how do you spot the mechanism? Why does scientific consensus matter, and why can you also question it? Science is the most powerful tool for reasoning about the world that humans have ever built — and understanding why it works is what keeps you from being either paralyzed when it's contested or naive when you accept it uncritically.

Media and misinformation (Section 13) took all of this and applied it to the specific information landscape we're actually living in — one drowning in deliberately misleading content, where the business model of attention incentivizes outrage over accuracy, and where the reasoning skills that used to be optional are now basically mandatory for navigating everyday life.

That's the whole picture. Now let's talk about how you actually use it.

A Compact Reference: The Essentials at Your Fingertips

You can't consult a manual in every conversation. But you can internalize a small set of distinctions and quick tests that cover most situations. Here's what's worth carrying with you:

For any argument:

- What is the conclusion? (State it precisely. Vague conclusions are like ghosts — hard to grab hold of.)

- What are the premises? (Separate the stated premises from the unstated assumptions hiding in the background.)

- Does the conclusion actually follow from the premises? (Validity)

- Are the premises themselves true? (Soundness: a valid argument whose premises are all true.)

- What kind of reasoning is being used? (Deductive, inductive, abductive — each has different standards of evidence.)

For evaluating evidence:

- What's the source, and what do they gain if you believe them?

- Is this a single anecdote or a pattern observed across a representative sample?

- Is the relationship causal or just correlational? What's the actual mechanism?

- What would disprove this claim, and has anyone tested for it?

- What do the actual domain experts say — and where do they disagree?

For spotting fallacies:

- Is the argument attacking the person instead of the claim? (Ad hominem)

- Is the opposing position being fairly represented, or is it a caricature? (Straw man)

- Is an authority being quoted outside the domain where they actually have expertise? (Appeal to authority)

- Is the argument pretending only two options exist when others clearly do? (False dilemma)

- Is the argument assuming the conclusion it's trying to prove? (Begging the question)

For examining your own reasoning:

- Am I emotionally invested in reaching a particular conclusion? (Check for motivated reasoning)

- Have I actually sought out serious arguments against my view, or just dismissed them? (Confirmation bias)

- Am I more confident than the evidence really justifies? (Calibration check)

- What would it take to change my mind? (If the answer is "nothing," that's a problem.)

For intellectual standards, the Paul-Elder critical thinking framework offers a checklist that serious practitioners come back to regularly: Is this thinking clear, accurate, precise, relevant, deep, broad, logical, significant, and fair? These aren't just abstract ideals — they're working prompts you can apply. "Could you give me an example?" addresses clarity. "How would we verify that?" addresses accuracy. Running through these quickly will catch a surprising number of arguments that sound plausible on the surface.

Tip: Write out that five-question argument checklist and put it somewhere you'll actually see it. Not because you'll consult it constantly — eventually you won't need to — but because the physical reminder keeps the habit alive during that fragile early period when you're still building it.

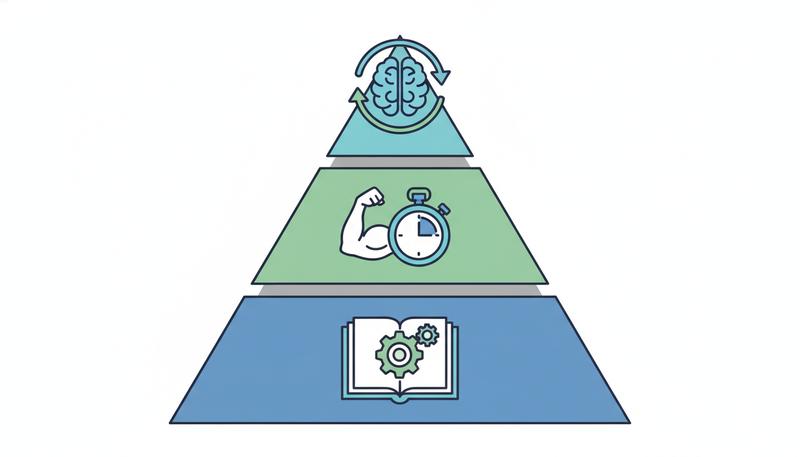

The Three Levels of Mastery

There's a real difference between knowing something intellectually, being able to apply it when things are moving fast, and having internalized it so thoroughly that it happens automatically. Most training — including this course — gets you to the first level. Getting to the other two takes time, repetition, and honest effort.

Level 1: Knowing the Rules

At this stage, you can identify a fallacy when you're reading slowly with time to think. You can analyze an argument's structure when it's laid out neatly in front of you. You understand validity versus soundness. You know what a double-blind trial is and why it matters. You'd pass a test on this material.

That's genuinely valuable. But there's a hidden trap: the illusion of competence. People at Level 1 often feel more confident in their reasoning than they did before — because now they have vocabulary. The names feel like understanding. But confidence and actual ability aren't the same thing, especially not yet.

Level 2: Applying the Rules Under Pressure

Real arguments don't arrive neatly labeled. They show up embedded in rhetoric, delivered with authority or emotional weight, mixed with true claims and false ones side by side, and they're coming at you in real time while you're also tracking the social landscape of the room. Your boss is the one arguing. Or the conclusion is one you desperately want to be true. Or you're tired and the argument sounds reasonable enough and you just want to agree and move on.

Getting to Level 2 means being able to apply your tools when it actually costs something. This requires practice in real contexts: actual conversations, actual news stories, actual decisions with actual stakes. The technique that works is retrospective analysis. After any significant argument or decision, replay it: What kind of reasoning was being used? Were the premises actually established, or just asserted? Was any fallacy lurking? Did I feel the pull of motivated reasoning and give in? This is slower than in-the-moment application, but it's how you build the pattern recognition that makes Level 2 possible.

Level 3: Habit

Level 3 is what the Paul-Elder framework calls intellectual traits — intellectual humility, intellectual courage, intellectual empathy, intellectual integrity. At this level, careful reasoning isn't a technique you switch on; it's how your mind naturally moves. You notice vagueness before you consciously run the clarity check. You feel the tug of confirmation bias as a kind of resistance. You catch yourself starting to believe something just because it fits your worldview, and you pause.

Most people who reach Level 3 in anything spent years at Level 2 first. There are no real shortcuts. What you can do is create conditions that speed things up: seek out smart people who disagree with you, read things that make you uncomfortable, ask yourself regularly "what would prove me wrong," and find communities where reasoning is valued and practiced out loud.

The Disposition Problem, Revisited

We raised this early in the course: critical thinking skills and critical thinking dispositions are not the same thing. Skills are things you can do. Dispositions are things you're inclined to do, and that's where most people hit the wall. John Dewey called it reflective thinking (the active, persistent, careful consideration of your own beliefs), and he knew that education systems kept focusing on skills and forgetting about dispositions.

You can memorize every fallacy in existence and still commit them all, because when it's costly to apply what you know, you won't. Intellectual honesty — actually following the argument where it goes, even when you hate where it's pointing — is a character trait, not just a cognitive skill.

The real outcome of this course isn't the skills themselves (though those are real). It's a shift in what you're like as a thinker. Specifically:

More intellectually humble. You've studied how reasoning breaks down, including your own. You hold conclusions loosely, proportional to evidence, and you actually change your mind when the evidence shifts. You don't confuse "I believe this passionately" with "this is probably true."

More intellectually honest. You apply your critical tools symmetrically, to arguments you like as much as arguments you dislike. You don't deploy skepticism like a weapon against opponents and then let your guard down for allies.

More persistent. You don't escape to "it's all subjective anyway" when questions get hard. Some questions really do lack clean answers, but intellectual persistence means engaging with the difficulty rather than walking away.

More curious than defensive. When someone challenges your view, your gut response is interest, not threat. You ask what they know that you don't, rather than planning your rebuttal.

These aren't fixed personality traits. They're habits of mind, built through deliberate practice. Every time you catch yourself mid-fallacy and stop, you're building one. Every time you update a belief because of evidence you didn't want to find, you're building another.

Remember: The goal here is not to make you a better debater — it's to make you a better thinker. Those aren't the same thing. A good debater wins. A good thinker finds the truth.

Common Regressions: Where Training Can Go Wrong

Here's the part that most critical thinking courses skip over: getting some education in logic can make you worse in specific, predictable ways before it makes you better. Call it the Level 1 trap.

Fallacy labeling as a conversation-ender. The most common one. Someone learns the fallacy names and starts using them as a club. "That's an ad hominem." "You're begging the question." Case closed. The problem is that naming a fallacy without explaining why something is that fallacy — without addressing the underlying argument — is just a different rhetorical move. It performs sophistication without demonstrating it.

The rule: naming a fallacy is the beginning of your analysis, not the end. Show the work. Explain why the argument fails and what a better version would look like.

Demanding certainty before making any move. Logic training makes you sensitive to weak evidence, which is good. But overcalibrated skepticism can turn into decision paralysis — or, worse, motivated paralysis, where you selectively apply the "insufficient evidence" standard to conclusions you're avoiding. Most real decisions happen in the realm of probability, not certainty. The question isn't "is this proven beyond all doubt?" It's "does this evidence support this conclusion better than the alternatives?"

Becoming cynical instead of discerning. There's a crucial difference. A skeptic examines claims carefully and believes in proportion to evidence. A cynic rejects everything, which is actually intellectually lazy: it's cheaper to dismiss universally than to evaluate each thing on its merits. If training in fallacies and biases leaves you thinking "every argument is manipulation and you can't trust any source," that's not sophisticated reasoning. It's a different kind of intellectual failure, and it's one that bad-faith operators actively work to create in their targets because it's paralyzing.

Using logic as social signaling. Critical thinking, done right, is collaborative — the goal is better answers together. But it can degrade into a status game where people perform rigor rather than practice it. You see this in online communities where "logic and reason" get invoked constantly and genuinely sloppy reasoning goes unchallenged as long as it produces the right conclusions.

Warning: Being more interested in winning arguments than finding truth is the single biggest obstacle to growing as a reasoner. It's also brutally hard to notice in yourself. Do periodic check-ins: When did I last genuinely change my mind because of an argument? When did I last publicly acknowledge that someone else's point was better than mine?

Resources for Continued Learning

This course has given you a foundation. Here's where to go next, depending on which direction pulls you.

For the philosophy and formal logic track:

- A Concise Introduction to Logic by Patrick Hurley: the standard undergraduate text, comprehensive and challenging

- Language, Proof and Logic by Jon Barwise and John Etchemendy — takes you into symbolic logic properly

- The Internet Encyclopedia of Philosophy (iep.utm.edu) and the Stanford Encyclopedia of Philosophy (plato.stanford.edu) — both free, peer-reviewed, and excellent entry points for going deeper on any topic from this course

For the cognitive science and decision-making track:

- Thinking, Fast and Slow by Daniel Kahneman — the definitive synthesis of research on cognitive biases

- The Undoing Project by Michael Lewis — the human story of the Kahneman-Tversky collaboration

- Noise: A Flaw in Human Judgment by Kahneman, Sibony, and Sunstein — the sequel, focused on judgment variability that gets overlooked when we focus only on bias

- Superforecasting: The Art and Science of Prediction by Philip Tetlock and Dan Gardner — empirically grounded guidance on calibrating beliefs and making better predictions

For the statistics and scientific reasoning track:

- The Art of Statistics by David Spiegelhalter — the best general-audience introduction to statistical thinking available

- Statistics Done Wrong by Alex Reinhart (also free at statisticsdonewrong.com): a practical guide to the most common statistical errors in the scientific literature

- How to Think About Weird Things by Theodore Schick and Lewis Vaughn — critical thinking applied to scientific claims, including the fringe stuff

For rhetoric and argumentation:

- Thank You for Arguing by Jay Heinrichs — genuinely fun and useful guide to classical rhetoric in contemporary contexts

- The Elements of Style by Strunk and White — still the best short text on clear writing, which is inseparable from clear thinking

- Arguing Well by John Shand — accessible and practical treatment of argumentation

For staying current:

- Julia Galef's work, including The Scout Mindset, which frames the disposition problem beautifully

- Follow the ongoing Replication Crisis conversation in psychology — search "Replication Crisis" and watch what holds up in the scientific literature

Ongoing practice: The single best exercise is reading serious journalism and commentary in domains where you're genuinely uncertain, then doing deliberate analysis. Pick three pieces a week, identify the main arguments, ask if they hold up. Keep a reasoning journal — write down your beliefs on contested questions along with your confidence level, then revisit them in six months. The act of committing predictions to writing, before you know how things turn out, is one of the most reliable ways to calibrate your judgment.
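If you want a number to track when you revisit the journal, one common option is the Brier score: the average squared gap between the confidence you wrote down and what actually happened. The sketch below is only an illustration. The scoring rule is not part of this course, and the journal entries are made-up placeholder values, not real data.

```python
def brier_score(entries):
    """Mean squared gap between stated confidence and outcome (0.0 is perfect, lower is better)."""
    total = sum(
        (confidence - (1.0 if happened else 0.0)) ** 2
        for confidence, happened in entries
    )
    return total / len(entries)


# Hypothetical journal entries: (confidence that the claim is true, whether it turned out true)
journal = [
    (0.9, True),
    (0.7, False),
    (0.6, True),
    (0.8, True),
]

score = brier_score(journal)
print(round(score, 3))  # 0.175
```

For comparison, answering 50% on every question scores 0.25, so a score well below that suggests your stated confidence carries real information. With only a handful of entries the number is noisy, so treat it as a rough signal rather than a verdict.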

The Honest Bottom Line

Here's something this entire course has been building toward, and it's worth saying plainly at the end.

Even with everything you've learned here, you will make reasoning errors. Skilled rhetoricians will manipulate you. You will believe things that turn out to be false. You will hold onto conclusions longer than evidence justifies. You will fail to apply your critical tools precisely when the stakes are highest — because that's exactly when motivated reasoning is strongest.

This is not pessimism. It's a realistic assessment.

The alternative — believing that the right education produces reliable, unbiased reasoners — is itself a well-documented cognitive mistake. The research on debiasing is sobering: most interventions that improve critical thinking abilities show modest, context-dependent effects. The improvements are real, but they're limited. Education makes you better, not immune.

What this course has given you is better tools, not better immunity. And tools matter — they don't guarantee good outcomes, but they substantially shift the odds in your favor. Over thousands of decisions, across a lifetime of thinking, those odds compound. You'll be wrong less often. When you are wrong, you'll know how you went wrong, which means you can correct faster.

The core claim of this entire course has been that clear thinking is a learnable craft — not something you're born with or lack, not a side effect of raw intelligence, but a set of specific, nameable skills and intellectual habits that can be deliberately developed through practice. That's true. The skills are real. The habits take time. And the ongoing humility about your own limitations isn't a defeat — it's actually part of what good reasoning looks like.

The best reasoners you'll encounter are not the most certain. They're the ones who are most precisely calibrated, most genuinely curious about where they're wrong, most willing to follow evidence into uncomfortable places. They've absorbed at a deep level what Socrates understood: that wisdom begins with knowing what you don't know.

That's not a destination. It's a direction. Point yourself at it, and keep walking.

The tools you've built here are durable and real. Use them generously — on your own thinking first, on the world's arguments second. The order matters.
