SQL vs NoSQL: Which Database Type to Choose

By this point in the course, you've developed genuine fluency with relational databases. You know how tables relate to each other, you can write a JOIN without flinching, you understand why normalization matters, and you've got a reasonable feel for what makes a query fast or slow. You've also learned the most important lesson: measure first, decide second. Don't add indexes because someone told you to. Don't normalize a schema to five levels because a textbook says so. Do what the data and the actual queries demand.

But here's the thing nobody tells you when you're learning SQL: the database world isn't monolithic. There's an entire category of databases that reject the relational model entirely — sometimes for good reasons, sometimes out of frustration, sometimes because a specific use case genuinely does work better with a different architecture. These are called NoSQL databases, and they've created a lot of confusion in the industry. Some people treat them as the future that makes SQL obsolete. Others dismiss them as a fad that's caused more problems than it solved. The truth is more practical: each approach solves different problems well, and knowing which one to reach for is a skill that'll make you dangerous in any data conversation.

This section will give you a clear framework for understanding when SQL is the right tool and when NoSQL actually is. Not vendor cheerleading in either direction — just a mental model you can use when someone asks "should we use Postgres or MongoDB for this?"

Where NoSQL Came From (And Why That Matters)

Here's something that gets lost in the abstract debate: NoSQL wasn't born from a theoretical argument about data models. It was born from companies hitting real, specific scaling problems around 2006.

Google was serving billions of searches. Amazon was running a massive e-commerce operation. Facebook was growing exponentially. These companies needed to store staggering amounts of data and serve it fast across distributed clusters of commodity servers. Traditional relational databases — designed back when a single powerful server was the norm — worked brilliantly until they hit the wall. You can only make one server so big before costs explode and you hit physical limits.

So Google built Bigtable. Amazon built Dynamo. Facebook built Cassandra (and later open-sourced it). These weren't "NoSQL is theoretically superior" projects. They were "here's how we solve our specific problem" engineering. And once those systems were described in papers and made available, other companies building similar problems borrowed the ideas.

The term "NoSQL" — actually coined casually at a meetup in 2009 and not even intended as a formal label — stuck. IBM notes that "NoSQL" is sometimes better understood as "Not Only SQL," since many NoSQL databases actually support some SQL-like query capabilities.

So when you're evaluating whether NoSQL is right for your problem, context matters. You're probably not serving 2 billion Facebook users. You might be, but the odds are different. The scaling problems that drove these tools into existence are real, but they're not universal.

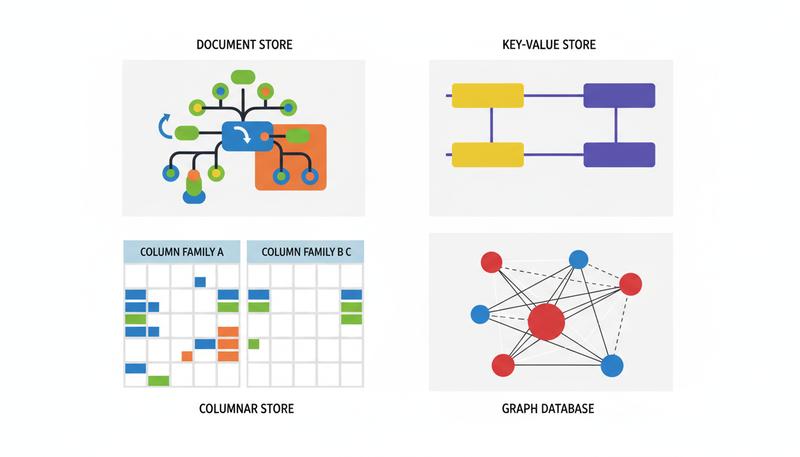

The Four NoSQL Data Models

Here's the first and most important misconception to clear up: "NoSQL" isn't one thing. It's a family of completely different approaches to storing data, each optimized for wildly different problems. People talk about "NoSQL vs. SQL" as if NoSQL is a single unified alternative, when it's really four distinct paradigms that happen to share a label.

Document Stores (e.g., MongoDB, CouchDB)

Document databases store data as self-contained documents — usually JSON or BSON objects. Each document can have its own structure. Documents can nest other documents inside them. Think of it as database objects that look like the objects in your application code.

Remember Mario's pizza restaurant from earlier in the course? In a relational database, an order gets spread across three tables: orders, order_items, and maybe order_customizations. You have to JOIN them back together to understand a complete order.

In a document database, the entire order — header, line items, special instructions, delivery address, everything — lives in one document:

{

"order_id": "ORD-2847",

"customer": "Sofia Chen",

"placed_at": "2024-01-15T19:23:00Z",

"items": [

{

"name": "Margherita",

"size": "large",

"quantity": 1,

"customizations": ["extra basil", "thin crust"]

},

{

"name": "Garlic bread",

"quantity": 2

}

],

"delivery_address": {

"street": "42 Oak Avenue",

"city": "Portland"

},

"total": 34.50

}

This is how most application developers already think about data. The document maps naturally to the object in your code. You don't have to JOIN five tables to render an order receipt — you just retrieve the document and you've got everything.

MongoDB describes this as the "document model format that developers use in their application code," and that alignment between storage format and application logic is genuinely valuable when your primary use case is reading and writing entire self-contained records to an application.

When document stores shine: Content management systems (blog posts, product catalogs), user profiles with variable attributes, e-commerce orders, mobile app backends. Anywhere you're primarily reading and writing complete, self-contained records.

When they struggle: This is where it gets awkward — when you need complex relationships across document types. The query "find all customers who ordered a Margherita in the last 30 days" is straightforward in SQL and requires more gymnastics in MongoDB.

Key-Value Stores (e.g., Redis, DynamoDB, Memcached)

The simplest NoSQL model, and probably the one you'll actually use in real life. A key-value store is essentially a giant, highly optimized dictionary. You store a value under a key, and you retrieve it by that key. That's the entire interface.

user:session:abc123 → { "user_id": 44, "cart": [...], "expires": 1705348800 }

rate_limit:ip:192.168.1.1 → 47

product:price:SKU-8847 → 24.99

You're not querying by attributes within the value. You're not doing joins. You look things up by key, and you get the value back, usually in microseconds.

When key-value stores shine: Session management, caching (this is where Redis is genuinely spectacular — it keeps everything in memory and serves lookups faster than you'd think possible), rate limiting, leaderboards, real-time features where microsecond response times matter.

When they struggle: The moment you need to query by anything other than the key. "Find all users whose session expires in the next hour" isn't what key-value stores are for.

Here's something important that surprised me when I first learned it: Redis, the most popular key-value store, isn't usually meant to replace a relational database. It's meant to work alongside one. The relational database is your source of truth; Redis is the hot cache sitting in front of it, serving the 99% of requests that don't need to hit the database. They're not competitors — they're teammates.

Column-Family Stores (e.g., Apache Cassandra, HBase)

This one is the conceptually trickiest, and honestly, the name doesn't help clarify it. Column-family stores aren't just "relational databases with columns" — they're a fundamentally different architecture that happens to organize data around columns.

In a column-family database, data is organized into rows identified by a key, but each row can have completely different columns. Columns are grouped into "families." The whole design is optimized for writing and reading massive volumes of time-series or sequential data across distributed clusters with no single point of failure.

Cassandra was designed by Facebook for powering their messaging/inbox system and handling massive write throughput from their message platform, not specifically for inbox search — they needed to handle billions of writes from millions of users simultaneously, and traditional databases just couldn't keep up. Netflix uses it to track what you've watched and when. Apple uses it. The pattern is always the same: companies operating at massive scale where data is written once and read many times in sequential patterns.

When column-family stores shine: IoT sensor data (millions of writes per second), time-series data (logs, metrics, financial tick data), activity feeds, any workload where you're writing enormous volumes of sequential data and need it distributed across many servers without data loss.

When they struggle: Ad-hoc queries, complex relationships, anything that requires the flexibility of a rich query language. Cassandra's query model is deliberately constrained — you design your data model around the specific queries you'll run, and changing that later is painful. This is intentional; it's how they achieve the write throughput they're famous for.

Graph Databases (e.g., Neo4j, Amazon Neptune)

Graph databases store data as nodes (entities) and edges (relationships between entities). If you've ever been modeling a social network in relational terms and thought "this feels wrong," graph databases are the answer.

In SQL, you'd represent "Sofia is friends with Marcus, and Marcus follows Elena, and Elena works at TechCorp which partners with..." as multiple tables with foreign keys pointing at each other. To answer "find all friends-of-friends who live in Portland," you'd write nested queries or multiple JOINs.

In a graph database, that network of connections is stored as it naturally exists — as a graph. Querying "find all friends-of-friends who live in Portland" is a graph traversal, and graph databases are engineered specifically to do that efficiently.

According to AWS, graph databases "use nodes to store data entities and edges to store relationships between entities" and are "purpose-built to store and navigate relationships" — which relational databases represent with foreign keys but weren't really optimized to traverse at scale.

When graph databases shine: Social networks, fraud detection (fraudsters often form suspicious connection patterns), recommendation engines ("people who liked this also liked..."), knowledge graphs, network and IT infrastructure analysis.

When they struggle: Graph databases are specialized tools. They're phenomenal at what they do and awkward at everything else. If you're not genuinely doing relationship traversal as your core workload, you probably don't need one.

graph TD

A[Your Data Problem] --> B{What's the core pattern?}

B --> C[Structured records with relationships]

B --> D[Self-contained documents]

B --> E[Simple lookup by key]

B --> F[Massive sequential writes]

B --> G[Relationship traversal]

C --> H[Relational / SQL]

D --> I[Document Store e.g. MongoDB]

E --> J[Key-Value Store e.g. Redis]

F --> K[Column-Family e.g. Cassandra]

G --> L[Graph DB e.g. Neo4j]

ACID vs. BASE: The Trade-off That Defined a Generation

Back in Section 10, we covered ACID — the four properties that make relational transactions reliable: Atomicity, Consistency, Isolation, Durability. If you transfer money from checking to savings, ACID guarantees the entire transaction succeeds or nothing happens. No half-transfers. No mystery money disappearing into the ether.

Early NoSQL systems made a deliberate trade-off: they relaxed some ACID guarantees in exchange for speed and the ability to scale horizontally. This philosophical shift got described with a (somewhat strained) acronym: BASE.

- Basically Available — the system responds to requests, even if the response isn't perfectly current

- Soft state — the system's state can change over time, even without new input, as distributed nodes sync up

- Eventually consistent — the system will eventually reach a consistent state, but not necessarily immediately

"Eventually consistent" sounds terrifying if you're used to ACID. But consider your actual use case. If you post a photo on Instagram and your friend in another country sees it 200 milliseconds later than someone in your city — does that matter? Not really. The system is eventually consistent, and "eventually" here means fractions of a second. For social media feeds, caches, and activity streams, eventual consistency is a perfectly reasonable trade-off for massive scalability.

For a bank transfer? No. You need ACID.

Remember: The BASE vs. ACID distinction isn't about which is "better" — it's about which trade-off your application can live with. The right answer depends entirely on what you're building.

Here's the 2024 update, though: the lines have blurred considerably. Many NoSQL databases have added ACID-compliant transactions in recent years. MongoDB added multi-document ACID transactions. AWS DynamoDB has transaction support. The old framing of "NoSQL = eventual consistency, SQL = ACID" is now an oversimplification. IBM notes that some NoSQL databases — including MongoDB and CouchDB — can integrate and follow ACID rules. The ecosystem has matured, and the trade-offs are now more subtle.

CAP Theorem: Why Distributed Systems Have to Make Hard Choices

No discussion of NoSQL is complete without at least a brief encounter with CAP theorem. It sounds scarier than it actually is, but it's genuinely useful for understanding why different databases make the architectural choices they do.

CAP theorem, proven by Eric Brewer and later formalized by Gilbert and Lynch, states that a distributed data system can only guarantee two of the following three properties at any given time:

- Consistency (C) — every read returns the most recent write (or an error). All nodes see the same data at the same time.

- Availability (A) — every request gets a response (not necessarily the most recent data, but a response). The system never refuses a request.

- Partition Tolerance (P) — the system keeps working even if messages between nodes get lost or delayed (i.e., the network experiences a "partition").

The reason you can only have two of the three is that partition tolerance is essentially non-negotiable in any real distributed system — networks fail, packets get lost, and a database cluster that collapses entirely whenever there's any network hiccup is useless. So the practical choice is really between CP (consistent but might become unavailable during a network failure) and AP (always available but might serve stale data during a network failure).

CP databases: HBase, Zookeeper, some configurations of MongoDB. When a network partition happens, they refuse to serve potentially stale data — they return an error instead. Consistent but sometimes unavailable.

AP databases: Cassandra, CouchDB, DynamoDB (in its default configuration). When a partition happens, they keep responding — but might serve you data that's not fully up-to-date. Available but not always consistent.

Relational databases traditionally didn't worry much about partition tolerance because they weren't designed to be distributed across multiple nodes — they lived on a single server. A single-server Postgres doesn't need to worry about network partitions between nodes. As relational databases have added clustering and replication features, they've had to grapple with the same CAP trade-offs.

You don't need to memorize the detailed theory. Knowing that "this database prioritizes availability over strict consistency" is a real architectural statement — not a bug, not a failure — is the important part.

Horizontal vs. Vertical Scaling: Why It Matters

One of the most concrete differences between traditional SQL and NoSQL databases is how they grow when you need more capacity.

Vertical scaling means making your single server more powerful — more CPU cores, more RAM, faster storage. It's simple (just pay for a bigger machine) but has limits. Eventually you hit the ceiling of what any single machine can do, and that ceiling gets very expensive very fast at the high end.

Horizontal scaling means adding more servers to a cluster. Instead of one powerful server, you have ten, fifty, or a thousand commodity servers working together. This is how Google stores your Gmail — not on one giant computer, but spread across thousands of ordinary servers.

NoSQL databases were designed with horizontal scaling as the primary architecture. Cassandra treats every node in a cluster as equal — there's no "primary" server that everything flows through. You can add a new node to a Cassandra cluster while it's running in production and it automatically starts taking its share of the load. That's elegant horizontal scaling.

Traditional relational databases scale vertically well and horizontally awkwardly. Sharding a Postgres database — splitting your data across multiple servers — is doable but requires significant engineering effort and introduces complexity. It's not Postgres's native strength.

Tip: If you're a small or medium-sized business, you'll almost certainly never need to worry about horizontal scaling as a deciding factor. The vast majority of organizations run entire businesses on a single well-tuned Postgres instance. Don't let the fact that Netflix uses Cassandra influence your decision about storing a client database.

The Honest Assessment: The Gap Has Narrowed

Here's what a lot of NoSQL evangelism from the 2010s conveniently ignores: relational databases didn't sit still.

While MongoDB and Cassandra were adding features and reliability, PostgreSQL was adding native JSON column types, full-text search, JSON path queries, and enough flexibility that you can store and query semi-structured data reasonably well inside a relational database. MySQL added JSON support. SQL Server added document-style features. Relational databases grew more flexible and capable.

Meanwhile, NoSQL databases added ACID transactions, better query languages, and more sophisticated tooling. They grew more structured.

AWS describes relational databases as efficient, flexible, and "easily accessed by any application," while noting that NoSQL databases are "optimized for applications that require large data volume, low latency, and flexible data models." The honest truth is that both categories have been borrowing features from each other for a decade.

This doesn't mean the distinction doesn't matter — it does. But it means the decision isn't as binary as the old "SQL vs. NoSQL" discourse implied. You're not choosing between a dinosaur and the future. You're choosing between mature tools with different default strengths.

Warning: Be suspicious of any recommendation to switch from SQL to NoSQL because "NoSQL is more modern" or "SQL doesn't scale." These are not complete arguments. SQL scales fine for most workloads, and "more modern" is not an engineering criterion.

The Decision Framework: When to Use Which

Let's get concrete. Here's an honest guide to which database type fits which workload:

Choose a relational database (PostgreSQL, MySQL, SQLite) when:

- Your data has clear, stable relationships between entities

- You need strict ACID compliance — financial transactions, anything where half-complete writes are unacceptable

- Your schema is relatively stable, or you can afford the discipline of migrations

- You need powerful ad-hoc querying and analytics (SQL's expressiveness is still unmatched)

- Your team knows SQL well and your NoSQL expertise is thin

- You're a small-to-medium application that doesn't face Google-scale traffic

- You need joins across multiple entity types as a core operation

Choose a document database (MongoDB) when:

- Your data is naturally hierarchical and self-contained (product catalogs, user profiles, blog posts)

- Your schema changes frequently and you can't predict its shape well in advance

- You're building a backend for a mobile or web app where you read/write complete records

- Your team is JavaScript-heavy and the JSON-native model feels natural

- You don't need complex cross-document joins as a primary operation

Choose a key-value store (Redis, DynamoDB) when:

- You need caching — full stop, Redis is spectacular at this

- You're managing user sessions, rate limiting, or real-time counters

- Your access pattern is "look up X by its ID" with no complex querying

- You need sub-millisecond response times

Choose a column-family store (Cassandra) when:

- You're writing billions of events — IoT telemetry, logs, metrics

- You need to distribute writes across many data centers with no single point of failure

- Your data is time-series or sequential in nature

- You're at genuine internet scale (Cassandra is engineering-intensive — don't choose it unless you need what only it provides)

Choose a graph database (Neo4j) when:

- Your core business logic involves traversing relationships — social networks, recommendations, fraud detection

- The relationship between entities is as important as the entities themselves

- You're running pathfinding or connectivity queries that would require many recursive JOINs in SQL

Here's that framework as a quick reference table:

| Use Case | Best Fit | Why |

|---|---|---|

| Financial transactions | Relational (SQL) | ACID compliance is non-negotiable |

| User profiles with variable attributes | Document (MongoDB) | Flexible schema, hierarchical data |

| Session management, caching | Key-Value (Redis) | Microsecond lookups, TTL support |

| IoT sensor data, time-series | Column-Family (Cassandra) | High write throughput, horizontal scale |

| Social network friend graphs | Graph (Neo4j) | Relationship traversal is the workload |

| E-commerce orders and inventory | Relational or Document | Depends on relationship complexity |

| Real-time leaderboards | Key-Value (Redis) | Sorted sets, in-memory speed |

| Content management system | Document or Relational | Both work; schema stability is the tiebreaker |

| Analytics and reporting | Relational (SQL) | SQL expressiveness, JOINs, GROUP BY |

| Fraud detection patterns | Graph (Neo4j) | Connection pattern analysis |

The "Use What Your Team Knows" Factor

Here's something the technical literature usually skips over because it feels too human to mention in an engineering context: organizational knowledge is a legitimate technical constraint.

If your entire team has five years of PostgreSQL experience and zero production experience with Cassandra, choosing Cassandra for a new project isn't a bold technical decision — it's a risk you're taking with your team's ability to debug at 3 a.m. when something goes wrong. The best database for your use case is often the one your team can actually operate, tune, monitor, and recover from when disaster strikes.

This isn't an excuse to never learn new things. It's a recognition that expertise has real value, and switching database paradigms mid-project is one of the most expensive technical decisions you can make. There are plenty of companies running successful products on "boring" PostgreSQL because they know PostgreSQL cold, and that operational confidence is worth more than any theoretical advantage of a trendier alternative.

IBM explicitly acknowledges this as a SQL advantage: "Because SQL databases have a long history now, they have huge communities, and many examples of their stable codebases online. There are many experts available to support SQL." Community size and available expertise aren't soft factors — they translate directly into how fast you can solve problems and how many options you have when things break.

The flip side: if you're starting a new team or project from scratch and you have equal access to expertise in both worlds, then the technical merits of the use case should drive the decision.

Putting It Together

The SQL vs. NoSQL framing has always been a little bit of a false war. The question was never "which is better" — it was always "which is better for this specific situation."

Relational databases are still the right default for the vast majority of applications. They're mature, expressive, well-understood, and far more flexible than their critics gave them credit for. If you're not sure what database to use for something new, starting with PostgreSQL and switching later if you hit a genuine limitation is almost always the right call.

NoSQL databases are excellent solutions to specific, well-defined problems. Document stores genuinely shine for hierarchical, schema-flexible data. Redis genuinely is the best tool for caching and real-time data structures. Cassandra genuinely handles internet-scale write throughput in ways PostgreSQL doesn't. These aren't marketing claims — they're the outcome of these tools being designed from the ground up for specific workloads.

The skill — and this is one of those genuinely human judgment calls that no tool makes for you — is knowing which problem you actually have. Which is exactly what the rest of this course has been equipping you to figure out.

Only visible to you

Sign in to take notes.