How Regular Computers Work and Why It Matters

Everything Starts with a Switch

So let's look at that wall. Here's the most important sentence for understanding why quantum computers matter: everything your computer does (every video, every email, every spreadsheet, every game) ultimately comes down to flipping tiny switches. That's it. That's the secret. The entire digital world, all of it, is built on top of an almost absurdly simple idea.

These switches are called bits, short for binary digits. Each bit can be in one of exactly two states: 0 or 1. Off or on. No or yes. False or true. The simplest possible choice a machine can make. In modern computers, these switches are physical devices called transistors — microscopic components etched into silicon chips, each one capable of being switched on or off by an electrical signal. A single bit is useless by itself — it can only tell you one thing: is this switch on or off? But chain enough bits together, and something remarkable happens. Eight bits give you 256 possible combinations. Thirty-two bits give you over four billion. By the time you're working with the billions of bits packed inside a modern processor, you can represent anything — a photograph, a symphony, a legal contract, the moves in a chess game.
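The jump from 256 to over four billion is just repeated doubling: every extra bit doubles the number of patterns, so n bits give 2^n combinations. Here is a minimal Python sketch of that arithmetic (the function name `combinations_for_bits` is ours, chosen purely for illustration):

```python
def combinations_for_bits(n_bits: int) -> int:
    """Return how many distinct on/off patterns n_bits switches can form."""
    return 2 ** n_bits


for n in (1, 8, 32):
    print(f"{n} bits -> {combinations_for_bits(n):,} combinations")
# 1 bits -> 2 combinations
# 8 bits -> 256 combinations
# 32 bits -> 4,294,967,296 combinations
```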

Here's the beautiful part: once you can represent numbers, you can represent everything. Letters become numbers (the letter "A" is traditionally stored as the number 65). Colors become numbers (a particular shade of blue is a combination of three numbers representing red, green, and blue values). Sound becomes numbers (a rapid sequence of numbers representing the amplitude of a sound wave at each moment in time). Instructions telling the computer what to do? Also numbers.
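To see this for yourself, here is a small Python sketch. The letter code is the standard one; the particular shade of blue and the sound samples are made-up values for illustration, and `to_bits` is a helper name of our own:

```python
# Letters: each character has a numeric code (65 for "A").
letter_code = ord("A")

# Colors: one common scheme stores red, green, and blue as three numbers
# from 0 to 255. This particular shade is an arbitrary example.
some_blue = (30, 144, 255)

# Sound: a short run of amplitude samples (made-up values).
samples = [0, 12, 25, 12, 0, -12, -25, -12]


def to_bits(number: int, width: int = 8) -> str:
    """Show a non-negative number as a fixed-width string of 0s and 1s."""
    return format(number, f"0{width}b")


print(letter_code, to_bits(letter_code))   # 65 01000001
print([to_bits(c) for c in some_blue])     # three 8-bit patterns, one per color channel
```

Whatever the data started out as, what ends up stored is a pattern of switches like `01000001`.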

It's numbers all the way down. And numbers are built from bits. And bits are just switches.

Logic Gates: Where Bits Learn to Think

Storing information is one thing. Actually computing something with it is another. This is where logic gates come in.

A logic gate is a circuit that takes one or two bits as input and produces one bit as output, following simple rules. The AND gate, for example, outputs a 1 only if both its inputs are 1. The OR gate outputs a 1 if either input is 1. The NOT gate simply flips its input: 0 becomes 1, and 1 becomes 0.
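The three rules just described are short enough to write out in a few lines of Python. This is only a sketch of the logic, not of the electronics; the function names are our own:

```python
def and_gate(a: int, b: int) -> int:
    """Output 1 only if both inputs are 1."""
    return a & b


def or_gate(a: int, b: int) -> int:
    """Output 1 if either input is 1."""
    return a | b


def not_gate(a: int) -> int:
    """Flip the input: 0 becomes 1, and 1 becomes 0."""
    return 1 - a


# Print the full truth table for the two-input gates.
print("a b | AND OR")
for a in (0, 1):
    for b in (0, 1):
        print(f"{a} {b} |  {and_gate(a, b)}   {or_gate(a, b)}")

print("NOT 0 =", not_gate(0), " NOT 1 =", not_gate(1))
```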

These rules seem almost too simple to be useful. But combine millions of them in the right patterns, and you get circuits that can add numbers, compare values, remember information, and execute instructions. The entire mathematical capability of a modern processor (its ability to render 3D graphics, simulate physics, run machine learning algorithms) emerges from vast networks of these elementary gates, each one making a tiny, dumb decision about one or two bits.
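To make "combine them in the right patterns" concrete, here is a sketch of one such pattern: an adder built from nothing but AND, OR, and NOT. The wiring shown (a textbook full adder chained bit by bit) is one standard way to do it, offered as an illustration rather than a description of how any particular chip is laid out. All function names are ours.

```python
def and_gate(a, b):
    return a & b


def or_gate(a, b):
    return a | b


def not_gate(a):
    return 1 - a


def xor_gate(a, b):
    # "One or the other, but not both", built only from AND, OR, and NOT.
    return and_gate(or_gate(a, b), not_gate(and_gate(a, b)))


def full_adder(a, b, carry_in):
    """Add three bits; return (sum bit, carry-out bit)."""
    partial = xor_gate(a, b)
    total = xor_gate(partial, carry_in)
    carry_out = or_gate(and_gate(a, b), and_gate(partial, carry_in))
    return total, carry_out


def add_bits(x_bits, y_bits):
    """Add two equal-length bit lists, least significant bit first."""
    carry = 0
    result = []
    for a, b in zip(x_bits, y_bits):
        s, carry = full_adder(a, b, carry)
        result.append(s)
    result.append(carry)  # the final carry becomes the top bit
    return result


# 3 is binary 011 and 5 is binary 101, written here lowest bit first.
print(add_bits([1, 1, 0], [1, 0, 1]))  # [0, 0, 0, 1], which is binary 1000 = 8
```

No single gate in that code knows anything about arithmetic. Addition only appears once the gates are wired together.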

graph LR

A[Bit Input 1] --> G[AND Gate]

B[Bit Input 2] --> G

G --> C[Output: 1 only if both inputs are 1]

D[Bit Input] --> H[NOT Gate]

H --> E[Output: Flipped bit]

F[Bit Input 1] --> I[OR Gate]

J[Bit Input 2] --> I

I --> K[Output: 1 if either input is 1]

When you add up enough of these gates — and modern chips have billions of transistors, each one participating in gate operations — you get a machine capable of executing billions of operations per second. A modern laptop can perform around a hundred billion simple operations every second. That's genuinely staggering when you think about what each operation actually is: a tiny switch being checked or flipped.

Moore's Law: Sixty Years of Getting Faster

For most of computing's history, speed has been almost free. Build the chip, make the transistors smaller, pack more of them onto the silicon, and suddenly the computer gets faster and cheaper. This pattern was famously described by Intel co-founder Gordon Moore, who predicted in 1965 that the number of transistors on a chip would double every year and revised the pace in 1975 to a doubling approximately every two years, leading to regular doublings in computing power.
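Doubling compounds faster than intuition suggests. As a rough illustration, here is what a perfectly steady two-year doubling would add up to over sixty years. This is an idealized model, not historical transistor data, and `growth_factor` is a name of our own:

```python
def growth_factor(years: float, doubling_period_years: float = 2.0) -> float:
    """How many times larger a quantity gets if it doubles every period."""
    return 2 ** (years / doubling_period_years)


# Sixty years at one doubling every two years is thirty doublings.
print(f"{growth_factor(60):,.0f}x")  # 1,073,741,824x
```

Thirty doublings is a factor of about a billion, which gives a sense of why the jump from room-sized machines to smartphones was possible at all.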

This trend held remarkably well for decades, driving the transformation from room-sized computers that cost millions of dollars to smartphones more powerful than the machines that sent humans to the Moon. It's one of the most sustained technological improvements in human history.

But there's a problem. Transistors can only get so small. The smallest features on today's chips are just a few nanometers across, and some of their insulating layers are only a handful of atoms thick. At that scale, something unsettling starts to happen. In classical physics, a particle that doesn't have enough energy to cross a barrier simply can't cross it. But in quantum physics, particles can do something that has no classical equivalent: they can tunnel straight through barriers that should be impassable. This is called quantum tunneling, and it's completely real. When your transistor's barrier is only a handful of atoms thick, electrons stop behaving like obedient switches and start leaking through the walls you're trying to use to contain them. The switch stops being a reliable switch. (There's a pleasing irony here: the quantum weirdness we're about to harness for computing is the same quantum weirdness that's breaking our classical computers at small scales.)

Heat becomes a serious engineering challenge at these sizes too. And taken together, the laws of physics are starting to push back hard.

More importantly, there's a different kind of wall — not a hardware wall, but a mathematical one. And this wall doesn't bend for better manufacturing techniques.

The Wall That Hardware Can't Fix

Here's where things get interesting. And humbling.

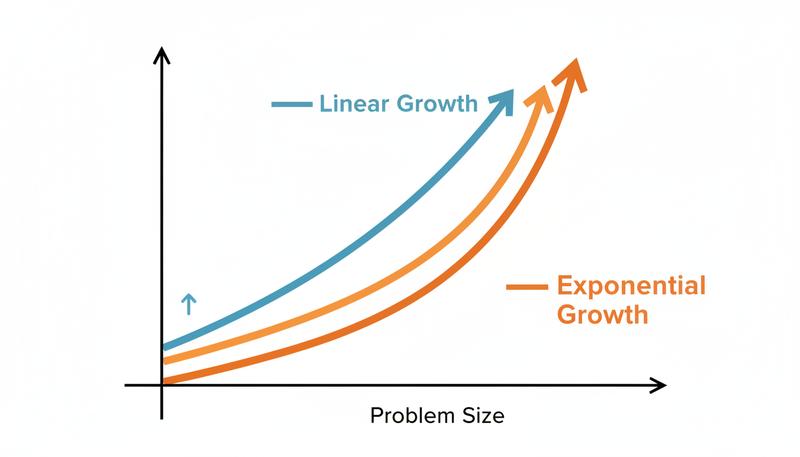

Some problems aren't just hard for computers — they're hard in a very specific, mathematical way. The difficulty doesn't scale the way you'd expect. It doesn't just get worse as the problem gets bigger. It explodes.

Take a simple example: you have a list of cities and you want to find the shortest route that visits all of them exactly once. This is the classic traveling salesman problem. With 5 cities, there are only 120 possible orderings to check. With 20 cities, there are over two quintillion. With 65 cities, the number of possible routes exceeds the number of atoms in the observable universe.
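You can check these numbers yourself. The sketch below counts every ordering of the cities as a separate route, so n cities give n! (n factorial) routes. The figure of 10^80 atoms in the observable universe is a commonly quoted rough estimate that we are assuming here, not a precise value.

```python
import math

# Commonly quoted rough estimate; treated as an assumption in this sketch.
ATOMS_IN_OBSERVABLE_UNIVERSE = 10 ** 80


def route_count(n_cities: int) -> int:
    """Number of orderings in which n_cities can be visited exactly once."""
    return math.factorial(n_cities)


for n in (5, 20, 65):
    routes = route_count(n)
    more_than_atoms = routes > ATOMS_IN_OBSERVABLE_UNIVERSE
    print(f"{n} cities: {routes:.2e} routes, more than atoms: {more_than_atoms}")
```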

How long would a classical supercomputer take to check them all? The answer isn't "a really long time" — it's "longer than the current age of the universe." And that's not because supercomputers are slow. It's because the number of possibilities grows exponentially with the size of the problem, while the computer's speed grows only incrementally.
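"Checking them all" is meant literally. Here is a minimal brute-force sketch that tries every ordering of a few cities and keeps the shortest. The city names and coordinates are made up, distances are straight lines on a flat map, and the function names are ours. It is the naive approach the text describes, not how practical route planners work.

```python
import math
from itertools import permutations


def route_length(route, coords):
    """Total straight-line distance along the route, in visiting order."""
    return sum(math.dist(coords[a], coords[b]) for a, b in zip(route, route[1:]))


def shortest_route(coords):
    """Try every ordering of the cities and keep the shortest one."""
    best = min(permutations(coords), key=lambda r: route_length(r, coords))
    return best, route_length(best, coords)


# Hypothetical city positions (made-up coordinates).
cities = {"A": (0, 0), "B": (3, 0), "C": (3, 4), "D": (0, 4), "E": (1, 2)}

route, length = shortest_route(cities)
print(route, round(length, 2))
```

With five cities this finishes instantly, because there are only 120 orderings. The code does not change as you add cities. Only the running time does.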

This class of problems — where the number of possible answers grows exponentially — is where classical computers hit a wall that no amount of better hardware can overcome. You can make the computer twice as fast, and it will still take more than the age of the universe. Make it a thousand times faster, and the problem barely notices.
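A back-of-the-envelope calculation shows why extra speed barely matters. The sketch below assumes a machine that checks a hundred billion routes per second (the laptop-scale figure from earlier in the chapter, and a generous one, since checking a route takes more than one operation) and an age of the universe of about 13.8 billion years. Both constants are assumptions for illustration.

```python
import math

ROUTES_PER_SECOND = 1e11        # assumed: one route checked per simple operation
AGE_OF_UNIVERSE_YEARS = 1.38e10  # roughly 13.8 billion years
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def years_to_check_all(n_cities: int, speedup: float = 1.0) -> float:
    """Years needed to check every ordering of n_cities at the assumed rate."""
    routes = math.factorial(n_cities)
    seconds = routes / (ROUTES_PER_SECOND * speedup)
    return seconds / SECONDS_PER_YEAR


for speedup in (1, 2, 1000):
    years = years_to_check_all(65, speedup)
    print(f"{speedup}x faster: {years:.1e} years, "
          f"{years / AGE_OF_UNIVERSE_YEARS:.1e} times the age of the universe")
```

A thousandfold speedup removes three zeros from a number that has dozens of them.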

Let me make this real. Cryptography, the system that keeps your banking details, your messages, and most of the internet secure, relies on a closely related explosion of difficulty. Breaking RSA, one of the most widely used public-key encryption schemes, requires finding the prime factors of a very large number. For a number with 600 digits, a classical computer would take longer than the age of the universe to factor it using the best known classical algorithms. This isn't a bug in the security system; it's the feature. It's why encryption works at all.
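To get a feel for why factoring is hard, here is the simplest possible approach: try every candidate divisor in turn. To be clear, this trial-division sketch is not one of the "best known classical algorithms" mentioned above, which are far more sophisticated. It is a stand-in that makes the growth easy to see.

```python
def trial_division(n: int) -> list[int]:
    """Return the prime factors of n by trying each candidate divisor in turn."""
    factors = []
    d = 2
    while d * d <= n:          # only need to test divisors up to the square root
        while n % d == 0:
            factors.append(d)
            n //= d
        d += 1
    if n > 1:                  # whatever is left over is itself prime
        factors.append(n)
    return factors


print(trial_division(15))      # [3, 5]
print(trial_division(10403))   # [101, 103]
```

The loop runs up to the square root of the number, so each extra pair of digits multiplies the worst-case work by about ten. For a 600-digit number, that is on the order of 10^300 candidate divisors.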

The Pattern Hiding in the Problem

Let's step back for a moment and look at the pattern here. Classical computers are incredibly fast at doing things sequentially — checking one possibility, then the next, then the next. They're basically very fast, very reliable counting machines. For problems where the number of possibilities grows manageably, this works beautifully. Addition, word processing, video compression, web browsing — classical computers handle all of this with ease.

But for problems where the possibilities grow exponentially — drug molecule simulation, breaking encryption, optimizing vast logistics networks, simulating quantum systems in physics — the sequential approach hits a wall. Not a temporary wall that better chips will eventually break through. A permanent, mathematical wall.

The reason is almost philosophical: classical computers, no matter how fast, are still forced to check possibilities one at a time. Or at best, if you have multiple processors, a handful at a time in parallel. But if there are 2^300 possible answers (a number with 91 digits), having a million processors doesn't really help. You're still nowhere close.
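Here is that claim as arithmetic. The sketch assumes a million processors that each check a hundred billion possibilities per second, working in perfect parallel with no overhead. Those rates are illustrative assumptions, and real machines would do worse.

```python
AGE_OF_UNIVERSE_YEARS = 13_800_000_000   # roughly 13.8 billion years
SECONDS_PER_YEAR = 365 * 24 * 3600


def years_to_search(n_bits: int, processors: int, checks_per_second_each: int) -> int:
    """Whole years needed to try all 2**n_bits possibilities in perfect parallel."""
    possibilities = 2 ** n_bits
    seconds = possibilities // (processors * checks_per_second_each)
    return seconds // SECONDS_PER_YEAR


years = years_to_search(300, processors=10 ** 6, checks_per_second_each=10 ** 11)
print(f"{years:.1e} years")
print(years > AGE_OF_UNIVERSE_YEARS)  # True
```

Going from one processor to a million only knocks six digits off the total.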

graph TD

A[Problem with many possibilities] --> B{Classical Computer Approach}

B --> C[Check possibility 1]

C --> D[Check possibility 2]

D --> E[Check possibility 3]

E --> F[... continues for billions of years ...]

F --> G[❌ Still not done]

A --> H{Different approach needed}

H --> I[What if we could explore many possibilities at once?]

I --> J[This is what quantum computing promises]

The Setup for Something New

So here's where we land at the end of our classical computer story.

The computers we have today are marvels. Truly. Billions of tiny transistors, flipping billions of times per second, following the logic of AND and OR and NOT, performing calculations that would have taken rooms full of mathematicians years to complete. They have transformed human civilization in ways that would have seemed like magic to people born just a few generations ago.

And yet. There are problems they simply cannot solve in any practical amount of time, not because we haven't built the right computer yet, but because the math itself doesn't cooperate with the sequential, one-possibility-at-a-time approach.

This is the gap that quantum computing is trying to fill. Not by being a faster classical computer. Not by adding more transistors. But by using a completely different kind of switch — one that doesn't have to be just 0 or just 1. One that can, in a very real and very strange way, be something else entirely while you're not looking at it.

Quantum computers are not meant to replace classical computers — your laptop isn't going to become a quantum machine any time soon. Rather, as the Perimeter Institute describes it, they're designed to attack a specific class of problems that classical computers fundamentally struggle with: the exponentially explosive ones.

To understand how they do it, we need to understand the weirdest thing in all of science: quantum physics. Which is exactly where we're headed next.

But before we go there, hold onto this picture: billions of tiny transistors, flipping on and off, checking possibilities one at a time, running out of time before the universe ends. That's the problem. Now we're going to meet the solution.
