Quantum Supremacy Explained: What It Means and Where We Are Now

The Milestone That Changed Everything: Quantum Supremacy and Where We Stand

Let's do something a bit different here. Instead of jumping straight to the cutting edge, let's trace the actual path that got us here — the milestones that separate real progress from hype. You already know that quantum computers will likely work best as specialized tools inside hybrid systems, solving problems that classical computers hit a wall on. But how do we know this is actually possible? That knowledge comes from history.

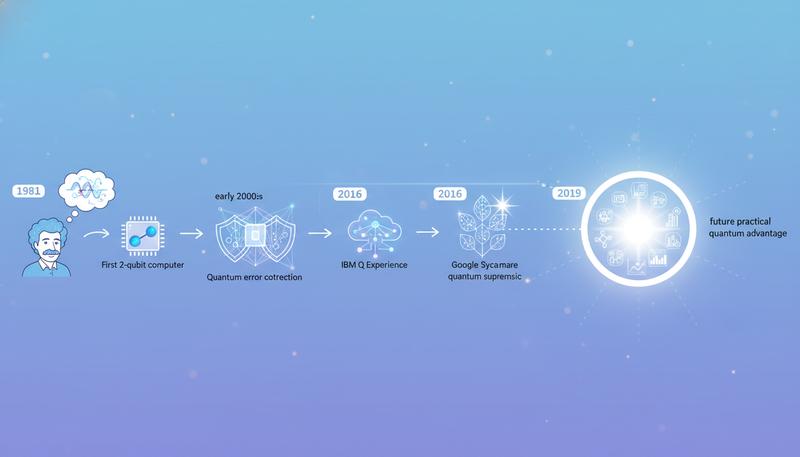

Quantum computing didn't emerge fully formed from some lab in 2019. It grew out of a theoretical insight in 1981 that gradually became real, working demonstrations. And here's the thing: understanding those milestones is crucial, because every few months there's a new announcement — a breakthrough, a processor, a milestone reached. It's easy to get lost in whether quantum computers are about to revolutionize everything, or whether they're just the flying cars of the 21st century, forever one decade away.

The answer requires knowing the actual journey from theory to machine, and what the experiments that succeeded actually tell us. So let's trace that path.

From Theory to Machine: The First Decades

The transistor went from theoretical concept to working device in a handful of years. Quantum computing took much longer: nearly two decades passed between Feynman's 1981 insight and the first working machine in 1998. That's not a failure. It's a measure of how genuinely hard this is.

In 1998, researchers at Oxford and Berkeley did something nobody had quite managed before: they got two quantum bits to perform a simple computation. Two qubits. A modern classical computer does operations on billions of transistors simultaneously, so two qubits sounds almost comically small. But that's the wrong way to think about it. Those two qubits proved that the whole idea wasn't just mathematically sound — it could actually be built.

Through the 2000s and 2010s, progress kept moving, though not always in ways the outside world noticed. Researchers made major advances in quantum error correction, which matters because qubits are absurdly fragile. They're prone to mistakes. They're sensitive to vibrations, electromagnetic interference, and temperature fluctuations. Making them reliable became the central engineering problem. Teams built processors with 5 qubits, then 10, then 50. Each step meant solving problems that had never been solved before: keeping systems colder than outer space, manipulating qubits precisely enough, connecting them reliably, and detecting and correcting errors when things went wrong.

Then in 2016, IBM did something that mattered just as much as any technical breakthrough: they opened up access. The IBM Q Experience put a small quantum processor on the internet. Anyone with a web browser could log in and run actual quantum calculations. This wasn't just generous — it accelerated research globally. Students, startups, researchers in countries without billion-dollar quantum labs could suddenly experiment. IBM's quantum computing work represents one of the most sustained corporate bets in the field, and opening the doors was a genuine turning point.

graph LR
    A[1981: Feynman proposes quantum computing] --> B[1994: Shor's algorithm invented]
    B --> C[1998: First 2-qubit quantum computer]
    C --> D[2000s: Quantum error correction advances]
    D --> E[2016: IBM Q Experience launches]
    E --> F[2019: Google claims quantum supremacy]
    F --> G[2020s: NISQ era, noisy but real machines]
    G --> H[Future: Fault-tolerant quantum computing]

The Proof-of-Concept Algorithm

One milestone in this timeline deserves special attention: Shor's algorithm, invented in 1994 by mathematician Peter Shor. He proved mathematically that a quantum computer could factor large numbers exponentially faster than any known classical algorithm. "Exponentially" is a big word: it means the gap keeps widening as the numbers get larger, and it made the field sit up and pay attention.

This wasn't a machine. It was a mathematical proof about what a future machine could do. But it electrified the field in a way that pure physics never had. More importantly, it alarmed the security community, because the difficulty of factoring large numbers underpins RSA and much of the public-key encryption used on the internet. (We'll dig into this in a later section; it gets wild.) The point here is that Shor's algorithm gave quantum computing a clear, obvious killer app that made governments and corporations stop dismissing it as an academic curiosity.
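For readers who want a feel for what Shor's result is about, here is a minimal Python sketch of the standard textbook reduction from factoring to "order finding." It is not the quantum algorithm itself: the `find_order` step below is done by brute force, and that slow step is the part a quantum computer is expected to speed up. The function names and the toy inputs (15 and 7) are illustrative choices, not anything from Shor's paper.

```python
from math import gcd


def find_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    Assumes gcd(a, n) == 1. This loop is the classically slow step;
    it stands in for the quantum part of Shor's algorithm.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_via_order(n: int, a: int):
    """Try to split n using the order of a modulo n (assumes 1 < a < n).

    Returns a pair of factors, or None if this choice of a does not work
    and another a should be tried.
    """
    shared = gcd(a, n)
    if shared != 1:
        return shared, n // shared  # a already shares a factor with n
    r = find_order(a, n)
    if r % 2 == 1:
        return None  # odd order: this a is no help
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # a**(r/2) is -1 mod n: this a is no help
    p = gcd(half - 1, n)
    return p, n // p


print(find_order(7, 15))        # order of 7 modulo 15
print(factor_via_order(15, 7))  # factors of 15 recovered from that order
```

For a number as small as 15 the brute-force loop finishes instantly. For the hundreds-of-digits numbers used in real encryption it would not, which is why a fast order-finding step is such a big deal.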

The October 2019 Moment

On October 23, 2019, Google published a paper in Nature that made headlines around the world. Their Sycamore quantum processor had completed a specific calculation in 200 seconds. A classical supercomputer, Google claimed, would need approximately 10,000 years to finish the same task.

Ten thousand years. That's longer than all of recorded history. That's the kind of number that stops people mid-scroll.

The calculation itself was somewhat abstract: sampling the output of a random quantum circuit. Not exactly something with real-world applications. But the point was to demonstrate something fundamental: there existed at least one problem where a quantum computer could do something a classical computer could not do in any practical amount of time. Google called this "quantum supremacy," a deliberately bold phrase coined by physicist John Preskill, and the technology world took notice. Quantum computing was real. It had crossed a threshold.

The Sycamore chip used 53 qubits arranged in a specific architecture. Getting those qubits to work together coherently — without errors overwhelming the computation — had required years of engineering work. It was a legitimate milestone.
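A rough way to see why 53 qubits is a meaningful number: the most naive classical simulation stores one complex amplitude for every possible state of the qubits, and that count doubles with each qubit added. The sketch below just does that arithmetic. It assumes 8 bytes per amplitude (single-precision complex numbers), and the function names are made up for illustration. Real simulators use cleverer tricks than storing everything, so treat this as an upper-bound intuition rather than a statement about any particular simulation.

```python
def state_vector_bytes(num_qubits: int, bytes_per_amplitude: int = 8) -> int:
    """Bytes needed to hold all 2**n complex amplitudes of an n-qubit state."""
    return (2 ** num_qubits) * bytes_per_amplitude


def human_readable(num_bytes: int) -> str:
    """Format a byte count using binary units (KiB, MiB, ...)."""
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB"]
    value = float(num_bytes)
    for unit in units:
        if value < 1024 or unit == units[-1]:
            return f"{value:.0f} {unit}"
        value /= 1024


# Each extra qubit doubles the memory requirement.
for n in (10, 20, 30, 40, 53):
    print(f"{n} qubits: {human_readable(state_vector_bytes(n))}")
```

Thirty qubits fit comfortably on a laptop. Fifty-three, stored this way, do not fit in the memory of any single machine, which is the whole point of choosing a chip of that size for the experiment.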

IBM's Inconvenient Response

IBM did not stay quiet. That same week, IBM researchers published a response, not in a peer-reviewed journal but on the IBM Research blog. In quantum computing controversies, apparently, that's where battles are fought.

Their argument was technical but important. Google's estimate of "10,000 years for a classical supercomputer" assumed a particular, unoptimized approach to the classical simulation. If you gave a classical computer enough disk storage (tens of petabytes, a capacity found only on the largest supercomputers), you could store enough intermediate information to shortcut the calculation dramatically. IBM's revised estimate: the same problem that took Google's quantum computer 200 seconds would take a well-optimized classical supercomputer about 2.5 days.

That's a very different number than 10,000 years.

So who was right? Honestly, both.

Google had indeed underestimated what a cleverly engineered classical simulation could do. The 10,000-year figure wasn't based on rigorous simulation — more like an educated guess assuming standard approaches. IBM correctly pointed out that standard assumptions can be broken.

But Google also had a point. A 200-second computation versus a 2.5-day computation is still a speedup of over 1,000 times. And IBM's solution required absolutely enormous storage — resources that don't exist in most labs. The spirit of what Google was claiming — that a quantum processor could handle something a classical machine would seriously struggle with — remained intact even if the specific numbers were contested.
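The numbers in this dispute are easy to check for yourself. The short Python sketch below takes the three figures quoted above (200 seconds, 2.5 days, 10,000 years) and works out the speedup implied by each classical estimate. The variable names are just for illustration, and a year is taken as 365.25 days.

```python
SECONDS_PER_DAY = 24 * 60 * 60
SECONDS_PER_YEAR = 365.25 * SECONDS_PER_DAY

sycamore_seconds = 200                             # Google's reported runtime
ibm_estimate_seconds = 2.5 * SECONDS_PER_DAY       # IBM's classical estimate
google_estimate_seconds = 10_000 * SECONDS_PER_YEAR  # Google's classical estimate


def speedup(classical_seconds: float, quantum_seconds: float) -> float:
    """How many times longer the classical run takes than the quantum one."""
    return classical_seconds / quantum_seconds


ibm_speedup = speedup(ibm_estimate_seconds, sycamore_seconds)
google_speedup = speedup(google_estimate_seconds, sycamore_seconds)

print(f"Speedup under IBM's estimate:    {ibm_speedup:,.0f}x")
print(f"Speedup under Google's estimate: {google_speedup:.2e}x")
```

Under IBM's figure the quantum chip is roughly a thousand times faster. Under Google's it is more than a billion times faster. The argument was over six orders of magnitude, but both sides agreed on which machine finished first.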

The debate also revealed something important about the whole field: the boundary between "what a quantum computer can do" and "what a classical computer can do" isn't fixed. It shifts. It moves as people get cleverer about classical algorithms, as hardware improves. This is a recurring theme in quantum computing, and it matters.

What Quantum Supremacy Actually Means (And Doesn't)

Here's where popular coverage mostly went off the rails. Quantum supremacy does not mean:

- That quantum computers now beat classical computers at everything

- That your laptop is about to become obsolete

- That we've solved any of the real-world problems quantum computers are supposed to tackle

Quantum supremacy means, very precisely: a quantum computer has outperformed a classical computer on at least one specific task. That's the definition. The task Google chose was a mathematical stress test — something designed to be hard for classical computers and relatively natural for quantum systems. It was chosen specifically because it would be hard to simulate classically. Nothing about it corresponds to anything you'd actually want to compute in the real world.

Think of it this way: imagine spending decades developing a new kind of vehicle. To prove your vehicle has an advantage, you test it on a course specifically designed to favor your vehicle's unique capabilities — maybe a course full of rivers and swamps that your amphibious vehicle can cross while conventional cars have to take long detours. Winning that test proves something real: your vehicle genuinely can do something conventional vehicles cannot. But it doesn't mean your amphibious vehicle is better for the daily commute.

Quantum supremacy was the amphibious vehicle test. Impressive. Meaningful. But not the whole story.

The Distinction That Actually Matters

There's a crucial difference between two related terms, and physicists are particular about it:

Quantum supremacy: A quantum computer outperforms a classical computer on any task, even a contrived or artificial one.

Quantum advantage: A quantum computer outperforms a classical computer on a task that is genuinely useful — something people actually care about computing, like designing medicines, optimizing financial portfolios, simulating molecules, discovering materials.

We have achieved quantum supremacy (depending on how you view IBM's objections). We have not yet achieved broad quantum advantage on problems that matter in the real world.

This distinction is everything for managing expectations. When someone says "quantum computers will revolutionize medicine," they're talking about quantum advantage — simulating molecular interactions, exploring protein folding, optimizing clinical trial designs. That's a much harder bar to clear than winning an artificial benchmark. IBM's framework for quantum computing explicitly states that today's machines are useful for research and exploration, but the transformative applications require hardware that doesn't yet exist.

Here's an honest analogy: the Wright Brothers' flight at Kitty Hawk in 1903 proved that powered, controlled human flight was possible. Genuine, world-historic milestone. But that flight covered 120 feet and lasted 12 seconds. Commercial aviation — the world-changing application — took another few decades of engineering. Quantum supremacy is Kitty Hawk. We haven't built the Boeing 747 yet.

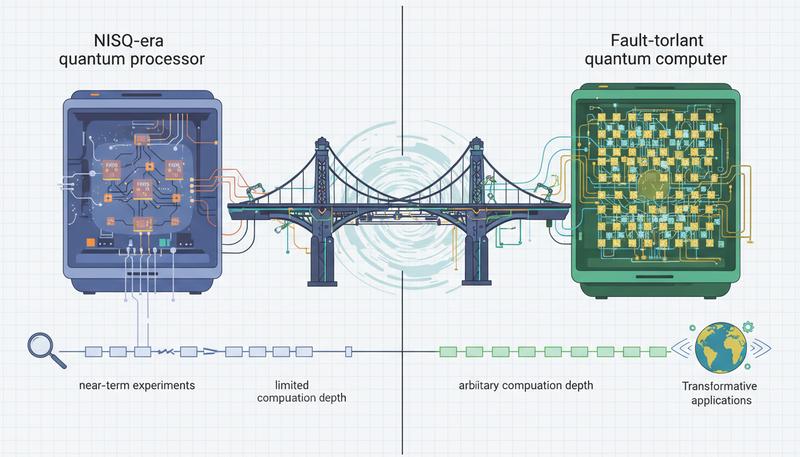

Where We Actually Are: The NISQ Era

The current period in quantum computing has a name: the NISQ era, standing for Noisy Intermediate-Scale Quantum. It's a perfectly unglamorous name for a perfectly unglamorous but genuinely important phase.

"Noisy" means that current qubits make errors — lots of them. Every quantum gate operation has some probability of failing. Every qubit gradually loses its quantum state to the environment (a process called decoherence). Today's machines don't have enough error correction built in to fully compensate for this noise, which severely limits how long and complex a calculation can be before errors pile up and ruin the result.

"Intermediate-scale" means today's machines have enough qubits to do something interesting — dozens to a few hundred qubits — but nowhere near enough for the truly transformative applications, which would require thousands of logical qubits. Logical qubits are what you actually compute with — error-corrected, stable units. The gap between today's physical qubits (the actual hardware) and the logical qubits we need is like the difference between raw iron ore and finished steel. You need a lot of ore to make useful amounts of steel.

"Quantum" is the unambiguously good part.

So what can you actually do with NISQ machines? A few directions are showing genuine promise:

Quantum simulation: Using a quantum system to simulate other quantum systems — which was Feynman's original vision. This is already yielding interesting results for studying chemical reactions and materials.

Quantum machine learning: Exploring whether quantum computers can offer speedups on certain machine learning tasks. The results here are mixed and actively debated among researchers.

Optimization experiments: Testing whether quantum algorithms can find better solutions to complex optimization problems faster than classical approaches. Some early results look encouraging, but classical algorithms remain competitive.

None of these constitute quantum advantage yet. But they're the proving ground where it might first emerge.
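To make the "noisy" part of NISQ concrete, here is a deliberately simplified model in Python. It assumes every gate fails independently with the same probability, so a circuit only succeeds if every single gate does. Real hardware is messier than this, and the 1% and 0.1% error rates below are illustrative placeholders, not measurements from any specific machine.

```python
import math


def success_probability(gate_error: float, num_gates: int) -> float:
    """Chance that a circuit runs with no gate errors at all.

    Simplified model: each gate fails independently with probability
    gate_error, so the circuit succeeds with (1 - gate_error) ** num_gates.
    """
    return (1.0 - gate_error) ** num_gates


def max_gates(gate_error: float, target_success: float = 0.5) -> int:
    """Largest gate count that keeps success probability at or above target."""
    return math.floor(math.log(target_success) / math.log(1.0 - gate_error))


# Hypothetical error rates, chosen only to show the trend.
for error in (0.01, 0.001):
    print(f"gate error {error}: "
          f"100 gates -> {success_probability(error, 100):.3f}, "
          f"1000 gates -> {success_probability(error, 1000):.5f}, "
          f"max gates for 50% success = {max_gates(error)}")
```

The takeaway is the shape of the curve, not the exact numbers: success probability falls off exponentially with circuit length, so even a small per-gate error rate puts a hard ceiling on how long a calculation can run without error correction.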

The Timeline Nobody Wants to Admit

No one actually knows when practical quantum computers will arrive at scale. Anyone giving you a confident date is either guessing or marketing something.

That said, the research community has developed a rough consensus, and it's worth knowing. The actual history of quantum computing progress shows that the field has consistently taken longer than optimists predicted — but also delivered more than pessimists expected. Most serious researchers now estimate:

- Near-term (3-5 years): Continued NISQ improvements, first demonstrations of quantum advantage in narrow scientific domains like chemistry simulation, growth in quantum software and algorithms

- Medium-term (5-15 years): Early fault-tolerant quantum processors, first commercially useful applications in drug discovery or materials science, major progress on error correction

- Long-term (15+ years): Fully fault-tolerant large-scale quantum computers capable of the transformative applications — breaking encryption, revolutionizing AI, solving massive optimization problems

The range is wide because genuine uncertainties exist. Error correction is a hard engineering problem that hasn't been completely solved. Getting from a few hundred noisy physical qubits to thousands of reliable logical qubits requires technical overhead that is, frankly, daunting.

Here's something worth considering: the people who work in this field daily tend to be more cautious than the headlines. A quantum hardware engineer at a major lab put it to me this way: "We know what needs to happen. We know how to make it happen. We just don't know how long the engineering will take." That's a very different situation from 1981, when people weren't even sure quantum computing was physically possible. The unknowns are now engineering unknowns, not physics unknowns. That's real progress.

Why This Milestone Matters Anyway

Think back to Kitty Hawk: that first flight lasted only 12 seconds, yet it mattered. Not because it carried passengers across the Atlantic, but because it proved the physics worked. Once you know something is physically possible, the engineering becomes a question of when, not whether.

That's exactly what quantum supremacy established. A quantum computer actually beat a classical computer at something. The hardware crossed a threshold. The physics works. The engineering challenges that remain are immense — but they're engineering challenges, not fundamental physics mysteries.

That changes everything about the nature of the work. We are no longer asking whether quantum computers can be more powerful than classical computers. We are asking how to build them reliably, at large enough scale, to do something useful. Still an enormous challenge. But a different kind of challenge — the kind that engineering has historically been quite good at solving, given enough time, money, and smart people.

Given the amount of time, money, and smart people currently being thrown at quantum computing — and we'll look at the global quantum race in a later section — the trajectory is clear, even if the timeline remains uncertain.

We are not at the destination. But we have left the station, and the train is moving.
