How Quantum Computers Work Inside

In the previous section, we promised to look at what quantum computers actually look like — and we're going to deliver on that promise. But here's the first surprise: if someone showed you a photo of a cutting-edge quantum computer without telling you what it was, you might guess it was an art installation. Or maybe a very expensive chandelier. Or possibly something a Bond villain would build to look intimidating during a monologue. You would not guess it was a computer. And that gap — between what we imagine a computer looks like and what a quantum computer actually is — tells you something crucial: the extreme engineering challenges we just explored don't just exist in theory. They've shaped every single physical aspect of these machines.

Quantum computers aren't just smaller or faster versions of the machine on your desk. They're built on completely different physical principles, which means they look radically different, they live in radically different conditions, and they face completely different engineering challenges. Remember that analogy about doing precision surgery while wearing oven mitts, blindfolded, in Antarctica? Well, the "oven mitts" and "Antarctica" parts are real, and they're built into the machine itself. Getting a vivid, concrete picture of the actual hardware is one of the best ways to understand just how serious researchers are about solving the decoherence problem we discussed. So let's go inside and see what that solution actually looks like.

The Decoherence Problem: Why Everything Has to Be So Cold

Here's the core challenge that shapes the entire design of a quantum computer: qubits are fragile. Absurdly fragile.

When a qubit is balanced in that strange quantum state we talked about — superposition, that quantum limbo between 0 and 1 — almost any disturbance is catastrophic. A stray photon, a vibration in the floor, a fluctuating electromagnetic field, even the random thermal jiggling of nearby atoms can knock the qubit out of its quantum state and force it to collapse into a definite classical answer. This is decoherence, and it's the single biggest engineering challenge in quantum computing today.

Think of it this way: imagine you're trying to balance a perfectly symmetrical coin on its edge. Any breath of air, any table vibration, any tiny nudge, and it falls. Now imagine trying to do that while someone is running a jackhammer nearby. That's what it's like trying to maintain quantum superposition at room temperature. The dilution refrigerator essentially puts the coin in a sealed room and removes every possible source of disturbance — vibrations, electromagnetic fields, thermal noise — until the environment is so quiet and cold that the quantum state can persist long enough to be useful.

    graph TD
    A[Room Temperature Environment] -->|Heat & Vibration| B[Qubit Exposed to Noise]
    B -->|Quantum State Collapses| C[Decoherence: Information Lost]
    D[Dilution Refrigerator] -->|Removes thermal energy| E[Near Absolute Zero: 15 mK]
    E -->|Quantum state preserved| F[Qubit Maintains Superposition]
    F -->|Quantum computation possible| G[Useful Computation]

At 15 millikelvin (that's 15 thousandths of a degree above absolute zero), thermal motion slows to a near-standstill. The superconducting circuits that form the qubits in IBM's and Google's machines enter a special quantum state of matter where electrons flow without any resistance whatsoever. That combination of near-zero temperature and near-zero resistance is what allows quantum behavior to emerge at a scale engineers can actually work with. For superconducting qubits, no amount of careful design can substitute for extreme cold. It's not negotiable.
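To put a rough number on "quiet and cold," here is a minimal sketch that estimates how many stray thermal photons sit at a qubit's operating frequency, using the standard Bose-Einstein occupation formula. The 5 GHz qubit frequency is an assumption chosen for illustration (it is not a figure from this section), and the function name is ours.

```python
import math

H = 6.62607015e-34   # Planck constant, in joule-seconds
K_B = 1.380649e-23   # Boltzmann constant, in joules per kelvin

# Assumption for illustration only: a qubit operating at 5 GHz.
QUBIT_FREQ_HZ = 5e9


def thermal_photons(freq_hz: float, temp_k: float) -> float:
    """Average number of thermal photons in a mode at freq_hz (Bose-Einstein)."""
    return 1.0 / math.expm1(H * freq_hz / (K_B * temp_k))


for label, temp_k in [("room temperature, 300 K", 300.0),
                      ("15 millikelvin", 0.015)]:
    n = thermal_photons(QUBIT_FREQ_HZ, temp_k)
    print(f"{label}: about {n:.3g} thermal photons at the qubit frequency")
```

Under these assumptions, room temperature leaves over a thousand thermal photons jostling the qubit at any moment, while at 15 millikelvin the average drops far below one. That gap is the coin-on-its-edge analogy in numbers.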

The Main Qubit Technologies: A Tale of Three Approaches

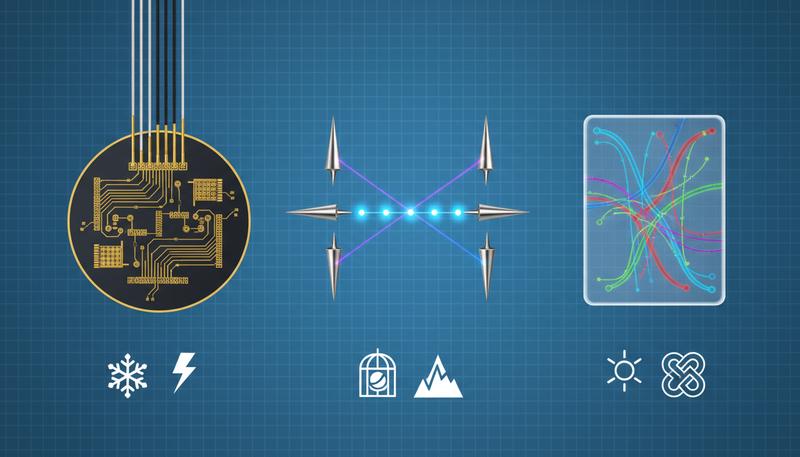

Not all quantum computers look like IBM's golden chandeliers, because not all quantum computers use the same type of qubit. Right now, three main technologies are competing for dominance, and they differ dramatically in how they're built, what they're good at, and what problems they still need to solve.

Superconducting Qubits: The Current Frontrunner

This is the approach used by IBM, Google, and Rigetti Computing. IBM has invested heavily in superconducting qubit technology and operates the largest publicly accessible fleet of quantum computers in the world.

In this approach, qubits are made from tiny circuits of superconducting metal, usually niobium or aluminum, in which a Josephson junction (a thin insulating barrier between two superconductors) is connected to a small capacitor. When cooled to near absolute zero, the circuit enters a quantum regime where electrical current can flow as a single coherent quantum wave. The qubit's 0 and 1 states correspond to different energy levels of this quantum circuit, and you can manipulate the qubit by zapping it with precisely calibrated microwave pulses.

The big advantage: superconducting qubits use techniques borrowed from semiconductor manufacturing. That means chip fabrication know-how, photolithography, the entire industrial ecosystem of silicon technology — it can all be adapted. The qubit chips themselves are about the size of a fingernail and look like intricate circuit boards under a microscope.

The big challenge: superconducting qubits are delicate, and their coherence times — the period during which they maintain their quantum state before decoherence scrambles them — are measured in microseconds to milliseconds. That sounds short because it is short. Running a complex quantum algorithm requires completing all your operations before the qubits forget what they were doing. It's like working against a ticking clock.
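The ticking clock can be made concrete with a little arithmetic. The sketch below assumes a coherence time of 100 microseconds and a gate duration of 50 nanoseconds. Both are illustrative values, not measurements from any particular machine, and the exponential decay is a deliberately simple model of how coherence fades.

```python
import math


def gate_budget(coherence_time_s: float, gate_time_s: float) -> int:
    """Roughly how many back-to-back gates fit inside one coherence time."""
    return round(coherence_time_s / gate_time_s)


def coherence_remaining(elapsed_s: float, coherence_time_s: float) -> float:
    """Fraction of coherence left after elapsed_s, in a simple exponential model."""
    return math.exp(-elapsed_s / coherence_time_s)


# Illustrative assumptions, not vendor specifications.
COHERENCE_TIME_S = 100e-6   # 100 microseconds
GATE_TIME_S = 50e-9         # 50 nanoseconds per gate

print("gates per coherence time:", gate_budget(COHERENCE_TIME_S, GATE_TIME_S))
for gates in (100, 1000, 2000):
    elapsed = gates * GATE_TIME_S
    left = coherence_remaining(elapsed, COHERENCE_TIME_S)
    print(f"after {gates} gates: {left:.0%} of coherence left")
```

With these numbers the budget is a couple of thousand sequential gates, and the quantum state is already noticeably degraded well before the budget runs out.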

Trapped Ion Qubits: Slow but Remarkably Precise

Companies like IonQ and Quantinuum take a completely different approach. Instead of engineering a quantum system from circuit components, they use actual atoms as their qubits: specifically ions, which are atoms that have been stripped of an electron and so carry a net electric charge.

These ions are suspended in a vacuum chamber by electromagnetic fields, literally floating in empty space, isolated as completely as possible from everything else. The qubit states correspond to different energy levels of the electrons within the ion itself. You read and write information by hitting the ions with precisely tuned laser pulses.

The advantages are significant. Trapped ion systems offer much longer coherence times than superconducting qubits — sometimes by several orders of magnitude — and their gate fidelities (the accuracy of quantum operations) tend to be higher. When IonQ reports 99.99% two-qubit gate fidelity, they're describing something genuinely impressive.

The downsides: trapped ion systems are slower. Laser operations take longer than microwave pulses. They also don't scale as naturally — keeping more and more ions perfectly isolated and individually addressable gets very hard, very fast. And the systems require impressive laser and vacuum equipment that doesn't miniaturize as easily as semiconductor chips.

Other Approaches on the Horizon

The two approaches above dominate today's headlines, but the quantum hardware race has other serious contenders:

- Photonic qubits (used by PsiQuantum and Xanadu): these use individual photons, particles of light, as qubits. The appeal is enormous: photons travel at light speed, don't interact much with their environment (great for coherence), and could potentially operate at room temperature. The challenge is that photons are notoriously hard to entangle reliably, and detecting them without destroying them is genuinely difficult physics.

- Topological qubits (Microsoft's long bet): Microsoft has spent years pursuing a fundamentally different qubit design based on exotic quasi-particles called Majorana fermions. The theory is that topological qubits would be inherently protected against certain kinds of errors, making them decoherence-resistant by design. Microsoft announced in 2025 that they'd achieved a key milestone with their topological qubit approach. Whether this path will pay off remains one of the most watched bets in quantum hardware.

- Neutral atoms: Companies like QuEra and Pasqal use neutral atoms (not charged ions) trapped in optical tweezers, which are tightly focused laser beams that hold individual atoms in place. This approach has shown surprising recent progress and may offer advantages in scalability.

Decoherence: The Central Drama of Quantum Engineering

Let's dig deeper into decoherence, because it's truly the central drama of quantum hardware engineering — the reason quantum computing is hard, the reason it took decades longer than pioneers like Richard Feynman imagined, and the reason the field is still grappling with fundamental challenges.

Here's a way to feel its weight. Imagine you have a quantum computer running Shor's algorithm to factor a large number, the calculation that would crack modern encryption. You need thousands of logical qubits operating coherently for an extended computation. But each physical qubit you have decoheres within microseconds to milliseconds. Every moment that passes, some of your qubits are forgetting their quantum state, introducing errors, turning your careful calculation into noise.

This is a real problem that real engineers confront every single day. The qubit's quantum state is fragile in a way that has absolutely no analog in classical computing. A classical bit is either 0 or 1 — if something bumps it, it stays 0 or 1. It's stable. A qubit in superposition is a subtle, continuous quantum state described by a probability amplitude, and almost anything can perturb it: stray electromagnetic fields, cosmic rays, vibrations transmitted through the floor of the building, even a technician breathing nearby. Environmental factors of all kinds cause decoherence, and defending against them requires layers of shielding and isolation that make modern quantum computers feel more like precision scientific instruments than like any computer you've ever seen.

The entire architecture of the dilution refrigerator, with its nested shells, each one colder than the one outside it, is essentially a decoherence shield. One of the outer shells sits at 4 kelvin. The next is at 1 kelvin. Then 100 millikelvin. Then 15 millikelvin at the core where the qubits live. Each stage cuts the temperature, and with it the thermal noise, by up to an order of magnitude, buying the qubits more quiet time.

Quantum Error Correction: The Mathematics of Staying Sane

Given that decoherence is inevitable, quantum engineers have developed a remarkable strategy: don't try to make qubits perfect. Instead, spread information across many physical qubits and use clever mathematics to detect and correct errors before they ruin your computation.

This is called quantum error correction (QEC), and it's both one of the most brilliant ideas in modern computer science and one of the most expensive problems in engineering.

The basic idea goes like this: instead of storing one logical qubit of information in one physical qubit, you spread it across many physical qubits in a carefully entangled pattern. If any one of those physical qubits gets disturbed by decoherence, the error shows up as a detectable inconsistency in the pattern — which you can measure without actually looking at (and thus collapsing) the quantum state directly. Then you apply a correction.
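The "detect the inconsistency without looking at the value" trick has a classical cousin that is easy to run: the three-bit repetition code. The sketch below is only an analogy under stated assumptions. It handles a single bit flip, whereas real quantum codes must also handle phase errors and measure these parities using extra helper qubits. All function names are ours.

```python
def encode(bit: int) -> list[int]:
    """Spread one logical bit across three physical bits."""
    return [bit, bit, bit]


def syndrome(block: list[int]) -> tuple[int, int]:
    """Compare neighbors. The parities reveal where an error is, not the stored value."""
    return (block[0] ^ block[1], block[1] ^ block[2])


# Each syndrome points at the single flipped position (None means no error seen).
SYNDROME_TO_FLIP = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def correct(block: list[int]) -> list[int]:
    """Undo the single bit flip that the syndrome points to, if any."""
    fixed = list(block)
    position = SYNDROME_TO_FLIP[syndrome(block)]
    if position is not None:
        fixed[position] ^= 1
    return fixed


def decode(block: list[int]) -> int:
    """Read the logical bit back out by majority vote."""
    return int(sum(block) >= 2)


for logical in (0, 1):
    for flipped in range(3):
        block = encode(logical)
        block[flipped] ^= 1  # simulate one error
        recovered = decode(correct(block))
        print(f"logical={logical} flip at {flipped} "
              f"syndrome={syndrome(block)} recovered={recovered}")
```

Notice that the same syndrome appears whether the logical bit is 0 or 1. The check tells you which position went wrong without telling you what was stored, which is the property a quantum code needs.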

The catch is the overhead. Current estimates suggest that one logical qubit — a qubit that's truly error-protected and reliable enough for serious computation — might require 1,000 or more physical qubits to implement, depending on your hardware's error rate and the sophistication of your error correction code. Some estimates push that ratio even higher for fault-tolerant computation.

So when IBM announces a processor with 1,000 physical qubits, we're not talking about 1,000 reliable logical qubits for computation. We might be talking about enough hardware to run one well-protected logical qubit — or a handful of them — with the rest providing error correction scaffolding. It's like announcing you have 1,000 people to build a house, only to discover that 990 of them are there to catch mistakes, not to build.

This is a sobering but important thing to understand. Headlines that trumpet raw qubit counts are often misleading. What matters is not how many qubits you have but how good those qubits are and how effectively they can be protected from errors.
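Where does a figure like "1,000 physical qubits per logical qubit" come from? A minimal sketch, assuming a surface-code style of protection and a commonly quoted rule of thumb: the logical error rate shrinks as `prefactor * (p / p_threshold) ** ((d + 1) / 2)` for code distance `d`, and a distance-`d` patch uses about `2 * d**2 - 1` physical qubits. The threshold (1%), the prefactor (0.1), and the example error rates are illustrative assumptions, not values from this section.

```python
def logical_error_rate(p_phys: float, distance: int,
                       p_threshold: float = 1e-2, prefactor: float = 0.1) -> float:
    """Rule-of-thumb logical error rate for a distance-d surface code patch."""
    return prefactor * (p_phys / p_threshold) ** ((distance + 1) / 2)


def physical_qubits(distance: int) -> int:
    """Physical qubits in one patch: d*d data qubits plus d*d - 1 check qubits."""
    return 2 * distance ** 2 - 1


def distance_needed(p_phys: float, target: float, p_threshold: float = 1e-2) -> int:
    """Smallest odd distance whose estimated logical error rate meets the target."""
    if p_phys >= p_threshold:
        raise ValueError("above threshold: adding qubits makes things worse")
    distance = 3
    while logical_error_rate(p_phys, distance, p_threshold) > target:
        distance += 2
    return distance


TARGET = 1e-10  # assumed error budget per logical operation
for p_phys in (5e-3, 2e-3):
    d = distance_needed(p_phys, TARGET)
    print(f"physical error {p_phys:.0e}: distance {d}, "
          f"{physical_qubits(d)} physical qubits per logical qubit")
```

Under these assumptions, a physical error rate of 0.2% lands at a little over a thousand physical qubits per logical qubit, and a noisier 0.5% pushes it to several thousand. Better qubits shrink the overhead dramatically, which is why quality matters more than count.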

    graph TD
    A[1 Logical Qubit of Information] -->|Requires error correction| B[~1,000 Physical Qubits]
    B --> C[Some qubits store information]
    B --> D[Most qubits detect & correct errors]
    D --> E[Error Syndromes Measured]
    E -->|Detected error| F[Correction Applied]
    F --> G[Logical Qubit Preserved]
    C --> G

The field of quantum error correction codes, the mathematical schemes for encoding information in these protected ways, is a rich and active area of research. You'll hear names like the Shor code, the surface code, and the color code. Each has different trade-offs in error tolerance, overhead, and implementation complexity. Microsoft's topological qubit approach is actually motivated by this problem: the hope is to build a qubit that's physically protected against errors in the first place, reducing the overhead needed.

Welcome to the NISQ Era

This brings us to an important acronym you'll encounter constantly if you follow quantum computing news: NISQ, which stands for Noisy Intermediate-Scale Quantum.

Physicist John Preskill coined the term to describe the era we're in right now. "Noisy" means our qubits are imperfect — they decohere, they suffer from gate errors, they're not fully error-corrected. "Intermediate-scale" means we have tens to a few hundred (or in some cases thousands) of physical qubits — more than early demonstrations, but nowhere near the millions of high-quality qubits that would be needed for the most transformative applications like breaking encryption or simulating large molecules.

The NISQ era is a genuinely important moment in quantum history. These machines are not toys. They are genuinely novel physical systems capable of computation that no classical machine can easily reproduce. Google's 2019 demonstration of quantum supremacy — which we'll explore in detail in the next section — was performed on a NISQ device. IBM's quantum systems, which run millions of experiments every day for researchers around the world, are NISQ devices.

But NISQ devices are also not yet the quantum computers of science-fiction power. They're more like the Wright Flyer than a modern 747. They prove the principle works and demonstrate the potential, but they're too noisy and fragile for the heavy-lifting tasks that will ultimately change medicine and cryptography. The gap between where we are and where we need to go is the central challenge of the next decade.

Some researchers are optimistic that clever NISQ algorithms can extract useful results from noisy qubits even without full error correction. Others argue that the NISQ era is more of a transitional research phase, valuable for learning but limited in practical impact until fault-tolerant quantum computing arrives. Honestly, both views have merit, and the truth is that we don't yet know exactly where the boundary lies.

The Qubit Quality Problem: Why More Isn't Always Better

Let's make concrete something that trips up even people who follow this space carefully: raw qubit count is almost meaningless without knowing qubit quality.

Imagine I told you I was building an orchestra. I could tell you "we have 1,000 musicians." But if 900 of them are randomly playing the wrong notes half the time, your concert is going to be noise, not music. What matters is how many musicians are playing accurately and in concert with each other.

Qubits are exactly like this. A quantum processor with 100 very high-fidelity, well-connected qubits might accomplish more useful computation than one with 1,000 noisy, poorly entangled qubits. The key metrics engineers actually care about are:

- Gate fidelity: What fraction of quantum operations are performed correctly?

- Coherence time: How long does a qubit maintain its quantum state?

- Connectivity: Which qubits can directly interact with which other qubits?

- Qubit count: How many qubits does the system have?

All four matter. A system that excels at one while lagging on others has real limitations. This is part of why the race between superconducting qubits and trapped ions is so interesting — superconducting systems currently win on qubit count and speed, while trapped ion systems often win on fidelity and coherence time. Neither approach dominates across all dimensions.
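To see why fidelity can outweigh raw count, here is a rough sketch that treats every gate as an independent chance to fail, so a circuit's success probability is approximately the gate fidelity raised to the number of gates. That independence is a simplifying assumption, and the fidelities of 99% and 99.9% are illustrative, not figures for any specific machine.

```python
import math


def circuit_success_estimate(gate_fidelity: float, num_gates: int) -> float:
    """Crude estimate: every gate must succeed, and errors are independent."""
    return gate_fidelity ** num_gates


def max_gates(gate_fidelity: float, min_success: float = 0.5) -> int:
    """Largest gate count that keeps the estimated success above min_success."""
    return math.floor(math.log(min_success) / math.log(gate_fidelity))


for fidelity in (0.99, 0.999):
    print(f"fidelity {fidelity}: "
          f"1,000-gate circuit succeeds about "
          f"{circuit_success_estimate(fidelity, 1000):.2%} of the time, "
          f"coin-flip limit at {max_gates(fidelity)} gates")
```

One extra "nine" of fidelity turns a 1,000-gate circuit from almost certain failure into something that works roughly a third of the time. Piling on more noisy qubits does nothing to change that arithmetic.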

You Can Use a Quantum Computer Right Now

Here's something that surprises most people: you don't need to build a dilution refrigerator in your garage to use a quantum computer. You don't even need access to a major research university. You can access real, working quantum hardware through the internet, right now, and IBM's entry-level tier costs nothing.

IBM Quantum offers cloud access to a fleet of quantum processors through the IBM Quantum Platform (formerly called IBM Quantum Experience). Anyone can create an account, write quantum circuits in Python using a library called Qiskit, and run them on real quantum hardware: not a simulation, but an actual superconducting quantum processor sitting in a cryogenically cooled lab. IBM's machines collectively run millions of jobs per year from researchers and students around the world.
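If you're curious what a first quantum program actually computes, here is a minimal sketch of the classic two-qubit "Bell state" circuit, simulated in plain Python so it runs anywhere. It is not Qiskit code and does not touch real hardware. In Qiskit the same circuit is typically built with `QuantumCircuit(2)`, then `.h(0)` and `.cx(0, 1)`, and submitting it to a real processor additionally requires an account and is not shown here. The helper functions below are ours.

```python
import math


def hadamard(state: list[float], qubit: int) -> list[float]:
    """Apply a Hadamard gate to one qubit of a state vector."""
    s = 1 / math.sqrt(2)
    new = [0.0] * len(state)
    for i, amp in enumerate(state):
        bit = (i >> qubit) & 1
        i0 = i & ~(1 << qubit)   # same basis state with the target bit cleared
        i1 = i | (1 << qubit)    # same basis state with the target bit set
        new[i0] += s * amp
        new[i1] += s * amp * (-1 if bit else 1)
    return new


def cnot(state: list[float], control: int, target: int) -> list[float]:
    """Flip the target qubit wherever the control qubit is 1."""
    new = [0.0] * len(state)
    for i, amp in enumerate(state):
        j = i ^ (1 << target) if (i >> control) & 1 else i
        new[j] += amp
    return new


# Two qubits, both starting in 0. Index bits are read as (qubit 1, qubit 0).
state = [1.0, 0.0, 0.0, 0.0]
state = hadamard(state, 0)   # put qubit 0 into superposition
state = cnot(state, 0, 1)    # entangle qubit 1 with qubit 0

probabilities = {format(i, "02b"): amp ** 2 for i, amp in enumerate(state)}
for outcome, prob in probabilities.items():
    print(f"outcome {outcome}: probability {prob:.2f}")
```

The two qubits only ever come out as 00 or 11, each about half the time, and never disagree. On a real NISQ device you would see a small fraction of 01 and 10 results as well, which is the noise this whole section has been about.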

Amazon has entered this space too with Amazon Braket, a cloud service that lets you access multiple types of quantum hardware — from different vendors including IonQ and Rigetti — and also run quantum circuit simulations on classical hardware. Google offers quantum computing access through Google Cloud. Microsoft's Azure Quantum platform connects developers with hardware from multiple quantum companies.

This is genuinely remarkable. For most of computing history, the most powerful machines were physically inaccessible to ordinary people. ENIAC filled a room at the University of Pennsylvania. Early supercomputers were guarded resources at national labs. Now you can write a quantum program on your laptop and execute it on hardware cooled to near absolute zero, receive the results a few minutes later, and pay nothing for the privilege.

The practical barrier for a student or researcher wanting to experiment with quantum computing is not the hardware — it's the learning curve. Quantum programming requires a genuinely different way of thinking about computation, which is why courses like this one exist. But the hardware itself? It's surprisingly accessible.

The Lab Behind the Magic

Let's paint the full picture of what a quantum computing lab actually looks like, because it's a scene worth appreciating.

Imagine a university physics lab or a corporate research facility. The room is white, clean, quiet. In the center, suspended from a ceiling-mounted frame, hangs the dilution refrigerator — that golden chandelier structure, roughly the size of a mini-fridge but taller, surrounded by a maze of tubes and wires. Multiple layers of electromagnetic shielding surround it — Faraday cages and mu-metal shields blocking stray radio waves and magnetic fields.

Coming off the refrigerator are dozens of coaxial cables carrying microwave pulses down into the cold heart of the system, where the qubit chip waits at 15 millikelvin. Those cables are carefully engineered to prevent heat from leaking into the cold stage — they're attenuated, filtered, and thermally anchored at each temperature stage.

Surrounding the refrigerator is a rack of classical electronics: microwave generators, signal analyzers, field-programmable gate arrays (FPGAs) for real-time pulse control, dilution refrigerator controllers. The actual quantum computation happens in microseconds deep inside the cold stage, but the classical electronics required to control it and read it out fill a rack the size of a wardrobe.

And then there's the team: physicists, materials scientists, electrical engineers, software developers, all working on different pieces of this impossibly complex system. A broken qubit chip requires warming the entire refrigerator back to room temperature — a process that takes days — before it can be accessed, modified, and cooled down again. Making progress in quantum hardware is slow, painstaking work.

This is why the companies and research groups making real progress deserve enormous respect. They're not just writing clever code. They're operating at the edge of what's physically possible.

The quantum computer is not a faster laptop. It's a fundamentally different kind of machine, operating at the edge of physical possibility, maintained at temperatures that don't naturally exist anywhere else in the solar system, fighting constantly against the universe's tendency to scramble delicate quantum states. The fact that these machines exist at all — that you can log in from your kitchen table and run a quantum circuit on hardware colder than deep space — is, if you pause to really appreciate it, one of the more extraordinary facts about the moment in history we're living through.

And we're just getting started. In the next section, we'll tackle a question that gets muddled constantly in media coverage: what are quantum computers actually good at? Because the answer might surprise you — not everything is quantum-enhanced, and being precise about the real advantages is crucial to understanding why this technology matters.
