Dependency Injection: Writing Code You Can Actually Test

Here's a confession that will resonate with anyone who has inherited a Python codebase: the first sign that something has gone architecturally wrong is when you open a test file and see unittest.mock.patch stacked four levels deep. Each patch is a little scar where a hard-coded dependency once lived. The tests still pass, but they feel fragile—brittle assertions about internal implementation details rather than confident checks on observable behaviour. Dependency injection is the technique that eliminates most of those patches before they are ever needed.

This section is about understanding why DI works, not just how to use a framework. The framework is just a convenience; the principle is what changes how you think.

The Problem: Hard-Coded Dependencies

Let's start with a pathologically simple example, because the pathology is real—I have seen this exact shape in production services handling millions of requests.

    import os
    import smtplib


    class UserNotifier:
        def notify(self, user_email: str, message: str) -> None:
            # The class builds and configures its own SMTP client on every call.
            client = smtplib.SMTP("smtp.gmail.com", 587)
            client.starttls()
            client.login(os.getenv("EMAIL_USER"), os.getenv("EMAIL_PASS"))
            client.sendmail("[email protected]", user_email, message)
            client.quit()

What is wrong here? The UserNotifier class creates its own collaborator (smtplib.SMTP) rather than receiving one. This one decision has cascading consequences:

- Testability: You cannot test UserNotifier.notify() without either sending real emails or monkey-patching smtplib.SMTP. Both are nightmares.

- Flexibility: Switching to a different email provider, or to a queue-based notification system, requires modifying UserNotifier itself.

- Observability: There is no seam to insert logging, retry logic, or circuit-breaking without touching the class.

- Configuration: The class reaches into the environment directly, mixing concerns that belong at the application boundary.

Every call to smtplib.SMTP(...) inside the class is a weld. The class and its collaborator are fused together. The Dependency Injector documentation describes this state well: "High coupling. If the coupling is high it's like using superglue or welding. No easy way to disassemble."

The antidote is straightforward: stop creating collaborators inside classes and start receiving them from outside. That is dependency injection, in its entirety. Everything else in this section is elaboration.

Inversion of Control: The Mental Model Shift

Before jumping to implementation patterns, it's worth spending a moment on the underlying principle: Inversion of Control (IoC).

In traditional imperative code, a class controls its own destiny. It decides what collaborators it needs, creates them, configures them, and disposes of them. Control flows inward.

IoC inverts this. The class declares what it needs but leaves the responsibility of creating and configuring those dependencies to some external authority. Control flows outward, to a "composition root"—a place at the application boundary where the object graph is assembled.

    graph TD
        A[Composition Root] -->|creates & injects| B[Service]
        A -->|creates & injects| C[Repository]
        A -->|creates & injects| D[ApiClient]
        B -->|uses| C
        B -->|uses| D
        E[Application Entry Point] -->|calls| A

The key insight is that the application code (your business logic) should never call SomeClass() for anything that has external state—databases, file systems, network calls, clocks, random number generators. Those are all dependencies that need to be injectable. Business logic classes should be pure in the sense that they operate only on what they are given.

This connects directly to the Dependency Inversion Principle from earlier sections on SOLID: high-level modules should not depend on low-level modules; both should depend on abstractions. DI is the mechanical practice that makes that principle actually true rather than aspirational. Real Python's coverage of SOLID principles frames it this way: "Dependency Inversion by making your classes depend on abstractions rather than on details."

Constructor Injection: The Cleanest Form

The most explicit and most recommended form of DI in Python is constructor injection—passing dependencies into __init__. Let's refactor our UserNotifier:

    from typing import Protocol


    class EmailClient(Protocol):
        def send(self, to: str, subject: str, body: str) -> None:
            ...


    class UserNotifier:
        def __init__(self, email_client: EmailClient) -> None:
            self._email_client = email_client

        def notify(self, user_email: str, message: str) -> None:
            self._email_client.send(
                to=user_email,
                subject="Notification",
                body=message,
            )

Notice what happened. UserNotifier now:

- Has no idea how email is sent

- Has no idea what configuration is used

- Has no knowledge of SMTP, Gmail, or environment variables

- Depends only on an abstraction (the EmailClient Protocol) rather than a concrete implementation

In production, you inject an SMTP-backed client. In tests, you inject a simple in-memory fake:

    class FakeEmailClient:
        def __init__(self):
            # Every call to send() is recorded here for later assertions.
            self.sent: list[dict] = []

        def send(self, to: str, subject: str, body: str) -> None:
            self.sent.append({"to": to, "subject": subject, "body": body})


    def test_notifier_sends_email():
        fake = FakeEmailClient()
        notifier = UserNotifier(email_client=fake)

        notifier.notify("[email protected]", "Hello!")

        assert len(fake.sent) == 1
        assert fake.sent[0]["to"] == "[email protected]"

No patch. No mocks. No environmental side effects. The test is deterministic, fast, and actually readable by a human being who might not share your intuitions about which internal attributes to assert on. This is what "testable by design" means in practice.
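For the production side, a minimal sketch of an SMTP-backed adapter that satisfies the EmailClient Protocol might look like the following. The class name SmtpEmailClient matches the one used later in the composition root, but its constructor parameters, the sender argument, and the smtp_factory hook are assumptions made for illustration rather than anything defined above.

```python
import smtplib
from email.message import EmailMessage
from typing import Callable


class SmtpEmailClient:
    """Hypothetical SMTP adapter; structurally satisfies EmailClient."""

    def __init__(
        self,
        host: str,
        port: int,
        username: str,
        password: str,
        sender: str,
        # Assumption: a factory hook so the adapter itself can be exercised
        # without a network connection.
        smtp_factory: Callable[[str, int], smtplib.SMTP] = smtplib.SMTP,
    ) -> None:
        self._host = host
        self._port = port
        self._username = username
        self._password = password
        self._sender = sender
        self._smtp_factory = smtp_factory

    def send(self, to: str, subject: str, body: str) -> None:
        message = EmailMessage()
        message["From"] = self._sender
        message["To"] = to
        message["Subject"] = subject
        message.set_content(body)
        # smtplib.SMTP is a context manager and closes the session on exit.
        with self._smtp_factory(self._host, self._port) as client:
            client.starttls()
            client.login(self._username, self._password)
            client.send_message(message)
```

All SMTP knowledge now lives in this one adapter, so UserNotifier and its tests never need to import smtplib.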

Constructor injection also has a wonderful diagnostic property: when a class's constructor takes five or six dependencies, that is a code smell. The class probably has too many responsibilities, and the pain of wiring them all together is the architecture telling you so. Listen to it.

Function-Level Injection: Passing Collaborators as Arguments

Not everything lives in a class, and Python is refreshingly honest about that. When your unit of work is a function rather than an object, inject dependencies as function arguments:

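    from dataclasses import dataclass
    from datetime import datetime
    from typing import Callable, Protocol


    # Purchase and PurchaseRepository are minimal stand-ins so the example runs;
    # substitute your own domain types.
    @dataclass
    class Purchase:
        user_id: int
        product_id: int
        timestamp: datetime


    class PurchaseRepository(Protocol):
        def save(self, purchase: Purchase) -> None: ...


    def record_purchase(
        user_id: int,
        product_id: int,
        repository: PurchaseRepository,
        clock: Callable[[], datetime],
    ) -> Purchase:
        purchase = Purchase(
            user_id=user_id,
            product_id=product_id,
            timestamp=clock(),  # time comes from the injected clock
        )
        repository.save(purchase)
        return purchase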

The clock parameter is particularly useful. Real time is a dependency. If record_purchase called datetime.now() directly, you could not test that the timestamp was recorded correctly without either mocking datetime (which is fiddly) or accepting that the test might be slightly non-deterministic. By injecting clock, you can pass lambda: datetime(2024, 1, 15, 12, 0, 0) in tests and know exactly what to assert.
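As a rough illustration of that last point, the test below pins the clock to a fixed instant. The Purchase dataclass and InMemoryPurchaseRepository are hypothetical stand-ins invented for this sketch; only record_purchase follows the shape shown above.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Callable


@dataclass(frozen=True)
class Purchase:  # hypothetical domain type
    user_id: int
    product_id: int
    timestamp: datetime


class InMemoryPurchaseRepository:  # hypothetical fake repository
    def __init__(self) -> None:
        self.saved: list[Purchase] = []

    def save(self, purchase: Purchase) -> None:
        self.saved.append(purchase)


def record_purchase(
    user_id: int,
    product_id: int,
    repository: InMemoryPurchaseRepository,
    clock: Callable[[], datetime],
) -> Purchase:
    purchase = Purchase(user_id=user_id, product_id=product_id, timestamp=clock())
    repository.save(purchase)
    return purchase


def test_record_purchase_uses_injected_clock() -> None:
    fixed = datetime(2024, 1, 15, 12, 0, 0)
    repo = InMemoryPurchaseRepository()

    purchase = record_purchase(42, 7, repository=repo, clock=lambda: fixed)

    # The timestamp is exactly what the injected clock returned.
    assert purchase.timestamp == fixed
    assert repo.saved == [purchase]
```

In production the same function would simply be called with clock=datetime.now.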

This pattern is especially useful for pipeline-style code, where data flows through a sequence of transformation steps and each step receives its collaborators from the outside.

Default Argument Injection: Python's Lightweight Approach

Python has an idiom that gives you lightweight DI without any ceremony: default argument values. This is sometimes called "poor man's dependency injection," though I prefer to think of it as "the right tool for the right job":

    import smtplib
    from typing import Callable


    def send_welcome_email(
        user_email: str,
        smtp_factory: Callable[[], smtplib.SMTP] = lambda: smtplib.SMTP("smtp.gmail.com", 587),
    ) -> None:
        client = smtp_factory()
        # ... send email

The production caller uses the default. The test caller passes a lambda returning a fake. This is idiomatic Python and perfectly acceptable for simpler cases where you don't want to build full class hierarchies.

A subtler version uses None as the sentinel and builds the default lazily:

    def send_welcome_email(
        user_email: str,
        smtp_factory: Callable[[], smtplib.SMTP] | None = None,
    ) -> None:
        if smtp_factory is None:
            smtp_factory = lambda: smtplib.SMTP("smtp.gmail.com", 587)
        client = smtp_factory()
        # ...

This is the same None-sentinel idiom that guards against the mutable default argument trap that catches beginners: the default is built inside the function at call time rather than once at definition time, and it reads clearly. The downside is that it can obscure the dependency. Someone reading the function signature might not immediately grasp that smtp_factory is a significant collaborator, not an optional configuration detail. For anything more complex than a single external dependency, constructor injection communicates intent more clearly.
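To make the test side of this idiom concrete, here is a small sketch. The original elides the sending step, so the message text, the SENDER constant, the SmtpLike protocol, and the RecordingSmtp fake are all assumptions introduced purely for illustration.

```python
import smtplib
from typing import Callable, Optional, Protocol


class SmtpLike(Protocol):
    """The two SMTP methods this sketch relies on (hypothetical protocol)."""

    def sendmail(self, from_addr: str, to_addrs: str, msg: str) -> object: ...

    def quit(self) -> object: ...


SENDER = "noreply@example.com"  # hypothetical sender address


def send_welcome_email(
    user_email: str,
    smtp_factory: Optional[Callable[[], SmtpLike]] = None,
) -> None:
    if smtp_factory is None:
        # Production default, built lazily at call time.
        smtp_factory = lambda: smtplib.SMTP("smtp.gmail.com", 587)
    client = smtp_factory()
    try:
        # Hypothetical message; the original leaves this step out.
        client.sendmail(SENDER, user_email, "Subject: Welcome\r\n\r\nWelcome aboard!")
    finally:
        client.quit()


class RecordingSmtp:
    """Test double that records messages instead of sending them."""

    def __init__(self) -> None:
        self.messages: list[tuple[str, str, str]] = []
        self.closed = False

    def sendmail(self, from_addr: str, to_addrs: str, msg: str) -> None:
        self.messages.append((from_addr, to_addrs, msg))

    def quit(self) -> None:
        self.closed = True


def test_send_welcome_email_uses_injected_factory() -> None:
    fake = RecordingSmtp()

    send_welcome_email("new.user@example.com", smtp_factory=lambda: fake)

    assert fake.messages[0][1] == "new.user@example.com"
    assert fake.closed
```

Production callers leave smtp_factory unset and get the real client; the test never opens a socket.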

Manual DI: The Composition Root

Once you have constructor injection in place, the question becomes: who assembles the object graph? The answer is a composition root—a single place in the application, typically near the entry point, where all dependencies are wired together.

Here is a realistic example for a small web service:

    # composition_root.py
    import os

    from myapp.clients import SmtpEmailClient, PostgresUserRepository
    from myapp.services import UserService, UserNotifier
    from myapp.web import create_app


    def build_app():
        # Infrastructure
        email_client = SmtpEmailClient(
            host=os.environ["SMTP_HOST"],
            port=int(os.environ["SMTP_PORT"]),
            username=os.environ["SMTP_USER"],
            password=os.environ["SMTP_PASS"],
        )
        user_repo = PostgresUserRepository(
            dsn=os.environ["DATABASE_URL"],
        )

        # Services
        notifier = UserNotifier(email_client=email_client)
        user_service = UserService(
            repository=user_repo,
            notifier=notifier,
        )

        # Application
        return create_app(user_service=user_service)


    app = build_app()

This is sometimes dismissed as "boilerplate," but I would argue it is one of the most valuable files in the entire codebase. It is a complete, readable description of how your application is assembled. When a new developer joins the team and asks "how does this thing fit together?", this file answers that question without requiring them to trace through inheritance hierarchies or configuration systems. It is architecture made explicit.

There is a real engineering tradeoff here, though. As the Dependency Injector documentation notes, "The assembly code might get duplicated and it'll become harder to change the application structure." For small services with stable structures, manual DI is excellent. As the object graph grows—dozens of services, multiple environments, complex scoping rules—manual wiring becomes tedious and error-prone. That is the tipping point for a framework.

    graph LR
        subgraph "Production Composition Root"
            A[SmtpEmailClient] --> C[UserNotifier]
            B[PostgresUserRepository] --> D[UserService]
            C --> D
        end
        subgraph "Test Composition Root"
            E[FakeEmailClient] --> G[UserNotifier]
            F[InMemoryUserRepository] --> H[UserService]
            G --> H
        end
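The test composition root in the diagram can be sketched in a few lines. UserService's body, its register_user method, the repository's add method, and the WiredGraph container are assumptions for the sake of a runnable example; the document does not define them.

```python
from dataclasses import dataclass


class FakeEmailClient:
    def __init__(self) -> None:
        self.sent: list[dict] = []

    def send(self, to: str, subject: str, body: str) -> None:
        self.sent.append({"to": to, "subject": subject, "body": body})


class InMemoryUserRepository:
    """Hypothetical fake; the real repository interface is not shown above."""

    def __init__(self) -> None:
        self.emails: list[str] = []

    def add(self, email: str) -> None:
        self.emails.append(email)


class UserNotifier:
    def __init__(self, email_client: FakeEmailClient) -> None:
        self._email_client = email_client

    def notify(self, user_email: str, message: str) -> None:
        self._email_client.send(to=user_email, subject="Notification", body=message)


class UserService:
    """Hypothetical service: stores the user, then notifies them."""

    def __init__(self, repository: InMemoryUserRepository, notifier: UserNotifier) -> None:
        self._repository = repository
        self._notifier = notifier

    def register_user(self, email: str) -> None:
        self._repository.add(email)
        self._notifier.notify(email, "Welcome!")


@dataclass
class WiredGraph:
    """Bundles the service with the fakes so tests can inspect them."""

    user_service: UserService
    email_client: FakeEmailClient
    user_repo: InMemoryUserRepository


def build_test_graph() -> WiredGraph:
    # Same wiring order as the production root, with fakes at the leaves.
    email_client = FakeEmailClient()
    user_repo = InMemoryUserRepository()
    notifier = UserNotifier(email_client=email_client)
    user_service = UserService(repository=user_repo, notifier=notifier)
    return WiredGraph(user_service, email_client, user_repo)
```

Returning the fakes alongside the service lets a test assert on what was stored or sent without reaching into private attributes.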

When to Reach for a DI Framework

The honest answer to "when should I use a DI framework?" is: later than you think, but earlier than you feel comfortable with.

Manual DI is sufficient when:

- The application has fewer than roughly 15-20 distinct services

- The object graph is relatively stable (not many conditional wiring scenarios)

- You only have one or two deployment environments

- You are not doing scoped lifecycle management (per-request dependencies, for example)

A framework starts earning its keep when:

- You have complex lifecycle management needs (some objects are singletons, some are per-request, some are factory-created)

- You have multiple environments or profiles that require different concrete implementations

- The composition root file is getting long enough that it has its own bugs

- You want to reuse partial graphs (e.g., the same database connection logic in multiple containers)

The most popular Python DI library is Dependency Injector, which provides a clean, declarative approach. Let's dig into it properly.

The Dependency Injector Library: Containers and Providers

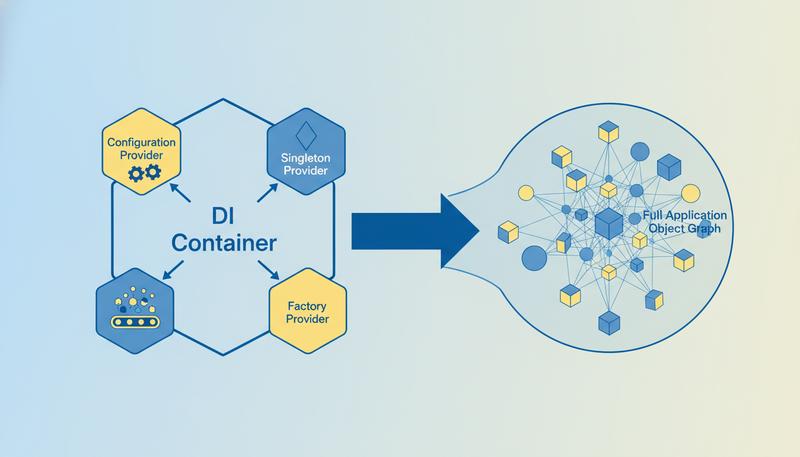

The central concept in Dependency Injector is the Container—a class that declares how to build your object graph. Each entry in the container is a Provider that knows how to create (or retrieve) a specific object.

Here is our previous example rewritten using the library:

    from dependency_injector import containers, providers
    from dependency_injector.wiring import Provide, inject

    from myapp.clients import SmtpEmailClient, PostgresUserRepository
    from myapp.services import UserService, UserNotifier


    class Container(containers.DeclarativeContainer):
        config = providers.Configuration()

        email_client = providers.Singleton(
            SmtpEmailClient,
            host=config.smtp.host,
            port=config.smtp.port,
            username=config.smtp.username,
            password=config.smtp.password,
        )

        user_repository = providers.Singleton(
            PostgresUserRepository,
            dsn=config.database.url,
        )

        notifier = providers.Factory(
            UserNotifier,
            email_client=email_client,
        )

        user_service = providers.Factory(
            UserService,
            repository=user_repository,
            notifier=notifier,
        )

Three provider types deserve understanding:

providers.Singleton creates the object once and returns the same instance on every subsequent call. Use this for stateful shared resources: database connection pools, HTTP clients, configuration objects.

providers.Factory creates a new instance on every call. Use this for services that should be fresh per-request, or that hold request-scoped state.

providers.Configuration is a special provider that loads configuration from files, environment variables, or dictionaries. It supports dot-notation access and lazy loading.
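The difference between the first two provider types is easiest to see in a toy model. The sketch below is plain Python and is not how Dependency Injector is implemented; ToyFactory, ToySingleton, Pool, and Service are invented names that only mimic the behaviour described above.

```python
from typing import Any, Callable

_UNSET = object()


class ToyFactory:
    """Builds a new object on every call, resolving nested providers first."""

    def __init__(self, builder: Callable[..., Any], **dependencies: Any) -> None:
        self._builder = builder
        self._dependencies = dependencies

    def __call__(self) -> Any:
        kwargs = {
            name: dep() if isinstance(dep, ToyFactory) else dep
            for name, dep in self._dependencies.items()
        }
        return self._builder(**kwargs)


class ToySingleton(ToyFactory):
    """Builds the object on first call, then keeps returning that instance."""

    def __init__(self, builder: Callable[..., Any], **dependencies: Any) -> None:
        super().__init__(builder, **dependencies)
        self._instance: Any = _UNSET

    def __call__(self) -> Any:
        if self._instance is _UNSET:
            self._instance = super().__call__()
        return self._instance


class Pool:  # stands in for a shared resource such as a connection pool
    pass


class Service:  # stands in for a per-request service
    def __init__(self, pool: Pool) -> None:
        self.pool = pool


pool_provider = ToySingleton(Pool)
service_provider = ToyFactory(Service, pool=pool_provider)

first, second = service_provider(), service_provider()
assert first is not second          # Factory: a fresh service each time
assert first.pool is second.pool    # Singleton: one shared pool
```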

To use the container in your application code with automatic wiring:

    @inject
    def get_user_handler(
        user_id: int,
        user_service: UserService = Provide[Container.user_service],
    ) -> User:
        return user_service.get_user(user_id)

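The @inject decorator and Provide[Container.user_service] marker tell the framework to look up user_service in the container at call time. For that lookup to happen, the container must first be wired to the module that defines the function, typically by calling container.wire(modules=[...]) once at start-up. The Provide marker also reads as documentation: it makes the dependency explicit in the function signature while letting the framework handle the plumbing.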

DI and Testing: Swapping Dependencies Without Monkey-Patching

This is where Dependency Injector shines over manual DI for larger projects. The library provides a first-class override mechanism:

    def test_user_notification(container: Container):
        fake_email_client = FakeEmailClient()

        with container.email_client.override(fake_email_client):
            # Within this block, any request for email_client
            # returns our fake, including deep in the object graph
            service = container.user_service()
            service.register_user("[email protected]")

        assert len(fake_email_client.sent) == 1
        assert fake_email_client.sent[0]["to"] == "[email protected]"

Notice what we did not do: we did not call unittest.mock.patch('myapp.clients.SmtpEmailClient'). We did not inspect internal attributes. We did not assert that some constructor was called with specific arguments. We just ran the code and checked the observable outcome.

This is the design goal made real. The Dependency Injector documentation makes the same point about one of its own examples, which "shows how to use providers' overriding feature of Dependency Injector for testing or re-configuring a project in different environments and explains why it's better than monkey-patching."

Monkey-patching works by reaching into a module's namespace and replacing a name with a fake during the test, then restoring it afterward. This is fragile because:

- It depends on knowing how the dependency is imported, not just what it does

- It creates test ordering dependencies if restoration fails

- It reveals and tests implementation details rather than behaviour
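The first of those points can be demonstrated with nothing but the standard library. In this sketch the function names are made up for illustration; the behaviour shown is simply how unittest.mock.patch and Python name binding interact.

```python
import time
from time import time as imported_time
from unittest.mock import patch


def now_via_module() -> float:
    # Looks up the attribute "time" on the time module at call time.
    return time.time()


def now_via_imported_name() -> float:
    # Uses a name that was bound to the real function at import time.
    return imported_time()


def patched_readings() -> tuple[float, float]:
    # The patch replaces the attribute on the module, not every reference to it.
    with patch("time.time", return_value=0.0):
        return now_via_module(), now_via_imported_name()


via_module, via_name = patched_readings()
assert via_module == 0.0   # the patch took effect here
assert via_name != 0.0     # and silently missed this call site
```

Whether the patch works depends on an import statement in the code under test, which is exactly the kind of implementation detail a test should not need to know.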

The DI approach replaces the dependency at the composition layer, which is the only place that should know about concrete implementations. The business logic code never changes; only the object handed to it changes.

A practical pytest pattern using the library:

    import pytest

    from myapp.container import Container


    @pytest.fixture
    def container():
        c = Container()
        c.config.from_dict({
            "smtp": {"host": "localhost", "port": 25, "username": "", "password": ""},
            "database": {"url": "sqlite:///:memory:"},
        })
        return c


    @pytest.fixture
    def fake_email(container):
        fake = FakeEmailClient()
        with container.email_client.override(fake):
            # The override is active for the duration of the test only.
            yield fake

With these fixtures, any test that needs to verify email behaviour just declares fake_email as a parameter. The container handles everything else.
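To build some intuition for why an override wrapped in a with block cleans up after itself, here is a toy provider written in plain Python. It is a conceptual sketch only, not the library's implementation, and ToyProvider is an invented name.

```python
from contextlib import contextmanager
from typing import Any, Callable, Iterator


class ToyProvider:
    """Returns the latest override if one is active, else builds the real thing."""

    def __init__(self, builder: Callable[[], Any]) -> None:
        self._builder = builder
        self._overrides: list[Any] = []

    def __call__(self) -> Any:
        if self._overrides:
            return self._overrides[-1]
        return self._builder()

    @contextmanager
    def override(self, replacement: Any) -> Iterator[Any]:
        self._overrides.append(replacement)
        try:
            yield replacement
        finally:
            # Runs even if the test body raises, so state never leaks.
            self._overrides.pop()


email_client = ToyProvider(lambda: "real client")

with email_client.override("fake client"):
    assert email_client() == "fake client"

assert email_client() == "real client"
```

The replacement is scoped to the block, so a failing test cannot leave the fake in place for the next one.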

The Service Locator Anti-Pattern

There is a pattern that looks superficially like DI but is its philosophical opposite: the Service Locator. Understanding why it fails is as important as understanding why DI succeeds.

A Service Locator is a global registry that any component can query to retrieve its dependencies:

    # service_locator.py (do not do this)
    from typing import Any

    _registry: dict[str, Any] = {}


    def register(name: str, service: Any) -> None:
        _registry[name] = service


    def get(name: str) -> Any:
        if name not in _registry:
            raise KeyError(f"No service registered for '{name}'")
        return _registry[name]


    # Elsewhere, inside a class that imports service_locator (this is the problem)
    class UserNotifier:
        def notify(self, user_email: str, message: str) -> None:
            email_client = service_locator.get("email_client")  # Hidden dependency!
            email_client.send(to=user_email, subject="Notification", body=message)

The Service Locator violates the principle that dependencies should be declared, not discovered. UserNotifier's constructor signature tells you nothing about what it needs. A developer reading the class has no idea that it requires an email_client until they either run it or read the entire body. The dependency is hidden.

Contrast with constructor injection:

    class UserNotifier:
        def __init__(self, email_client: EmailClient) -> None:
            ...  # The dependency is RIGHT HERE, in the signature

With constructor injection, the class is self-documenting. With a Service Locator, you need to hunt. The Service Locator also makes testing harder in a subtle way: you must configure the global registry before each test, and you must remember to clean it up. Fail to clean up and tests bleed state into each other—one of the most insidious test bugs.

The deeper problem is that the Service Locator moves the dependency problem rather than solving it. Instead of depending on SmtpEmailClient, UserNotifier now depends on service_locator and the string "email_client". You have traded a concrete dependency for a global mutable dictionary. That is not an improvement.

    graph TD
        subgraph "Service Locator (anti-pattern)"
            A[UserNotifier] -->|"get('email_client')"| B[Global Registry]
            B --> C[SmtpEmailClient]
            style B fill:#ffcccc,stroke:#cc0000
        end
        subgraph "Dependency Injection (correct)"
            D[UserNotifier] -->|declared dep| E[EmailClient Protocol]
            F[Composition Root] -->|injects| D
            F -->|creates| G[SmtpEmailClient]
            G -.implements.-> E
            style F fill:#ccffcc,stroke:#006600
        end

The clearest heuristic: if your class calls something to fetch a dependency, you are looking at a Service Locator. If the dependency arrives at the class (via constructor, function argument, or property), it is dependency injection. Dependencies should be pushed in, not pulled out.

Putting It Together: A Worked Example

Let me show you a before/after at a slightly larger scale, to make the transformation visceral rather than abstract.

Before: tightly coupled, untestable

    import os
    import psycopg2
    import smtplib


    class UserRegistrationService:
        def register(self, email: str, password: str) -> int:
            conn = psycopg2.connect(os.getenv("DATABASE_URL"))
            cursor = conn.cursor()
            cursor.execute(
                "INSERT INTO users (email, password_hash) VALUES (%s, crypt(%s, gen_salt('bf'))) RETURNING id",
                (email, password)
            )
            user_id = cursor.fetchone()[0]
            conn.commit()

            # Send welcome email
            smtp = smtplib.SMTP("smtp.gmail.com", 587)
            smtp.starttls()
            smtp.login(os.getenv("EMAIL_USER"), os.getenv("EMAIL_PASS"))
            smtp.sendmail("[email protected]", email, f"Welcome, {email}!")
            smtp.quit()

            return user_id

To test this, you need a real database, a real SMTP server, and real environment variables. This kind of code is the reason integration test suites take 20 minutes to run.

After: loosely coupled, testable

    from typing import Protocol


    class UserRepository(Protocol):
        def create_user(self, email: str, password: str) -> int: ...


    class Mailer(Protocol):
        def send_welcome(self, email: str) -> None: ...


    class UserRegistrationService:
        def __init__(self, repository: UserRepository, mailer: Mailer) -> None:
            self._repository = repository
            self._mailer = mailer

        def register(self, email: str, password: str) -> int:
            user_id = self._repository.create_user(email, password)
            self._mailer.send_welcome(email)
            return user_id

The service now expresses pure business logic: create a user, send a welcome email, return the ID. The how of creating users and sending emails is no longer its concern. Testing is now trivial:

    class InMemoryUserRepository:
        def __init__(self):
            self._users: dict[int, dict] = {}
            self._next_id = 1

        def create_user(self, email: str, password: str) -> int:
            user_id = self._next_id
            self._users[user_id] = {"email": email, "password": password}
            self._next_id += 1
            return user_id


    class CapturingMailer:
        def __init__(self):
            self.sent_to: list[str] = []

        def send_welcome(self, email: str) -> None:
            self.sent_to.append(email)


    def test_registration_creates_user_and_sends_welcome():
        repo = InMemoryUserRepository()
        mailer = CapturingMailer()
        service = UserRegistrationService(repository=repo, mailer=mailer)

        user_id = service.register("[email protected]", "s3cret")

        assert user_id == 1
        assert "[email protected]" in mailer.sent_to

This test runs in milliseconds, requires no external infrastructure, and will never have a flaky day due to network timeouts. It tests exactly one thing: the registration logic. The database adapter and the SMTP adapter are tested separately, in integration tests that actually hit those systems. That separation—unit tests for logic, integration tests for adapters—is only possible when dependencies are injectable.
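For the adapter side of that split, an integration test talks to a real database. The sketch below swaps PostgreSQL for the standard library's sqlite3 purely so the example is self-contained; SqliteUserRepository, its table layout, and the PBKDF2 hashing are assumptions for illustration, not the original schema or its crypt() call.

```python
import hashlib
import os
import sqlite3


class SqliteUserRepository:
    """Hypothetical adapter that satisfies the UserRepository Protocol."""

    def __init__(self, connection: sqlite3.Connection) -> None:
        self._connection = connection
        self._connection.execute(
            "CREATE TABLE IF NOT EXISTS users ("
            "id INTEGER PRIMARY KEY AUTOINCREMENT, "
            "email TEXT NOT NULL UNIQUE, "
            "password_hash TEXT NOT NULL)"
        )

    def create_user(self, email: str, password: str) -> int:
        # Salted PBKDF2 stands in for the database-side crypt() call.
        salt = os.urandom(16)
        digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, 100_000)
        # The connection context manager commits on success, rolls back on error.
        with self._connection:
            cursor = self._connection.execute(
                "INSERT INTO users (email, password_hash) VALUES (?, ?)",
                (email, f"{salt.hex()}:{digest.hex()}"),
            )
        return int(cursor.lastrowid)


def test_sqlite_repository_assigns_ids_and_hashes_passwords() -> None:
    connection = sqlite3.connect(":memory:")
    repo = SqliteUserRepository(connection)

    first = repo.create_user("a@example.com", "s3cret")
    second = repo.create_user("b@example.com", "s3cret")

    assert (first, second) == (1, 2)
    stored = connection.execute(
        "SELECT password_hash FROM users WHERE id = ?", (first,)
    ).fetchone()[0]
    assert "s3cret" not in stored
```

This test exercises SQL and storage details only. The registration logic has its own fast unit test and needs no database at all.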

Summary: The DI Mindset

Dependency injection is not primarily a technique; it is a way of thinking about who is responsible for what. Components should not decide how their collaborators are built. They should declare what they need, receive it from the outside, and get on with their actual work.

In Python specifically, the progression looks like this:

- Start with function arguments and constructor parameters. Ninety percent of the time, this is enough.

- Add a composition root as soon as the object graph becomes non-trivial. Even a simple build_app() function is better than scattered construction.

- Reach for a framework like Dependency Injector when lifecycle management, configuration-driven wiring, or environment profiles create meaningful complexity.

The payoff is not just testability, though that alone would justify the practice. It is also changeability. When you want to swap your PostgreSQL repository for a MongoDB one, or your synchronous email sender for an async queue-based one, you change one line in the composition root. The business logic is untouched. That is the promise of low coupling, and dependency injection is how you cash the cheque.
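As a rough sketch of that one-line swap, the example below wires the registration service to a queue-backed mailer. QueueMailer, the in-memory repository, and build_registration_service are hypothetical names chosen for illustration, and the standard library queue stands in for a real message broker.

```python
import queue
from typing import Protocol


class UserRepository(Protocol):
    def create_user(self, email: str, password: str) -> int: ...


class Mailer(Protocol):
    def send_welcome(self, email: str) -> None: ...


class UserRegistrationService:
    def __init__(self, repository: UserRepository, mailer: Mailer) -> None:
        self._repository = repository
        self._mailer = mailer

    def register(self, email: str, password: str) -> int:
        user_id = self._repository.create_user(email, password)
        self._mailer.send_welcome(email)
        return user_id


class InMemoryUserRepository:
    def __init__(self) -> None:
        self._next_id = 1

    def create_user(self, email: str, password: str) -> int:
        user_id = self._next_id
        self._next_id += 1
        return user_id


class QueueMailer:
    """Hypothetical mailer that enqueues work for a background sender."""

    def __init__(self, outbox: "queue.Queue[str]") -> None:
        self._outbox = outbox

    def send_welcome(self, email: str) -> None:
        self._outbox.put(email)


def build_registration_service(outbox: "queue.Queue[str]") -> UserRegistrationService:
    repository = InMemoryUserRepository()
    mailer = QueueMailer(outbox)  # the one line to change when swapping mailers
    return UserRegistrationService(repository=repository, mailer=mailer)
```

UserRegistrationService is byte-for-byte the same class as in the worked example; only the wiring function knows which mailer is in play.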
