Testing as an Architecture Concern

Here's something uncomfortable that most testing courses skip over: if your code is hard to test, your architecture is broken. Not "could use improvement." Broken. Testability isn't something you bolt onto a finished system—it's a direct reflection of the structural choices you made (or failed to make) along the way.

Everything we've covered in this course—Protocols, dependency injection, layered architecture, hexagonal ports and adapters, a clean domain model—was built with testability in mind. This section is where those threads actually tie together. We'll look at how clean architecture creates a fast, trustworthy test suite, how to write tests that tell you something real when they fail, and how to spot the subtle trap of mocking yourself into a false sense of security.

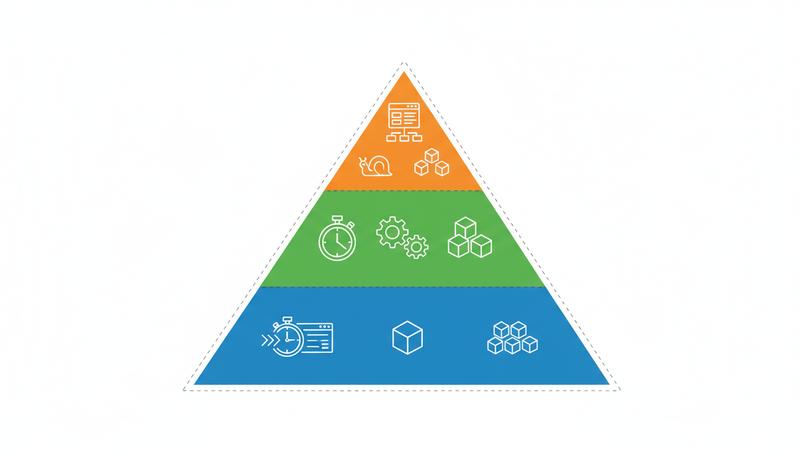

The Test Pyramid: Not Just a Pretty Triangle

The test pyramid has been around long enough that it's easy to glance past. But it's worth understanding properly in a Python context, because the forces it describes are real and the trade-offs matter.

The pyramid breaks down into three test layers, each with its own job:

Unit tests exercise a single unit—a function, a class, a domain aggregate—completely isolated from the outside world. They run in milliseconds. You should have hundreds or thousands of them. They're your main feedback loop while you're developing.

Integration tests verify that your code actually works with real external systems—a database, a message broker, an HTTP API you're calling. They're slower (network round-trips, filesystem I/O), more fragile, and costlier to maintain. You need enough to trust your adapters, but not so many that your CI becomes a twenty-minute wait.

End-to-end tests run the whole stack from the outside—an HTTP client hitting your API, checking the response. They're your final confidence check, but they're slow, flaky (race conditions, test data contamination, environment drift), and when they fail, they often don't tell you why. Save these for your most critical user journeys.

The pyramid's real insight is about proportion. Most tests should be unit tests, fewer should be integration tests, and just a handful should be end-to-end. Teams that invert this—lots of E2E tests, almost no unit tests—end up with slow feedback, fragile CI pipelines, and a codebase everyone fears changing because they can't know if something broke until the pipeline finishes forty minutes later.

Martin Fowler's write-up of the test pyramid, a model first described by Mike Cohn, is still the standard reference. The inverted shape, often called the "ice cream cone anti-pattern" (mostly manual and E2E tests, barely any unit tests), is still frustratingly common in Python web projects.

Testability Is an Architectural Quality

Let's be precise about what testability actually means, because it gets confused with "has tests."

A system is testable when you can exercise any meaningful behavior in isolation, quickly, without spinning up real infrastructure. That's the whole thing. And whether that's possible depends almost entirely on your architectural decisions:

- Does your domain logic live in classes that take their dependencies as constructor arguments, or does it directly reach into databases, environment variables, config files?

- Are your I/O operations hidden behind interfaces (Protocols, in Python terms) that you can swap out for in-memory fakes?

- Is your business logic tangled together with HTTP routing, ORM queries, email sending?

Write a function that directly instantiates a database connection, and you've guaranteed that you can't test it without a running database. That's not a testing problem. That's a design problem. The test is just showing you where it breaks.
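To make the contrast concrete, here is a minimal sketch. The names `shipping_fee_hardwired`, `ShippingPolicy`, `shipping_fee`, and the `FREE_SHIPPING_THRESHOLD` environment variable are hypothetical, invented for illustration. The first function reaches into the process environment, so a test has to manipulate global state to exercise it; the second receives everything it needs as arguments.

```python
import os
from dataclasses import dataclass
from decimal import Decimal


def shipping_fee_hardwired(subtotal: Decimal) -> Decimal:
    # Hard to test: the rule is entangled with the process environment.
    threshold = Decimal(os.environ["FREE_SHIPPING_THRESHOLD"])
    return Decimal("0") if subtotal >= threshold else Decimal("4.99")


@dataclass(frozen=True)
class ShippingPolicy:
    """Hypothetical value object holding the configurable parts of the rule."""
    free_threshold: Decimal
    flat_fee: Decimal


def shipping_fee(subtotal: Decimal, policy: ShippingPolicy) -> Decimal:
    # Easy to test: inputs in, value out, nothing read from the outside world.
    return Decimal("0") if subtotal >= policy.free_threshold else policy.flat_fee


def test_orders_above_threshold_ship_free():
    policy = ShippingPolicy(free_threshold=Decimal("40.00"), flat_fee=Decimal("4.99"))
    assert shipping_fee(Decimal("50.00"), policy) == Decimal("0")
    assert shipping_fee(Decimal("10.00"), policy) == Decimal("4.99")
```

The production entry point can still read the environment once and build a `ShippingPolicy` from it; the difference is that the business rule no longer cares where the numbers came from.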

graph LR
    A[Untestable Code] --> B[Hard-coded Dependencies]
    A --> C[Global State]
    A --> D[Mixed Concerns]
    E[Testable Code] --> F[Injected Dependencies]
    E --> G[Pure Functions / Value Objects]
    E --> H[Separated Layers]
    F --> I[Swappable Fakes]
    G --> J[Fast Unit Tests]
    H --> K[Targeted Tests Per Layer]

The Python Dependency Injector documentation spells this out directly: "Low coupling brings flexibility. Your code becomes easier to change and test." Coupling and testability move in opposite directions. When you swap out hard-coded dependencies for injected ones, testability comes along automatically.

This is why the architecture decisions from earlier sections weren't theoretical exercises. They were structural requirements.

Seams: Where Tests Get Their Grip

A seam is a place in the code where you can change behavior without modifying that code. Michael Feathers coined the term in Working Effectively With Legacy Code, and it's the most useful lens for understanding why some code feels easy to test and other code is a nightmare.

In Python, seams come in a few flavors:

Constructor injection is the clearest one. If a class receives its collaborators through __init__, you can pass anything—the real implementation in production, a fake in tests.

# No seam: dependency is locked in
class OrderService:
    def __init__(self):
        self.repo = PostgresOrderRepository()  # stuck with Postgres

# Seam: dependency is injected
class OrderService:
    def __init__(self, repo: OrderRepository):
        self.repo = repo  # tests can pass InMemoryOrderRepository

Protocol boundaries are seams defined by structure rather than inheritance. If OrderService depends on anything that satisfies the OrderRepository Protocol, you can satisfy it with a fake that never touches a database. We covered this in the Protocols section, but the real value shows up here.
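As a rough sketch of what such a boundary can look like, the block below defines a small `OrderRepository` Protocol and a dictionary-backed class that satisfies it by shape alone. The method set, the `Order` fields, and the `DictOrderRepository` name are assumptions made for this example, not the course's actual definitions.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Protocol


@dataclass
class Order:
    id: str
    customer_id: str


class OrderRepository(Protocol):
    """The port: any object with these two methods qualifies."""

    def get(self, order_id: str) -> Optional[Order]: ...

    def save(self, order: Order) -> None: ...


class DictOrderRepository:
    """Matches OrderRepository structurally; note there is no inheritance."""

    def __init__(self) -> None:
        self._orders: Dict[str, Order] = {}

    def get(self, order_id: str) -> Optional[Order]:
        return self._orders.get(order_id)

    def save(self, order: Order) -> None:
        self._orders[order.id] = order


class OrderService:
    def __init__(self, repo: OrderRepository) -> None:
        self.repo = repo

    def place_order(self, order_id: str, customer_id: str) -> Order:
        order = Order(id=order_id, customer_id=customer_id)
        self.repo.save(order)
        return order


def test_placed_order_can_be_loaded_again():
    repo = DictOrderRepository()
    service = OrderService(repo=repo)
    service.place_order(order_id="o-1", customer_id="c-1")
    assert repo.get("o-1") == Order(id="o-1", customer_id="c-1")
```

A static type checker will accept `DictOrderRepository` wherever `OrderRepository` is expected, which is the property that lets the fake and the real adapter stay interchangeable.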

Function arguments are the simplest seams. A pure function that takes all its inputs as arguments and returns a value is trivially testable because the inputs and outputs are the entire surface you interact with. No mocking, no patching, no setup choreography. This is why a well-designed domain layer—full of pure functions and value objects—produces a test suite that runs in under a second.
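A small illustration of the idea, using a hypothetical `is_overdue` rule: the current time is passed in as an argument instead of being read from the system clock inside the function, so the test controls it completely. The 30-day default is an assumption made for the example.

```python
from datetime import datetime, timedelta, timezone


def is_overdue(
    placed_at: datetime,
    now: datetime,
    max_age: timedelta = timedelta(days=30),
) -> bool:
    """Return True when the order is older than max_age at the given moment."""
    return now - placed_at > max_age


def test_order_becomes_overdue_after_thirty_days():
    placed = datetime(2024, 1, 1, tzinfo=timezone.utc)
    # Exactly 30 days old is still within the limit.
    assert not is_overdue(placed, now=placed + timedelta(days=30))
    # One second past the limit is overdue.
    assert is_overdue(placed, now=placed + timedelta(days=30, seconds=1))
```

Had the function called `datetime.now()` internally, the same test would need patching or a sleep; with `now` as a parameter it needs neither.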

Test Doubles in Python: A Practical Taxonomy

"Mock" has become a catch-all term, which muddies the water because it conflates several different tools with different strengths and failure modes. Here's the vocabulary that actually matters:

Fakes are working implementations with simplified behavior. An in-memory repository that stores orders in a dictionary is a fake. It actually works—you can add an order, retrieve it by ID, list them all—but it never touches a database. Fakes are powerful because they're realistic. They often reveal design problems (if your fake is getting complicated, your interface is probably too broad).

Stubs return canned answers. They don't verify anything; they just stand in for a collaborator that would otherwise need real infrastructure. A stub EmailSender that accepts messages and quietly discards them, or a stub PaymentGateway that always returns a successful charge, is a typical example.

Mocks are stubs with assertions built in. In the classic sense, you configure expectations upfront ("this method should be called exactly once with these arguments"), and the test fails if those expectations aren't met. Python's unittest.mock.Mock can fill this role, though it checks calls after the fact (act first, assert afterwards) rather than taking expectations upfront, and it's flexible enough to do stub work too.

Spies record calls and let you verify them after the fact instead of setting expectations upfront. Python's MagicMock (a Mock subclass with the magic methods preconfigured) acts like a spy: you call it however you want, then inspect .call_args, .call_count, etc.

from unittest.mock import MagicMock

def test_order_service_calls_repository_with_new_order():
    repo = MagicMock()
    service = OrderService(repo=repo)

    service.place_order(customer_id="c-1", items=[...])

    repo.save.assert_called_once()
    saved_order = repo.save.call_args[0][0]
    assert saved_order.customer_id == "c-1"

When should you reach for which? A rough guide: use fakes for stateful collaborators (repositories, caches), use stubs for external services where you don't care about call patterns, and use mocks sparingly—only when the interaction itself is what you're actually testing.
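The guide above can be sketched with hand-written doubles. Everything here (`CheckoutService`, the two gateway stubs, `RecordingEmailSender`) is hypothetical and exists only to show a stub and a spy side by side without reaching for unittest.mock.

```python
from typing import List, Tuple


class AlwaysApprovesGateway:
    """Stub: returns a canned answer and records nothing."""

    def charge(self, customer_id: str, amount_cents: int) -> bool:
        return True


class AlwaysDeclinesGateway:
    """Stub for the unhappy path."""

    def charge(self, customer_id: str, amount_cents: int) -> bool:
        return False


class RecordingEmailSender:
    """Spy: remembers what it was asked to send so a test can inspect it."""

    def __init__(self) -> None:
        self.sent: List[Tuple[str, str]] = []

    def send(self, to: str, subject: str) -> None:
        self.sent.append((to, subject))


class CheckoutService:
    def __init__(self, gateway, emails) -> None:
        self.gateway = gateway
        self.emails = emails

    def checkout(self, customer_id: str, email: str, amount_cents: int) -> bool:
        if not self.gateway.charge(customer_id, amount_cents):
            return False
        self.emails.send(email, "Payment received")
        return True


def test_receipt_is_sent_only_when_charge_succeeds():
    emails = RecordingEmailSender()
    ok = CheckoutService(AlwaysApprovesGateway(), emails)
    assert ok.checkout("c-1", "alice@example.com", 1999) is True
    assert emails.sent == [("alice@example.com", "Payment received")]

    emails = RecordingEmailSender()
    declined = CheckoutService(AlwaysDeclinesGateway(), emails)
    assert declined.checkout("c-1", "alice@example.com", 1999) is False
    assert emails.sent == []
```

The gateway stubs only steer the code down one path or the other; the spy is inspected because sending the receipt is the behavior under test.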

The danger with mocks is that they test implementation rather than behavior. If your test asserts that repo.save was called with exactly these arguments, in exactly this sequence relative to other calls, you've built a test that breaks every time you refactor the implementation, even if the behavior hasn't changed. That's a test that costs more than it's worth.

pytest as a Design-Aware Framework

pytest is the obvious choice for Python testing, and it's not just because of the clean syntax. pytest's fixture system is, if you look at it sideways, a dependency injection mechanism. Understanding it that way makes you write better tests.

A pytest fixture is a function that provides a value to tests that request it as a parameter. Fixtures can depend on other fixtures. They have scopes (function, class, module, package, and session). They can yield, letting you handle setup and cleanup in one function. This is genuinely elegant.

import pytest

from myapp.repositories import InMemoryOrderRepository
from myapp.services import OrderService
from myapp.domain import Customer

@pytest.fixture
def order_repo():
    return InMemoryOrderRepository()

@pytest.fixture
def order_service(order_repo):
    return OrderService(repo=order_repo)

@pytest.fixture
def existing_customer():
    return Customer(id="c-1", name="Alice", email="alice@example.com")

def test_place_order_increases_order_count(order_service, order_repo, existing_customer):
    order_service.place_order(customer=existing_customer, items=["item-a"])

    assert len(order_repo.all()) == 1

Watch what happens: order_service needs order_repo, and pytest wires that automatically. This mirrors how your production DI container (or manual wiring) works. The test reads naturally, the setup is reusable, and writing a new test that needs an OrderService is one line.

The scope feature is especially valuable for integration tests where creating a real database connection for each test would be prohibitively slow:

@pytest.fixture(scope="session")
def db_connection():
    conn = create_test_database()
    yield conn
    conn.close()
    drop_test_database()

Session-scoped fixtures run once for the entire session. Combined with a transactional fixture that rolls back after each test, you get a fast, isolated integration test suite that uses a real database without rebuilding it for every test case.
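The rollback-per-test idea can be sketched without pytest at all. The block below uses an in-memory SQLite database from the standard library as a stand-in for a real server; the `orders` schema and the helper names are assumptions for illustration. In a pytest suite, `create_test_database` would sit behind the session-scoped fixture and `rolled_back` would become a function-scoped fixture that yields the connection.

```python
import sqlite3
from contextlib import contextmanager


def create_test_database() -> sqlite3.Connection:
    # Expensive setup that should happen once per session.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id TEXT PRIMARY KEY, customer_id TEXT)")
    conn.commit()
    return conn


@contextmanager
def rolled_back(conn: sqlite3.Connection):
    """Hand out the shared connection, then undo whatever the test wrote."""
    try:
        yield conn
    finally:
        conn.rollback()


def count_orders(conn: sqlite3.Connection) -> int:
    return conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]


def test_writes_do_not_leak_between_tests():
    conn = create_test_database()

    with rolled_back(conn) as db:
        db.execute("INSERT INTO orders VALUES ('o-1', 'c-1')")
        assert count_orders(db) == 1  # visible inside the "test"

    # The next "test" starts from a clean table again.
    assert count_orders(conn) == 0
    conn.close()
```

The schema is built once, while each test's writes disappear on rollback. One caveat of this approach: code under test that commits on its own will escape the rollback, so real suites often wrap the connection in a nested transaction or savepoint instead.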

Testing the Domain Layer in Isolation

Your domain layer—entities, value objects, aggregates, domain services—should be the easiest thing in your codebase to test. If it isn't, something is pulling domain logic into places it shouldn't be.

A well-designed domain test looks like this:

from decimal import Decimal

import pytest

from myapp.domain import InvalidOrderStateError, Order, OrderItem

def test_order_total_includes_all_items():
    order = Order.create(customer_id="c-1")
    order.add_item(OrderItem(sku="WIDGET-1", quantity=2, unit_price=Decimal("9.99")))
    order.add_item(OrderItem(sku="WIDGET-2", quantity=1, unit_price=Decimal("4.50")))

    # 2 * 9.99 + 1 * 4.50 = 24.48
    assert order.total == Decimal("24.48")

def test_cannot_add_item_with_zero_quantity():
    order = Order.create(customer_id="c-1")

    with pytest.raises(ValueError, match="Quantity must be positive"):
        order.add_item(OrderItem(sku="WIDGET-1", quantity=0, unit_price=Decimal("9.99")))

def test_cancel_fulfilled_order_raises_domain_error():
    order = Order.create(customer_id="c-1")
    order.mark_fulfilled()

    with pytest.raises(InvalidOrderStateError):
        order.cancel()

No fixtures, no mocks, no database, no HTTP. These run in microseconds and they test the rules that actually matter: the invariants that protect your business logic. A thousand tests like this will finish in under two seconds on any decent hardware.

This is what you get from isolating your domain layer, which we covered in the Domain Layer section. When your domain objects are pure Python—no SQLAlchemy models, no Pydantic HTTP schemas, no framework trickery—they become trivially testable.

One subtlety: test behavior, not internal structure. Don't assert that order._items has two elements; assert that order.total returns the right value. Testing internal state directly means your tests will break every time you reorganize the internals, even though nothing observable changed.
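A compact sketch of the difference, using a simplified stand-in for the domain classes (the real `Order` in this course has more to it; this version is an assumption pared down to what the example needs):

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import List


@dataclass(frozen=True)
class OrderItem:
    sku: str
    quantity: int
    unit_price: Decimal


class Order:
    def __init__(self) -> None:
        self._items: List[OrderItem] = []  # internal detail, free to change

    def add_item(self, item: OrderItem) -> None:
        if item.quantity <= 0:
            raise ValueError("Quantity must be positive")
        self._items.append(item)

    @property
    def total(self) -> Decimal:
        return sum(
            (item.unit_price * item.quantity for item in self._items),
            Decimal("0"),
        )


def test_total_reflects_added_items():
    order = Order()
    order.add_item(OrderItem("WIDGET-1", 2, Decimal("9.99")))
    order.add_item(OrderItem("WIDGET-2", 1, Decimal("4.50")))

    # Brittle alternative, avoided here: assert len(order._items) == 2
    assert order.total == Decimal("24.48")
```

If `_items` later becomes a dictionary keyed by SKU, the behavioral assertion keeps passing, while the commented-out structural one would need rewriting even though no customer-visible rule changed.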

Testing Application Services: The Fake Repository Pattern

Application services orchestrate the domain—they load entities from repositories, invoke domain operations, and persist the results. They're a step harder to test than pure domain logic because they depend on repositories, but they're still much easier than testing HTTP or database layers.

The key tool here is the fake repository. Instead of mocking a repository with MagicMock, write a simple in-memory version:

from typing import Dict, List, Optional

from myapp.domain import Order

class InMemoryOrderRepository:
    """A working in-memory implementation for use in tests.

    It matches the OrderRepository Protocol by structure, so it does not
    need to import or inherit from it.
    """

    def __init__(self):
        self._store: Dict[str, Order] = {}

    def get(self, order_id: str) -> Optional[Order]:
        return self._store.get(order_id)

    def save(self, order: Order) -> None:
        self._store[order.id] = order

    def all(self) -> List[Order]:
        return list(self._store.values())

    def find_by_customer(self, customer_id: str) -> List[Order]:
        return [o for o in self._store.values() if o.customer_id == customer_id]

Twenty lines of code that eliminate the need for a database in any application service test. More importantly, it actually works—unlike a mock that just records calls, a fake repository genuinely satisfies the interface. If you add an order and then retrieve it by ID, you get that order back. This makes your tests more realistic without slowing them down.

from myapp.domain import Customer, OrderStatus

# order_service and order_repo are the fixtures defined earlier
def test_cancel_order_changes_status_to_cancelled(order_service, order_repo):
    # Arrange
    customer = Customer(id="c-1", name="Alice", email="alice@example.com")
    order = order_service.place_order(customer=customer, items=["item-a"])

    # Act
    order_service.cancel_order(order_id=order.id, reason="Customer request")

    # Assert
    retrieved = order_repo.get(order.id)
    assert retrieved.status == OrderStatus.CANCELLED
    assert retrieved.cancellation_reason == "Customer request"

The test verifies the whole application-level flow (placing an order, cancelling it, retrieving it) without touching a real database, HTTP client, or other infrastructure. It runs in milliseconds and is completely deterministic.
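For readers who want to run the pattern end to end, here is a self-contained sketch. The `Order`, `OrderStatus`, and `OrderService` classes are simplified assumptions, not the course's real implementations. One deliberate choice: this fake stores and returns copies, so a service that forgets to call `save` after mutating an order fails the test, much as it would against a real database.

```python
import copy
import uuid
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, Optional


class OrderStatus(Enum):
    PLACED = "placed"
    CANCELLED = "cancelled"


@dataclass
class Order:
    customer_id: str
    id: str = field(default_factory=lambda: str(uuid.uuid4()))
    status: OrderStatus = OrderStatus.PLACED
    cancellation_reason: Optional[str] = None

    def cancel(self, reason: str) -> None:
        if self.status is OrderStatus.CANCELLED:
            raise ValueError("Order is already cancelled")
        self.status = OrderStatus.CANCELLED
        self.cancellation_reason = reason


class InMemoryOrderRepository:
    def __init__(self) -> None:
        self._store: Dict[str, Order] = {}

    def get(self, order_id: str) -> Optional[Order]:
        # Return a copy so callers cannot mutate stored state by accident.
        return copy.deepcopy(self._store.get(order_id))

    def save(self, order: Order) -> None:
        self._store[order.id] = copy.deepcopy(order)


class OrderService:
    def __init__(self, repo: InMemoryOrderRepository) -> None:
        self.repo = repo

    def place_order(self, customer_id: str) -> Order:
        order = Order(customer_id=customer_id)
        self.repo.save(order)
        return order

    def cancel_order(self, order_id: str, reason: str) -> None:
        order = self.repo.get(order_id)
        if order is None:
            raise LookupError(f"No order with id {order_id}")
        order.cancel(reason)
        self.repo.save(order)  # without this line the test below fails


def test_cancel_order_persists_status_and_reason():
    repo = InMemoryOrderRepository()
    service = OrderService(repo=repo)
    order = service.place_order(customer_id="c-1")

    service.cancel_order(order_id=order.id, reason="Customer request")

    retrieved = repo.get(order.id)
    assert retrieved is not None
    assert retrieved.status is OrderStatus.CANCELLED
    assert retrieved.cancellation_reason == "Customer request"
```

Whether a fake should copy on the way in and out is a judgment call. Copying costs a few microseconds and catches a class of persistence bugs that a reference-sharing fake silently hides.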

This approach mirrors what dependency injection gives you at the framework level: the ability to "provide a stub or other compatible object" in place of the real thing. A fake repository is that compatible object, built fully rather than mocked lazily.

Integration Testing Adapters: Test at the Boundary

Integration tests have a specific purpose: verify that your adapters work correctly against the real external systems they wrap. The key phrase is at the boundary. You're not re-testing domain logic. You're verifying that your SQLAlchemy repository actually persists and retrieves data correctly, that your HTTP client handles rate limiting as expected, that your S3 adapter correctly writes and reads objects.

Integration tests should be narrow: one adapter against one real (or real-ish, via Docker) external system, not the whole stack.

# tests/integration/adapters/repositories/test_postgres_order_repo.py
import pytest

from myapp.adapters.repositories import PostgresOrderRepository
from myapp.domain import Order

# Function-scoped on purpose: every test gets a repository bound to a
# connection that is rolled back afterwards, so rows saved by one test
# cannot change the counts asserted in another.
@pytest.fixture
def postgres_repo(postgres_connection):
    return PostgresOrderRepository(connection=postgres_connection)

def test_save_and_retrieve_order(postgres_repo):
    order = Order.create(customer_id="c-1")
    postgres_repo.save(order)

    retrieved = postgres_repo.get(order.id)

    assert retrieved is not None
    assert retrieved.id == order.id
    assert retrieved.customer_id == "c-1"

def test_find_by_customer_returns_only_matching_orders(postgres_repo):
    order_a = Order.create(customer_id="c-1")
    order_b = Order.create(customer_id="c-2")
    postgres_repo.save(order_a)
    postgres_repo.save(order_b)

    results = postgres_repo.find_by_customer("c-1")

    assert len(results) == 1
    assert results[0].id == order_a.id

A few things worth noticing here:

- The postgres_connection fixture lives in conftest.py and handles the database lifecycle (schema creation, transaction rollback, etc.)

- These tests verify the adapter's behavior, not domain behavior: no order totals, no state machines

- They're in a separate directory (tests/integration/) so you can run them separately from the fast unit suite (pytest tests/unit/)

That separation matters in practice. Your unit tests should finish in under five seconds on any developer's laptop. Your integration tests might take a couple of minutes with real network I/O or database round-trips. Keeping them separate means developers can run the fast suite constantly during development and the full suite before pushing.

The Over-Mocking Trap

Here's one of the sneakiest ways to build a test suite that creates false confidence: mock everything.

# This test is nearly worthless
def test_place_order(mocker):
    mock_repo = mocker.patch("myapp.services.order_repo")
    mock_validator = mocker.patch("myapp.services.validate_order")
    mock_notifier = mocker.patch("myapp.services.send_notification")

    mock_repo.save.return_value = None
    mock_validator.return_value = True

    service = OrderService()
    service.place_order(customer_id="c-1", items=[])

    mock_repo.save.assert_called_once()

What have we actually tested here? That OrderService.place_order calls repo.save. That's genuinely it. The test passes even if the domain logic is completely broken, even if the data being saved is garbage, even if the validator is being called with the wrong arguments. Every collaborator has been replaced with a dumb recording device.

This pathology is one reason the testing community distinguishes between mockists and classicists, a split Martin Fowler describes in his essay "Mocks Aren't Stubs". Classicists prefer real objects and fakes wherever practical; mockists replace most collaborators with mocks and verify the interactions. For application code like this, the classicist approach is usually the safer default, because heavy mocking couples tests tightly to implementation details and gives weak confidence about correctness.

The giveaway sign you've over-mocked: the test passes, but when you run the application manually or run the integration tests, something breaks. The mocks were satisfying expectations that the real implementation never satisfies.
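One way to see this drift in miniature is shown below. A plain `MagicMock` accepts any method call, including one the real class never had, whereas `unittest.mock.create_autospec` builds a double constrained to the real interface. The `OrderRepository` class here is a hypothetical stand-in, and autospec is offered as one possible mitigation, not as a substitute for fakes.

```python
from unittest.mock import MagicMock, create_autospec


class OrderRepository:
    """Stand-in for a real adapter; only the method signatures matter here."""

    def save(self, order) -> None:
        raise NotImplementedError

    def get(self, order_id: str):
        raise NotImplementedError


def test_plain_mock_accepts_a_method_that_does_not_exist():
    repo = MagicMock()
    repo.save_order("o-1")  # the real class has no save_order
    repo.save_order.assert_called_once_with("o-1")  # still passes


def test_autospec_rejects_unknown_methods():
    repo = create_autospec(OrderRepository, instance=True)
    try:
        repo.save_order("o-1")
    except AttributeError:
        return
    raise AssertionError("autospec should not allow save_order")


def test_autospec_rejects_wrong_signatures():
    repo = create_autospec(OrderRepository, instance=True)
    try:
        repo.save()  # missing the required 'order' argument
    except TypeError:
        return
    raise AssertionError("autospec should enforce the real signature")
```

The first test is the false confidence in action: it is green, yet the production call it describes would raise `AttributeError` on the real class.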

A useful heuristic: mock across architectural boundaries, not within them. Mock the HTTP client that calls a third-party API (you don't control that API and don't want tests making real network calls). Don't mock your domain objects, your repository interface, or anything internal to the layer you're testing.
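The heuristic might look like this in practice. The currency-conversion scenario and all names in it (`RateProvider`, `FixedRateProvider`, `Money`, `PriceConverter`) are invented for the example. The only double sits at the edge where a third-party HTTP API would be called; the value object and the conversion logic are real.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Dict, Protocol, Tuple


class RateProvider(Protocol):
    """Port for a third-party exchange-rate API (the architectural boundary)."""

    def rate(self, base: str, quote: str) -> Decimal: ...


class FixedRateProvider:
    """Stub for the boundary: canned rates, no network."""

    def __init__(self, rates: Dict[Tuple[str, str], Decimal]) -> None:
        self._rates = rates

    def rate(self, base: str, quote: str) -> Decimal:
        return self._rates[(base, quote)]


@dataclass(frozen=True)
class Money:
    amount: Decimal
    currency: str


class PriceConverter:
    def __init__(self, rates: RateProvider) -> None:
        self._rates = rates

    def convert(self, money: Money, target: str) -> Money:
        if money.currency == target:
            return money
        rate = self._rates.rate(money.currency, target)
        # Round to two decimal places using Decimal's default rounding mode.
        return Money((money.amount * rate).quantize(Decimal("0.01")), target)


def test_converts_using_rate_from_the_boundary():
    rates = FixedRateProvider({("USD", "EUR"): Decimal("0.90")})
    converter = PriceConverter(rates)

    result = converter.convert(Money(Decimal("10.00"), "USD"), "EUR")

    assert result == Money(Decimal("9.00"), "EUR")
```

Nothing inside the layer was replaced, so a bug in `convert` or in `Money` would surface here, which is what the over-mocked version could not promise.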

graph TD
    A[Application Service Test] --> B{What to mock?}
    B --> C[External I/O boundary: Yes, use stub or fake]
    B --> D[Domain objects: No, use real ones]
    B --> E[Repository: Use InMemory fake]
    B --> F[Other app services: Probably not]
    C --> G[Email sender stub]
    C --> H[Payment gateway stub]
    E --> I[InMemoryOrderRepository]

Architecture-Driven Test Organization

How you organize your tests is itself an architectural decision, and the best approach mirrors your source tree. If your application looks like this:

src/
    myapp/
        domain/
            orders.py
            customers.py
        application/
            order_service.py
            customer_service.py
        adapters/
            repositories/
                postgres_order_repo.py
            http/
                order_api.py

Then your test tree should look like this:

tests/
    unit/
        domain/
            test_orders.py
            test_customers.py
        application/
            test_order_service.py
            test_customer_service.py
    integration/
        adapters/
            repositories/
                test_postgres_order_repo.py
            http/
                test_order_api.py
    e2e/
        test_place_order_flow.py

This structure has practical advantages. Finding the test for a module is mechanical: swap src/myapp/ for tests/unit/ (or tests/integration/ for anything under adapters/) and prepend test_ to the filename. CI pipelines can easily run just tests/unit/ for the fast check and tests/integration/ separately in a slower job. Code review for orders.py naturally includes test_orders.py in the diff.
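The mapping is regular enough to write down as a function. The helper below, `locate_test`, is hypothetical and assumes the layout shown in the two trees, with adapters tested under tests/integration/ and everything else under tests/unit/.

```python
from pathlib import PurePosixPath


def locate_test(source: str, src_root: str = "src/myapp") -> str:
    """Map a source module path to the path of its test module."""
    rel = PurePosixPath(source).relative_to(src_root)
    # Adapters talk to real systems, so their tests live in the slow suite.
    suite = "integration" if rel.parts[0] == "adapters" else "unit"
    return str(PurePosixPath("tests") / suite / rel.with_name(f"test_{rel.name}"))


def test_mapping_matches_the_directory_trees():
    assert locate_test("src/myapp/domain/orders.py") == "tests/unit/domain/test_orders.py"
    assert (
        locate_test("src/myapp/adapters/repositories/postgres_order_repo.py")
        == "tests/integration/adapters/repositories/test_postgres_order_repo.py"
    )
```

Nobody needs this function in a real project; the point is that the convention is simple enough to fit in a handful of lines, which is what makes it easy to follow by hand.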

It also acts as a forcing function for architectural clarity. If you can't find a natural home for a test, it often signals that the code under test belongs to multiple concerns and needs decomposition. The test structure reveals the system's shape.

One practical tip on conftest.py: pytest auto-discovers these files and makes their fixtures available to all tests in the same directory and below. Put shared fixtures at the appropriate level—database fixtures in tests/integration/conftest.py, domain helpers in tests/unit/domain/conftest.py. Don't cram everything into a single root-level conftest.py or it becomes a sprawling fixture dump that nobody wants to dig through.

Connecting It Back: Why Architecture Enables This

Step back and notice the pattern running through everything we've covered in this section.

Every testing technique that actually works—domain tests with no infrastructure, application service tests with fake repositories, integration tests that are narrow and targeted—works because of earlier architectural decisions. Protocols make fakes possible. Dependency injection makes swapping collaborators trivial. Layered architecture means you can test each layer without dragging in the layers above or below.

The testdriven.io guidance on clean code states it directly: clean code is "easier to maintain, scale, debug, and refactor." Testing is the mechanism that makes those qualities observable and verifiable. Without tests, "easy to refactor" is just wishful thinking. With tests, it becomes measurable: can I change this code and stay confident that nothing broke?

Clean architecture doesn't just make testing easier—it makes the right kinds of tests the natural, obvious choice. When your domain is pure Python objects with no infrastructure tangled in, the first test you write is a unit test. When your adapters are thin and well-defined, integration testing them feels lightweight. When your application services accept fakes as easily as real implementations, you don't need to stand up a whole environment to verify business logic.

That's the real win. Not a test suite that impresses people. A test suite that gives you the confidence to ship on a Friday afternoon.
