Cooperation and Competition in Game Design

Cooperation, Competition, and Everything In Between

You've now seen how individual rational choices can trap us in bad outcomes — the Prisoner's Dilemma. But here's what made that scenario work: it was a two-player, one-shot interaction. The structure was simple and severe, which is exactly why it was such a clean illustration of Nash Equilibrium.

But almost no interesting game stops there. The moment you add a third player to the Prisoner's Dilemma setup — or play the game more than once — the whole dynamic shifts. Suddenly alliances form. Threats get made. Someone decides to help their neighbor beat the leader even though it doesn't directly help them. The clean, two-player world we just explored starts to look like a very special case rather than the default.

This section is about what happens when you move beyond that special case. We're going to look at the full spectrum from pure competition (where one player's gain is literally another player's loss) through the messy middle ground (where cooperation and competition tangle together) all the way to full cooperation (where players genuinely win or lose together). At every point along that spectrum, we'll ask: what equilibria does the structure create? And crucially — are those equilibria actually fun?

The Shadow of the Future: Why Repetition Changes Everything

Here's something that trips up a lot of people when they first learn about the Prisoner's Dilemma: in a one-shot game, defection is the dominant strategy even though mutual cooperation would leave both players better off.

But play the same game over and over, with no fixed final round in sight, and everything changes.

This is one of the most profound results in game theory, and it has direct implications for how you design multiplayer games. The key concept is called the shadow of the future — the idea that when players expect to interact again, that anticipated future shapes their behavior in the present.

Think about it from a purely self-interested perspective. If I betray you today, you might retaliate tomorrow. And tomorrow. And the day after. If I'm going to be playing against you in ten more rounds, the cost of your retaliation probably outweighs whatever I gained by defecting. Suddenly, cooperation starts to look like the smart play — not because I'm altruistic, but because I'm calculating.
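To put rough numbers on that calculation, here is a minimal sketch. The payoff values (5, 3, 1, 0) are a commonly used illustration rather than anything fixed by this chapter, and the opponent is assumed to retaliate by defecting for the rest of the game once betrayed, which is harsher than many real players would be.

```python
# Hypothetical per-round payoffs, chosen only to respect the usual
# Prisoner's Dilemma ordering: TEMPTATION > REWARD > PUNISHMENT > SUCKER.
TEMPTATION = 5   # I defect while you cooperate
REWARD = 3       # we both cooperate
PUNISHMENT = 1   # we both defect
SUCKER = 0       # I cooperate while you defect (unused here, shown for completeness)


def total_if_always_cooperate(rounds: int) -> int:
    """My total score when both of us cooperate in every round."""
    return REWARD * rounds


def total_if_defect_first(rounds: int) -> int:
    """My total when I defect in round 1 and we both defect in every later round.

    Assumes the opponent never forgives the betrayal.
    """
    if rounds < 1:
        return 0
    return TEMPTATION + PUNISHMENT * (rounds - 1)


for n in (1, 2, 3, 10):
    print(n, total_if_always_cooperate(n), total_if_defect_first(n))
```

Under these assumed numbers, betrayal pays in a single round, breaks even at two rounds, and loses from three rounds onward. Over ten rounds the gap is 30 points against 14.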

Robert Axelrod, a political scientist at the University of Michigan, ran a famous pair of computer tournaments around 1980 to find out which strategies do well in repeated Prisoner's Dilemmas. He invited experts to submit strategies, then ran every strategy against every other strategy over hundreds of rounds. The results were astonishing: the winning strategy wasn't some elaborate algorithmic scheme. It was a four-line program called Tit for Tat:

- Start by cooperating

- On every subsequent round, do whatever your opponent did last round

- That's it

Tit for Tat is nice (starts with cooperation), retaliatory (punishes defection immediately), forgiving (returns to cooperation as soon as the opponent does), and clear (easy for the opponent to recognize and predict). The genius of Axelrod's finding is that these properties matter precisely because players need to be able to read each other's strategies in order to coordinate on cooperation.
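The rules above are short enough to write out directly. What follows is a minimal sketch of a repeated Prisoner's Dilemma match, not a reconstruction of Axelrod's tournament code. The payoff table, the `always_defect` opponent, and the function names are all assumptions made for illustration.

```python
from typing import Callable, Dict, List, Tuple

Move = str  # "C" = cooperate, "D" = defect
Strategy = Callable[[List[Move]], Move]  # receives the opponent's past moves

# Hypothetical payoffs as (row player, column player).
PAYOFFS: Dict[Tuple[Move, Move], Tuple[int, int]] = {
    ("C", "C"): (3, 3),
    ("C", "D"): (0, 5),
    ("D", "C"): (5, 0),
    ("D", "D"): (1, 1),
}


def tit_for_tat(opponent_history: List[Move]) -> Move:
    """Cooperate first, then copy whatever the opponent did last round."""
    return opponent_history[-1] if opponent_history else "C"


def always_defect(opponent_history: List[Move]) -> Move:
    """A deliberately hostile opponent, used here only for comparison."""
    return "D"


def play_match(a: Strategy, b: Strategy, rounds: int = 200) -> Tuple[int, int]:
    """Play `rounds` simultaneous rounds and return both total scores."""
    moves_a: List[Move] = []
    moves_b: List[Move] = []
    score_a = score_b = 0
    for _ in range(rounds):
        # Each strategy sees only the other side's history, never the current move.
        move_a = a(moves_b)
        move_b = b(moves_a)
        gain_a, gain_b = PAYOFFS[(move_a, move_b)]
        score_a += gain_a
        score_b += gain_b
        moves_a.append(move_a)
        moves_b.append(move_b)
    return score_a, score_b


print(play_match(tit_for_tat, tit_for_tat, rounds=10))      # (30, 30)
print(play_match(tit_for_tat, always_defect, rounds=10))    # (9, 14)
print(play_match(always_defect, always_defect, rounds=10))  # (10, 10)
```

Notice that Tit for Tat never outscores the opponent sitting across from it. In this sketch it loses to the defector 9 to 14. It does well in a tournament because pairs that manage to cooperate (30 each) pile up far more points than pairs stuck in mutual defection (10 each).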

The shadow of the future explains a lot of behavior we see in actual games. In a long campaign of Dungeons & Dragons, players develop reputations and relationship histories that shape how they interact. In a game of Diplomacy that's going into its third hour, your history of deals kept and broken is your most important strategic asset. In a game of Terraforming Mars, you might let a competitor take a suboptimal card simply because you want to maintain goodwill for the resource trade you're planning next turn.

Design implication: The length of your game and whether players expect to play together again fundamentally changes player behavior. A short, one-shot game encourages aggressive play. A long, repeated-interaction game encourages the emergence of social norms, alliances, and reputation management. Neither is better — they're different experiences. Choose consciously.

Trust and Reputation: The Social Technology of Cooperation

The shadow of the future works through a mechanism: reputation. If I know you'll cooperate as long as I cooperate, and defect the moment I defect, your reputation for playing Tit for Tat is exactly what keeps me in line.

In real games, reputation becomes extraordinarily complex. Players develop reads on each other: "She always bluffs when she has a strong hand." "He's willing to take a bad deal to maintain an alliance." "They never attack unless they're sure they can win." These mental models that players build of each other are a form of social technology — they allow coordination without explicit communication.

This is why seasoned board game groups often play very differently from groups of strangers. The strangers don't have reputation information. They're effectively playing one-shot games, which pushes them toward defensive, individualistic strategies. The regular group has years of accumulated trust data, which allows more nuanced and interesting play.

Mixed-Motive Games: Where Almost Everything Interesting Happens

Here's the design space that separates truly compelling games from boring ones: the mixed-motive situation.

A mixed-motive game is one where players have some aligned interests and some conflicting interests simultaneously. The name comes from the idea that your motivations are genuinely mixed — you want to cooperate and compete, and figuring out the right balance is the interesting strategic challenge.

In game theory terms, these are variable-sum games where the optimal outcomes depend on whether players manage to coordinate at all. Think about a labor-management negotiation: both sides would rather reach a deal than have a strike, but within that deal, each side wants terms that favor them. They're simultaneously cooperating (trying to reach any agreement) and competing (trying to get a better agreement for themselves).
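One way to make "variable-sum" concrete is to add up both players' payoffs in every outcome and see whether the total ever changes. The sketch below does that for two tiny games. Both payoff tables are invented for illustration: matching pennies stands in for pure competition, and a toy version of the negotiation above uses made-up numbers in which a strike (both sides playing "hard") leaves everyone with nothing.

```python
from typing import Dict, Tuple

Outcome = Tuple[str, str]                  # (player 1's choice, player 2's choice)
Payoffs = Dict[Outcome, Tuple[int, int]]   # (player 1's payoff, player 2's payoff)


def is_constant_sum(payoffs: Payoffs) -> bool:
    """True when the two payoffs add up to the same total in every outcome.

    A constant total means one player's gain is always the other's loss.
    """
    totals = {p1 + p2 for p1, p2 in payoffs.values()}
    return len(totals) == 1


# Pure competition: whatever one player wins, the other loses.
MATCHING_PENNIES: Payoffs = {
    ("H", "H"): (1, -1),
    ("H", "T"): (-1, 1),
    ("T", "H"): (-1, 1),
    ("T", "T"): (1, -1),
}

# Mixed motive (hypothetical numbers): any deal beats a strike,
# but each side prefers the deal where it held firm.
NEGOTIATION: Payoffs = {
    ("soft", "soft"): (3, 3),
    ("soft", "hard"): (2, 4),
    ("hard", "soft"): (4, 2),
    ("hard", "hard"): (0, 0),  # the strike
}

print(is_constant_sum(MATCHING_PENNIES))  # True
print(is_constant_sum(NEGOTIATION))       # False
```

In the negotiation table the total is 6 for every deal and 0 for the strike. That gap is the shared interest, and the split between 2 and 4 is the conflict.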

Sound familiar? This is Settlers of Catan every single game. You need to trade resources with other players to build efficiently, so you need cooperation. But you're also racing to 10 victory points, so you're competing. The magic of Catan is that winning without trading is very hard, yet every trade you make also potentially helps your opponent. Every interaction is simultaneously a cooperative and competitive act.

This is also Ticket to Ride, where laying track is competitive (you might block someone's route), but the game only produces interesting decisions because you're all building toward destinations the other players can see and potentially route around. It's 7 Wonders, where the card you draft affects not just you but the player you pass the rest of the pack to. It's almost every Euro game worth playing.

The insight for designers: pure competition is actually easier to design than mixed-motive tension, and it produces shallower experiences. In a pure competition, you optimize. In a mixed-motive game, every decision requires reading your opponents, evaluating relationships, and making judgment calls about when to cooperate and when to defect. That's where the richness comes from.

The Multiplayer Problem: When Three's a Crowd (of Chaos)

Everything gets more complicated with more than two players. Let me illustrate with a simple scenario.

Imagine three players — Alice, Bob, and Charlie — playing a game where the leader wins unless the other two gang up on them. Alice is currently in the lead. In a two-player game, Bob would simply try to beat Alice directly. But with Charlie in the picture, Bob has options. He can:

- Attack Alice directly

- Try to convince Charlie to attack Alice while Bob builds his own position

- Let Charlie attack Alice while Bob takes a different route to victory

- Attack Charlie to eliminate a competitor, hoping Alice doesn't win before he does

None of these is obviously correct. The "right" answer depends on what Bob believes Charlie will do, what Charlie believes Bob will do, what both believe Alice will do in response, and so on. This is the recursive, interdependent decision-making that game theory was designed to analyze — but with three players, it becomes exponentially more complex.

Multiplayer games introduce several dynamics that two-player games don't have:

Kingmaking: This is the nightmare scenario for game designers. Kingmaking happens when a player who cannot win for themselves gets to decide who wins by choosing whom to attack. In the endgame of many multiplayer games, the second-place player's choices effectively crown the winner. Some designers consider this a fatal flaw. Others (including Diplomacy's designer) consider it an unavoidable and even interesting feature, because it makes every player's choices matter until the end.

The Bully Problem / Leader Gets Attacked: In most multiplayer games, the leading player gets dog-piled by everyone else. This creates a feedback mechanism (we'll discuss feedback loops extensively in the Systems Thinking section) that actually compresses the score — leaders get attacked, so leads shrink. This can prevent runaway leader situations, but it can also feel punishing in a way that discourages players from taking early risks.
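To see the compression effect in numbers, here is a deliberately crude simulation. Every rule in it is an assumption made for illustration: all players earn the same income each round, and every non-leader then knocks a fixed number of points off whoever was leading at the start of the round.

```python
from typing import List


def simulate_dogpile(scores: List[int], income: int, attack: int, rounds: int) -> List[int]:
    """Return the gap between first and last place before play and after each round."""
    scores = list(scores)  # work on a copy so the caller's list is untouched
    spreads = [max(scores) - min(scores)]
    for _ in range(rounds):
        # The leader is picked before income is added; ties go to the lowest index.
        leader = max(range(len(scores)), key=lambda i: scores[i])
        for i in range(len(scores)):
            scores[i] += income
        # Every other player takes `attack` points off the leader.
        scores[leader] -= attack * (len(scores) - 1)
        spreads.append(max(scores) - min(scores))
    return spreads


print(simulate_dogpile([10, 4, 2], income=2, attack=1, rounds=3))  # [8, 6, 4, 2]
```

With these made-up numbers the leader's income is cancelled exactly by the attacks, so the rest of the table closes the gap by two points a round. Raising `attack` makes the catch-up faster, and past a certain point it makes leading a liability, which is the punishing feeling described above.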

Alliance Formation: When players can communicate (even if informally), alliances form. Two players can gang up on a third. Alliances shift. This is where multiplayer games develop real political texture, but it also raises fairness questions. If Alice and Bob form an alliance against Charlie, is that a game design problem, a social problem, or just... the game working as intended?

Hidden Information and Signaling: In multiplayer games with hidden information, players have to signal their intentions without revealing too much. Choosing to attack a weaker player rather than the leader sends a message. Passing on a powerful card sends a message. Every action is simultaneously a strategic choice and a communication.

```mermaid
graph TD
    A["Player 1 (Leader)"]
    B["Player 2"]
    C["Player 3"]
    D["Player 4"]
    B -->|"Attacks leader"| A
    C -->|"Forms alliance with P2"| B
    D -->|"Builds quietly"| D
    A -->|"Responds/Defends"| B
    C -.->|"Will betray P2 at the right moment"| A
```

Asymmetric Roles: Giving Players Different Toys

One powerful solution to the "everyone does the same thing" sameness of many multiplayer games is asymmetric player roles — giving different players different abilities, goals, or resources.

Think about Root, the asymmetric woodland warfare game. The Cats play like a resource-efficient engine builder. The Eagles play like a political area-control game. The Woodland Alliance plays like an insurgency, spreading sympathy tokens and igniting rebellion. The Vagabond plays like a character-driven RPG within the board game. All four factions are fighting on the same board — but they're essentially playing different games.

This asymmetry creates several interesting effects:

Differentiated strategic complexity: Different roles have different skill ceilings, meaning experienced players can take the harder roles while newer players take simpler ones. This is great for mixed-experience groups.

Natural counter-play: If each faction has genuine strengths and weaknesses, the interesting question becomes "which faction matches up well against which others?" This is the design space of competitive games like StarCraft, where the matchup matrix (Terran vs. Zerg, Protoss vs. Terran, etc.) is as much a design object as any individual unit.

Narrative differentiation: When players have different roles, the game naturally generates different stories from the same session. The Vagabond's story of adventure and the Cats' story of industrial expansion are happening in parallel — and occasionally intersecting dramatically.

The balance challenge: Asymmetric roles are extraordinarily hard to balance, because you're not balancing a single design space — you're balancing many different design spaces against each other. The question "is this faction too strong?" is never just about that faction; it's about how that faction performs against all other factions, across all game states, with players of varying skill. This is why games like Root require years of play-testing and iteration, and why the competitive community still debates faction balance.
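A matchup matrix is easy to treat as a literal data structure. The sketch below uses three made-up factions and invented head-to-head win rates (not data from Root, StarCraft, or any real game) to show why "is this faction too strong?" can't be answered by looking at one matchup.

```python
from statistics import mean
from typing import Dict, List

Matrix = Dict[str, Dict[str, float]]

# Hypothetical data: WIN_RATE[a][b] is how often faction a beats faction b.
WIN_RATE: Matrix = {
    "Red":   {"Green": 0.60, "Blue": 0.45},
    "Green": {"Red": 0.40, "Blue": 0.65},
    "Blue":  {"Red": 0.55, "Green": 0.35},
}


def is_consistent(matrix: Matrix, tol: float = 1e-9) -> bool:
    """Each pair of mirrored entries should add up to 1 (no draws assumed)."""
    return all(
        abs(matrix[a][b] + matrix[b][a] - 1.0) <= tol
        for a in matrix
        for b in matrix[a]
    )


def average_win_rates(matrix: Matrix) -> Dict[str, float]:
    """Average each faction's win rate across all of its matchups."""
    return {faction: mean(row.values()) for faction, row in matrix.items()}


def outliers(matrix: Matrix, band: float = 0.03) -> List[str]:
    """Factions whose average win rate is more than `band` away from 50%."""
    return [f for f, rate in average_win_rates(matrix).items() if abs(rate - 0.5) > band]


print(average_win_rates(WIN_RATE))
print(outliers(WIN_RATE))
```

In this invented table every faction has one good matchup and one bad one, yet Blue still averages about 45% and gets flagged. A real balance pass would also need to account for skill level and game state, which this sketch ignores entirely.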

The key design insight: asymmetric roles convert the question "how well did I optimize?" into "how well did I read my opponents and adapt?" That's a much richer question.

Case Study: Diplomacy, the Most Psychologically Interesting Game Ever Made

If you want to understand everything we've been talking about — mixed motives, reputation, the shadow of the future, alliance formation, asymmetric information, kingmaking — there is no better case study than Diplomacy.

Designed by Allan Calhamer and published in 1959, Diplomacy puts 7 players in control of the major European powers before World War I: England, France, Germany, Austria-Hungary, Italy, Russia, and Turkey. The goal is to control 18 of the 34 supply centers on the map. Militarily, all units are equal — there are no dice, no special powers, no technological advantages. Every unit in the game has exactly the same attack and defense value.

The entire game is decided by negotiation.

Here's how a typical turn works: There's a negotiation phase, usually 15-30 minutes, where players can talk to anyone privately or in groups. Then simultaneously, all players submit written orders. Then all orders resolve simultaneously. Then the negotiation phase begins again.

The catch: no agreements are binding. There is no mechanism in the game to enforce any promise you make. If you promise France that you'll support their attack on Germany, and then secretly order your units to attack France instead, the game literally cannot stop you. Your word is worth exactly what your reputation makes it worth — and nothing else.
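Here's a tiny sketch of why a promised support matters so much when every unit is equally strong. It models a single attack on a single province and nothing else. Real Diplomacy adjudication also handles cut supports, convoys, and several moves into the same space, and the function name and return strings here are invented for illustration.

```python
def resolve_attack(attack_supports: int, defense_supports: int = 0) -> str:
    """Resolve one attack against one defending unit.

    Every unit counts as strength 1, and each supporting unit adds 1.
    The attacker needs strictly more strength than the defender to dislodge it.
    """
    attack_strength = 1 + attack_supports
    defense_strength = 1 + defense_supports
    if attack_strength > defense_strength:
        return "attack succeeds"
    return "standoff"


# France attacks a German unit, counting on the support you promised.
print(resolve_attack(attack_supports=1))  # promise kept: attack succeeds
print(resolve_attack(attack_supports=0))  # promise broken: standoff
```

One withheld order is the whole difference between a breakthrough and a wasted turn, and the rules do nothing to make you write the order you promised.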

This creates the richest possible laboratory for everything game theory studies. Repeated game dynamics are central, because you'll need cooperation from these same players next turn. Reputation is your most valuable asset, because without the ability to make binding agreements, players can only rely on track records. The shadow of the future is always present — betraying an ally now poisons your ability to form alliances for the rest of the game.

But here's what makes Diplomacy truly special: the game is designed so that no player can win alone. The supply center math is structured so that you always need alliances to make progress. You genuinely cannot reach 18 centers from any starting position by attacking everyone simultaneously. You need friends. And the cruel irony is that anyone who gets close to winning needs to be betrayed by their current allies, because a solo win by any player means everyone else loses.

The game forces you to:

- Make alliances you know you'll eventually have to break

- Decide when to break alliances you currently benefit from

- Trust people who are rationally incentivized to betray you at some point

- Read whether someone is telling you the truth when the only thing separating truth from lies is your read of their character

It's no wonder that Diplomacy has been used in diplomatic training programs, social psychology research, and MBA programs studying negotiation. Henry Kissinger reportedly called it his favorite game. The psychological complexity that emerges from these simple mechanics (just a map, some tokens, and the instruction "no binding agreements") is a masterclass in how the right structural constraints generate emergent complexity.

What Diplomacy teaches designers: You don't need complex mechanics to create deep strategic play. You need the right structural constraints that force players into genuinely interesting dilemmas. The most interesting dilemmas involve situations where the rational choice and the ethical choice and the socially beneficial choice are all different — exactly what the Prisoner's Dilemma structure creates.

Cooperative Games: Everyone Wins Together (Or Nobody Does)

At the far end of the spectrum from pure competition sits the fully cooperative game: one where all players win together or lose together, competing against the game itself rather than each other.

The modern cooperative game genre was arguably kicked off by Pandemic (2008), where players work together to cure four diseases before they spiral out of control. Everyone wins if all diseases are cured. Everyone loses if any of several defeat conditions triggers. There's no competitive element between players at all.

Cooperative games solve several persistent problems with competitive multiplayer:

They eliminate kingmaking. If nobody is competing against each other, there's no possibility of one player's choices unfairly deciding who wins.

They scale more smoothly. Adding a player to a cooperative game doesn't add a complex new competitive relationship. It adds a collaborator, which can make the problem more manageable (more hands on deck) or harder (more to coordinate), but either way the relationships between players stay the same.

They work better for mixed-experience groups. An experienced player can help a newer player without "throwing" their own game. They're both working toward the same outcome.

But cooperative games introduce their own problems, most notably the alpha player problem: the situation where one experienced or dominant player effectively makes decisions for everyone. "No no, move here. No, you should use that card. No wait, actually—" The game stops being cooperative and becomes one person directing the others. This isn't a player behavior problem; it's a design problem. The game failed to create enough individual information or decision space to prevent a single player from encompassing the whole picture.

Solutions to the alpha player problem include:

Hidden information: If each player has cards or information only they can see, the alpha player can't optimize for them. Hanabi (a card game where you hold your cards facing away from yourself) is built almost entirely around this mechanic.

Time pressure: Real-time cooperative games like Pandemic: Rapid Response or Space Alert create cognitive load that makes it impossible for one person to direct everyone.

Individual objectives: Semi-cooperative hybrids like Dead of Winter give players shared goals AND secret personal objectives, creating mixed motives even within a cooperative framework.

Traitor mechanics: Games like Battlestar Galactica: The Board Game or The Resistance put a (possibly) defecting player in the midst of the cooperators, reintroducing competitive tension and uncertainty.

Choosing the Right Competitive Structure: A Design Decision Framework

Here's the practical question: when you're designing your own game, how do you decide where on this spectrum to land?

Start with the social experience you want to create, and work backward to the mechanics.

Full competition is the right choice when you want a game that's clean, decisive, and where skill differences should be clearly revealed. Pure competition means nothing muddies the result — the better player (or luckier player, if you want randomness) wins. Chess, Go, and most abstract strategy games live here. The downside: full competition creates losers who know they lost because they weren't as good. That's fine for competitive contexts; it's rough for family game night.

Cooperative is right when you want the social experience of working together — when the joy is in the problem-solving process and the shared narrative of the attempt. Cooperative games are fantastic for onboarding new players, for groups that include kids or mixed experience levels, and for times when people really don't want to "beat" each other. The design challenge is the alpha problem and (less intuitively) the fact that cooperative games can feel too similar from session to session if the randomness isn't calibrated well.

Mixed-motive is right for most situations where you want a game that generates interesting table talk, shifting alliances, and a sense of drama. The negotiation, the betrayals, the "I can't believe you just did that" moments — all of this comes from mixed-motive structure. This is the hardest to design well, but it produces the richest social experience.

Semi-cooperative (hidden traitor or individual objectives within a cooperative framework) is right when you want the social benefits of cooperation but worry about the alpha player problem, and when the group enjoys a moderate level of interpersonal tension.

Here's a rough decision matrix:

| I want players to feel... | Competitive Structure |
|---|---|
| "I earned this victory" | Pure competition |
| "We solved it together" | Full cooperation |
| "I can't trust anyone" | Semi-cooperative/traitor |
| "Everything is a negotiation" | Mixed-motive |
| "I'm playing a different game than you" | Asymmetric roles |

The honest truth is that most experienced designers end up in the mixed-motive space not because they planned to, but because they found that pure competition felt flat and pure cooperation felt too easily solved. Mixed-motive games are messier to design, but they're messier to play in exactly the right way — the kind of messy that generates stories.

The Bigger Picture

Step back for a moment and look at what we've covered. We started with the Prisoner's Dilemma: a two-player, one-shot scenario where each player's individually rational strategy leaves both worse off than mutual cooperation would. And we've ended up at games like Diplomacy, where seven players navigate an endlessly complex web of cooperation and competition, where the "rational strategy" is almost impossible to calculate because it depends on social reads, historical trust, and the anticipation of future interactions.

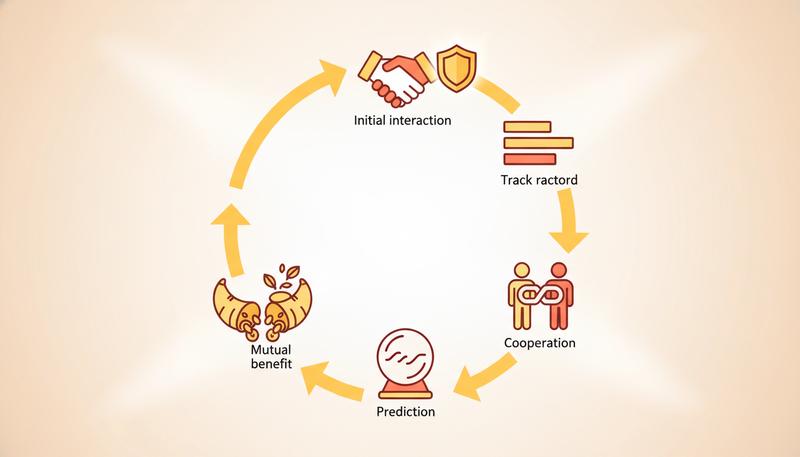

The path from one to the other was: repetition creates reputation, reputation enables trust, trust enables cooperation, and cooperation creates the possibility of win-win outcomes that pure competition destroys.

This is the heart of what makes multiplayer games fascinating, and it's also — not coincidentally — a decent model for how human societies function. We've built legal systems, contracts, and social norms precisely to solve the problems that Diplomacy explores through gameplay: how do you make cooperation stable when individuals are incentivized to defect?

Game theory has been used to understand coalition formation, negotiation dynamics, and voting systems — all of which are fundamentally about the same question our games are asking. What happens when you have multiple players with mixed interests, the possibility of cooperation, and no guarantee that anyone will keep their promises?

Great games don't answer that question. They let players live inside it for two hours and come out the other side with new intuitions about how people work.

That's not nothing. That's actually quite a lot.

Next up: we're going to zoom in from the big picture of competitive structure to the micro level — the individual mechanics that make a game feel like a game at all. What's a mechanic, how do mechanics create meaning, and what separates a mechanic that works from one that creates a footnote in design postmortems? Let's find out.
