How Game Theory Helps You Make Better Decisions

Here's a confession: "game theory" is one of the most misleadingly named fields in all of human knowledge. When most people hear it, they picture someone hunched over a chessboard, or maybe a movie villain explaining why he always wins. What they don't picture is a mathematician trying to figure out how nations should set tariffs, a biologist modeling why peacocks grow absurdly impractical tails, or two prisoners in separate interrogation rooms deciding whether to rat each other out. And yet these strategic situations are exactly where game design lives. Understanding them isn't a detour from making better games; it's the foundation.

Game theory is the science of strategic thinking: how rational agents make decisions when their outcomes depend not just on their own choices, but on what everyone else decides to do. It's the study of interdependence. And once you see it, you'll find it everywhere: in negotiating a salary raise, in how animals compete for food, in why traffic jams form even when every driver is individually trying to go faster, and, most relevant for us, in why players in games do exactly what they do. This section starts with the intellectual history: where game theory came from and why a mathematician became obsessed with poker. By the end of this section and the next, you'll have the vocabulary to recognize when a game creates genuinely hard choices for players, and why those choices matter.

But let's start at the beginning.

The Origin Story: Von Neumann, Morgenstern, and the Poker Question

In the 1920s and 1930s, a mathematician named John von Neumann became fixated on a question that most academics would have dismissed as frivolous: how do you win at poker? Not through luck — he knew that wouldn't work. How do you think strategically about a game where your opponent knows you're trying to beat them, and they're trying to beat you, and you both know that you both know that?

This question might seem like an odd place to plant the flag for a serious intellectual field. Why not chess? Chess was already the prestige game of strategy — kings and generals liked to think they played it well. And chess had been studied mathematically for decades.

The answer is that chess, for all its complexity, is a game of perfect information. Every piece is on the board, visible to both players. You know every move that has ever been made. There are no secrets. When you lose at chess, it's because your opponent out-thought you — not because they knew something you didn't.

Poker is completely different. In poker, you cannot see your opponent's cards. You don't know what they're holding. You have to make decisions under uncertainty — using incomplete information, reading behavior, and calculating probabilities. And critically, both players are doing this simultaneously, each trying to infer what the other is hiding while actively concealing their own hand. Chess asks: "What is the best move?" Poker asks: "What is the best move given that I don't know everything, and my opponent is actively trying to deceive me?"

Von Neumann recognized that the poker question was the real question — because most of life looks much more like poker than chess. You rarely know everything. Your opponent is often hiding something. And yet you still have to choose.

This insight led him to the minimax theorem, which he proved in 1928. In any two-player zero-sum game with finitely many strategies, each player has a strategy, often a randomized one, that minimizes how badly the opponent's best response can hurt them, and the most one player can guarantee themselves is exactly the least the other can hold them to. Applied to poker, this means bluffing isn't a psychological trick; it's a way of making your behavior unpredictable enough that your opponent can't exploit it. A minimax poker player bluffs at a mathematically calculated frequency so that the opponent gains nothing on average by calling instead of folding, or by folding instead of calling: there is no pattern to exploit. It was a landmark result, and it kicked off decades of work that would eventually reshape economics, military strategy, evolutionary biology, and political science.
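To make the "calculated frequency" idea concrete, here is a minimal Python sketch of the standard textbook toy model of a final betting round. This is an assumption, not von Neumann's own poker model: the bettor holds either an unbeatable hand or nothing, and the caller's hand beats only bluffs. The function names and the pot and bet sizes are hypothetical. The bettor bluffs just often enough that calling and folding earn the caller the same on average, and the caller calls just often enough that a bluff breaks even.

```python
from fractions import Fraction


def bluff_share(pot: Fraction, bet: Fraction) -> Fraction:
    """Share of bets that should be bluffs so the caller is indifferent.

    Calling risks `bet` to win `pot + bet`; setting the caller's expected
    gain q * (pot + bet) - (1 - q) * bet to zero gives q = bet / (pot + 2 * bet).
    """
    return bet / (pot + 2 * bet)


def call_frequency(pot: Fraction, bet: Fraction) -> Fraction:
    """How often the caller should call so a pure bluff breaks even.

    A bluff risks `bet` to win `pot`: (1 - c) * pot - c * bet = 0 gives
    c = pot / (pot + bet).
    """
    return pot / (pot + bet)


def caller_ev(q: Fraction, pot: Fraction, bet: Fraction) -> Fraction:
    """Caller's average gain from calling (folding is worth 0)
    when a share q of the opponent's bets are bluffs."""
    return q * (pot + bet) - (1 - q) * bet


# Hypothetical numbers: a pot-sized bet of 100 into a pot of 100.
pot, bet = Fraction(100), Fraction(100)
q = bluff_share(pot, bet)
print(q)                          # 1/3
print(call_frequency(pot, bet))   # 1/2
print(caller_ev(q, pot, bet))     # 0
```

With a pot-sized bet, one bet in three is a bluff and the caller calls half the time; at those frequencies neither player can improve by changing only their own behavior, which is the minimax idea in miniature.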

Then, in 1944, von Neumann teamed up with economist Oskar Morgenstern to publish Theory of Games and Economic Behavior — the book that formally launched game theory as a discipline. Von Neumann brought the mathematics; Morgenstern brought the economic intuition and the relentless argument that these ideas needed to escape pure math and grapple with the full messy complexity of human decision-making. Together they established the foundational framework that every game theorist since has built on.

And then in 1950, a graduate student named John Nash turned everything up a notch.

Nash was 21 years old when he completed his doctoral dissertation at Princeton in 1950, a short work of roughly 28 pages that extended von Neumann and Morgenstern's ideas far beyond two-player, zero-sum situations. He showed that every game with a finite number of players and strategies, including messy, multi-player, mixed-interest ones, has at least one stable outcome, provided players are allowed to randomize their choices: a point where no player can do better by changing their strategy unilaterally. We now call this the Nash Equilibrium, and it's one of the most powerful concepts in social science. (Nash's life was dramatized in the film A Beautiful Mind. As this accessible explanation of the Nash Equilibrium notes, though, the film's famous bar scene actually illustrates the concept incorrectly. Typical Hollywood.)
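Nash's stability condition can be checked mechanically for small games. The Python sketch below (the function name and both example games are illustrative, with made-up payoffs) tests every pair of pure strategies and keeps those where neither player gains by switching alone. It only finds pure-strategy equilibria: Nash's existence result relies on mixed (randomized) strategies, and a game like Matching Pennies has no equilibrium in pure strategies at all.

```python
from itertools import product

# A two-player game: (row_action, col_action) -> (row_payoff, col_payoff).
Game = dict[tuple[str, str], tuple[int, int]]


def pure_nash_equilibria(game: Game) -> list[tuple[str, str]]:
    """Return every pure-strategy profile where neither player can gain
    by changing only their own action."""
    rows = sorted({r for r, _ in game})
    cols = sorted({c for _, c in game})
    found = []
    for r, c in product(rows, cols):
        row_pay, col_pay = game[(r, c)]
        # No other row does better for the row player, holding the column fixed.
        row_stable = all(game[(r2, c)][0] <= row_pay for r2 in rows)
        # No other column does better for the column player, holding the row fixed.
        col_stable = all(game[(r, c2)][1] <= col_pay for c2 in cols)
        if row_stable and col_stable:
            found.append((r, c))
    return found


# Illustrative payoffs for two textbook games.
stag_hunt: Game = {
    ("Stag", "Stag"): (4, 4), ("Stag", "Hare"): (0, 3),
    ("Hare", "Stag"): (3, 0), ("Hare", "Hare"): (3, 3),
}
matching_pennies: Game = {
    ("Heads", "Heads"): (1, -1), ("Heads", "Tails"): (-1, 1),
    ("Tails", "Heads"): (-1, 1), ("Tails", "Tails"): (1, -1),
}

print(pure_nash_equilibria(stag_hunt))         # [('Hare', 'Hare'), ('Stag', 'Stag')]
print(pure_nash_equilibria(matching_pennies))  # []
```

The Stag Hunt has two stable outcomes, one clearly better for both players than the other, which is a useful reminder that "equilibrium" means stable, not best.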

We'll spend all of the next section on Nash Equilibria. For now, just know that these three ideas — von Neumann's minimax, his and Morgenstern's formal framework, and Nash's equilibrium — are the pillars the whole field rests on.

What Game Theory Actually Studies

Strip away the technical vocabulary and game theory is asking one question: when multiple people are making decisions that affect each other, what will they choose, and why?

According to Britannica's definition, game theory provides "tools for analyzing situations in which parties, called players, make decisions that are interdependent. This interdependence causes each player to consider the other player's possible decisions, or strategies, in formulating strategy."

That word — interdependence — is the key. If you're choosing what to eat for lunch and your choice has no effect on anyone else, that's just a personal optimization problem. Not interesting to game theory. But the moment your choice starts depending on what others do, and their choices start depending on yours, you're in game-theoretic territory.

Notice that game theory covers both the chess case (perfect information, no secrets) and the poker case (imperfect information, uncertainty everywhere). In fact, most of the interesting territory is in the poker zone — decisions under uncertainty, where you're reasoning about what you don't know as much as what you do. We'll come back to this repeatedly, because imperfect information is one of the most powerful tools a game designer has.

Game theory makes some assumptions to keep things tractable (meaning: to make the math actually solvable). The big one is rationality: we assume that players are trying to maximize their own payoff. They have preferences, they know what they want, and they consistently pursue it.

Now, before you say "but people aren't rational!" — you're right, and we'll get to that. But rationality is a useful starting point, like assuming frictionless surfaces in introductory physics. It helps you understand the baseline before you start layering in the messiness of real human psychology.

The Cast of Characters

Every game-theoretic situation has the same basic cast. Think of these as the ingredients — every game you'll ever design or analyze has all of them, whether you're aware of them or not.

Players are the decision-makers. They can be individuals, but they don't have to be. In game theory, a "player" can be a corporation, a country, an evolutionary population, or a team. What matters is that they're an entity with preferences and the ability to choose. In the games you design, players are usually, well, players — but it's worth knowing the concept is broader.

Strategies are the complete set of choices available to each player. Not just one move: a strategy in the formal sense is a full plan (if this happens, I do X; if that happens, I do Y). Chess has more possible games than there are atoms in the observable universe (Claude Shannon's rough estimate is about 10 to the power of 120 games, against roughly 10 to the power of 80 atoms), and the number of complete strategies is vastly larger still. Most real-world games have a more manageable strategy space, but the principle is the same.
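A quick way to see why "full plan" strategies multiply so fast: in the hypothetical two-step game below, player 1 picks one of two moves and player 2, having seen it, picks one of three replies. A complete strategy for player 2 names a reply for every situation, so there are 3 times 3 = 9 of them, even though any single play of the game uses only one reply. The function and move names are invented for illustration.

```python
from itertools import product


def complete_plans(situations: list[str], options: list[str]) -> list[dict[str, str]]:
    """Every complete plan: one chosen option for each situation the player might face."""
    return [dict(zip(situations, choice))
            for choice in product(options, repeat=len(situations))]


# Hypothetical game: player 1 moves first; player 2 sees the move, then replies.
p1_moves = ["Left", "Right"]
p2_replies = ["A", "B", "C"]

plans = complete_plans(p1_moves, p2_replies)
print(len(plans))  # 9: three replies for each of two situations
print(plans[0])    # {'Left': 'A', 'Right': 'A'}

# With ten situations instead of two, the count jumps to 3 ** 10.
print(len(complete_plans([f"s{i}" for i in range(10)], p2_replies)))  # 59049
```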

Payoffs are what players receive at the end of the game — what they care about. In economics, these are often represented as money. In biology, it's often reproductive fitness. In an actual board game, it might be victory points, or just the feeling of winning. The key insight is that payoffs don't have to be money or even things you can touch — they're whatever the players actually care about, which is why game theory ends up being relevant to any situation where people make choices.

```mermaid
graph TD
    A[Any Strategic Situation] --> B[Players<br/>Who is deciding?]
    A --> C[Strategies<br/>What can they choose?]
    A --> D[Payoffs<br/>What do they get?]
    B --> E[Game-Theoretic Analysis]
    C --> E
    D --> E
    E --> F[Predicted Outcome]
```

When a game designer builds a game, they are, whether they realize it or not, defining players, strategies, and payoffs. The number of units in your starting deck, the resource costs of buildings, the victory point values of different territories: all of these shape the strategy space and the payoff structure. Change them, and you change how rational players behave. That's the connection we'll be building throughout this entire course.

Zero-Sum vs. Non-Zero-Sum: The Most Important Distinction You'll Learn Today

Let's get concrete with an example, because the abstract stuff only goes so far.

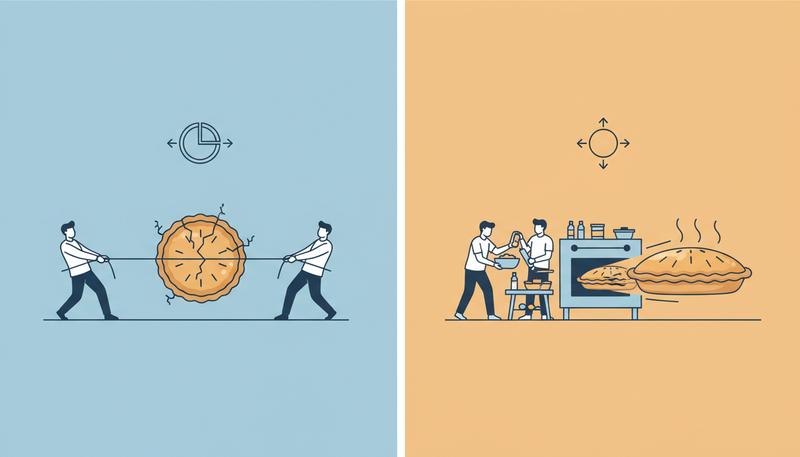

Imagine two kids splitting a pizza. If there are 8 slices and one kid gets 5, the other gets 3. Every slice one kid gains is a slice the other kid loses. The total is always 8. This is a zero-sum game: one player's gain is exactly another player's loss.

Chess is zero-sum. Poker (among the players, ignoring the house) is zero-sum. Rock-Paper-Scissors is zero-sum. Any pure competition where one side winning means the other side losing by exactly the same amount is zero-sum.

Game theory textbooks describe it this way: "you win as much as I lose, or I lose as much as you win... In such a game, our interests are diametrically opposed." Because interests are diametrically opposed, there's nothing to cooperate about: any arrangement that helps one side hurts the other by exactly as much, so the only sensible strategies are competitive ones.

Now imagine those same two kids agree to wash the car together. If they both work hard, they finish in an hour and each gets $10. If only one works hard, it takes forever, they do a bad job, and each gets just $2. If both slack off, they both end up with less than if they had cooperated. The total is not fixed: it's $20 when both work and $4 when only one does, so what they earn together depends on what they do together. This is a non-zero-sum game.
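The zero-sum test is simple arithmetic: add the players' payoffs in every outcome and see whether the total ever changes. The Python sketch below applies it to Rock-Paper-Scissors (scored +1 for a win, -1 for a loss, 0 for a tie), to the pizza split, and to the car wash. The text doesn't give a payoff for both kids slacking, so the $0 used here is a hypothetical placeholder, as are the function names. Games like the pizza split, where the total is a fixed number other than zero (always 8 slices), are called constant-sum and are strategically equivalent to zero-sum games.

```python
# Each game maps an outcome -> (payoff_1, payoff_2).
BEATS = {"Rock": "Scissors", "Paper": "Rock", "Scissors": "Paper"}


def rps_payoff(a: str, b: str) -> tuple[int, int]:
    """+1 for a win, -1 for a loss, 0 for a tie."""
    if a == b:
        return (0, 0)
    return (1, -1) if BEATS[a] == b else (-1, 1)


rock_paper_scissors = {(a, b): rps_payoff(a, b) for a in BEATS for b in BEATS}

# Pizza: kid 1 gets k of the 8 slices, kid 2 gets the rest.
pizza_split = {k: (k, 8 - k) for k in range(9)}

# Car wash in dollars. The (0, 0) payoff for both slacking is a hypothetical
# placeholder; the text only says both get less than when they cooperate.
car_wash = {
    ("Work", "Work"): (10, 10),
    ("Work", "Slack"): (2, 2),
    ("Slack", "Work"): (2, 2),
    ("Slack", "Slack"): (0, 0),
}


def outcome_totals(game: dict) -> set:
    """The distinct combined payoffs across all outcomes."""
    return {sum(payoffs) for payoffs in game.values()}


def is_constant_sum(game: dict) -> bool:
    """True if every outcome hands out the same total (zero-sum is the case where it is 0)."""
    return len(outcome_totals(game)) == 1


print(is_constant_sum(rock_paper_scissors))  # True: every outcome totals 0
print(is_constant_sum(pizza_split))          # True: every outcome totals 8
print(is_constant_sum(car_wash))             # False
print(sorted(outcome_totals(car_wash)))      # [0, 4, 20]
```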

Most of real life is non-zero-sum. Trade, marriage, team sports, business partnerships, international diplomacy — all non-zero-sum situations where cooperation can create value that didn't exist before, and mutual defection can destroy value that did. Britannica puts it well: "Variable-sum games can be further distinguished as being either cooperative or noncooperative. In cooperative games players can communicate and, most important, make binding agreements; in noncooperative games players cannot."

Here's why this matters so much for game design: the zero-sum or non-zero-sum structure of your game determines the entire emotional texture of playing it.

A zero-sum game (like Chess, Go, or most competitive card games) creates pure rivalry. There's a winner and a loser, and every decision sharpens that edge. These games feel tense, adversarial, often deeply satisfying when you win and stinging when you lose.

A non-zero-sum game opens up space for alliance, negotiation, and collective strategy. Games like Pandemic (pure cooperation, everyone wins or everyone loses together), Diplomacy (alliances and betrayals), or Settlers of Catan (competitive but with mutual-gain trading) all have the non-zero-sum structure baked into their design. The emotional range is totally different — you feel more things, more complicated things.

Neither is better. But knowing which one you're building is essential: you can't make a game feel cooperative if its underlying payoff structure is zero-sum.

Why Game Theory Matters Beyond Games

Here's where the misleading name does the most damage. The word "game" makes the whole field sound like a parlor trick — cute but optional. In reality, game theory has been one of the most influential intellectual frameworks of the 20th century.

In economics: Game theory transformed how economists model markets. Companies don't set prices in isolation; they watch competitors. Game theory helps explain why airlines change their fares within hours of each other, why stores run sales at the same time, and why companies in an oligopoly (a market with a few dominant players) tend toward particular pricing behaviors. The Nobel Prize in Economics has gone to game theorists several times, including 1994, when Nash shared it with John Harsanyi and Reinhard Selten.

In politics and international relations: Arms races, nuclear deterrence, trade negotiations, coalition formation — all of these are game-theoretic situations. The doctrine of Mutually Assured Destruction (MAD) during the Cold War was essentially applied game theory: if both superpowers knew that starting a nuclear war meant their own destruction, neither had an incentive to start one. Game theory resources like gametheory101.com connect game theory explicitly to international relations for this reason — the parallels are rich and important.

In biology: This one surprises people. Evolution doesn't think, but evolutionary dynamics are game-theoretic. Animals in a population are effectively "playing strategies" that get selected for or against over generations. Why do male deer grow antlers that are almost too heavy to carry? Because in a competition for mates, the "grow big antlers" strategy beats the "stay light and fast" strategy, even if it makes you worse at literally everything else. Biologist John Maynard Smith formalized this as evolutionary game theory in the 1970s, and it's now central to evolutionary biology.
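Evolutionary game theory replaces "choosing" with "spreading": a strategy's share of the population grows when it earns more than average. The sketch below uses Maynard Smith's classic Hawk-Dove game (swapped in here because the antler example has no stated payoffs) with replicator dynamics, a standard model of this kind of selection. Here v is the value of the contested resource and c the cost of losing an escalated fight; both numbers, the step size, and the function names are illustrative assumptions. When c exceeds v, a population that starts with any mix of Hawks and Doves settles where a share v/c plays Hawk.

```python
def hawk_dove_payoffs(x: float, v: float, c: float) -> tuple[float, float]:
    """Average payoff to a Hawk and to a Dove when a share x of the population plays Hawk.

    v: value of the contested resource; c: cost of losing an escalated fight.
    Two Hawks each expect (v - c) / 2, a Hawk takes v from a Dove, two Doves each get v / 2.
    """
    hawk = x * (v - c) / 2 + (1 - x) * v
    dove = (1 - x) * v / 2
    return hawk, dove


def replicator(x: float, v: float, c: float, steps: int = 5000, dt: float = 0.01) -> float:
    """Discrete-time replicator dynamics: Hawks spread when they out-earn Doves."""
    for _ in range(steps):
        hawk, dove = hawk_dove_payoffs(x, v, c)
        x += dt * x * (1 - x) * (hawk - dove)
    return x


# Illustrative values with c > v; the stable Hawk share should approach v / c.
print(round(replicator(0.9, v=2, c=4), 3))  # 0.5
print(round(replicator(0.1, v=2, c=6), 3))  # 0.333
```

No individual animal "decides" anything here; the population simply drifts toward the mix where Hawks and Doves earn the same, which is the evolutionary counterpart of an equilibrium.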

In everyday life: You negotiate game-theoretically every time you make an offer on a house, every time you decide whether to go first or let someone else speak up in a meeting, every time you choose whether to merge aggressively into traffic or wait your turn. The theory won't tell you exactly what to do in these situations — life is too complicated — but it gives you a framework for thinking about why the situation is structured the way it is.

The Relationship Between Game Theory and Game Design

Now let's zoom back in, because this is the thread we're going to follow for the whole course.

Game theory and game design are not the same thing. Game theory is a mathematical discipline; game design is a craft. But they're in constant conversation, and understanding one makes you dramatically better at the other.

When a game designer sets up the rules of their game, they're essentially constructing a formal game-theoretic situation. They're defining the players, the available strategies, and the payoff structure. And then players, sometimes thousands of them and sometimes just two, actually play that situation. They explore the strategy space. They find dominant strategies (strategies that do better than every alternative no matter what opponents choose), they discover equilibria (stable states where no one wants to change), and they create emergent social dynamics that the designer may never have anticipated.

Here's a concrete example of how immediate this connection is. Imagine a real-time strategy game where a defensive bunker costs 50 resources to build and a basic attacking unit costs 10 resources. In that world, rushing your opponent with five cheap units costs the same as building one bunker, so aggressive early attacks are viable and your opponent has to respect the threat. The strategic landscape has multiple valid openings. Now a designer "balances" the bunker by dropping its cost to 20 resources. Suddenly one bunker costs only as much as two attacking units, yet it still completely negates five attackers at once: the defender spends 20 resources to cancel 50. The math has shifted so that building a bunker is always better than rushing early, regardless of what your opponent does. The rush strategy evaporates overnight. Players who invested hundreds of hours learning aggressive early-game timing attacks find their entire skill set obsolete, not because anyone played badly, but because one number changed the payoff table and collapsed the strategic ecosystem.
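The bunker example can be checked with a few lines of arithmetic plus a dominance test. In the Python sketch below, the unit cost, bunker costs, and five-unit rush come from the example; the third opening ("Greedy", investing in economy), the function names, and all the win-chance numbers are hypothetical, chosen only to illustrate the shift. Before the patch no opening dominates another, and the three form a rock-paper-scissors cycle (Rush beats Greedy, Greedy beats Bunker, Bunker beats Rush). After it, Rush is strictly dominated, so a rational player never picks it.

```python
UNIT_COST = 10   # basic attacking unit, from the example
RUSH_SIZE = 5    # attackers one bunker completely negates, from the example


def defense_efficiency(bunker_cost: int) -> float:
    """Attacking resources neutralized per resource spent on a bunker."""
    return RUSH_SIZE * UNIT_COST / bunker_cost


def strictly_dominates(table: dict, a: str, b: str) -> bool:
    """True if opening a does strictly better than opening b against every opponent opening."""
    return all(table[a][opp] > table[b][opp] for opp in table[a])


def dominated_openings(table: dict) -> list[str]:
    """Openings that some other opening strictly dominates."""
    return sorted({b for b in table for a in table
                   if a != b and strictly_dominates(table, a, b)})


# Hypothetical win chances: row player's opening vs. the opponent's opening.
before_patch = {  # bunker costs 50
    "Rush":   {"Rush": 0.5, "Bunker": 0.4, "Greedy": 0.7},
    "Bunker": {"Rush": 0.6, "Bunker": 0.5, "Greedy": 0.4},
    "Greedy": {"Rush": 0.3, "Bunker": 0.6, "Greedy": 0.5},
}
after_patch = {  # bunker costs 20
    "Rush":   {"Rush": 0.5, "Bunker": 0.2, "Greedy": 0.4},
    "Bunker": {"Rush": 0.8, "Bunker": 0.5, "Greedy": 0.45},
    "Greedy": {"Rush": 0.6, "Bunker": 0.55, "Greedy": 0.5},
}

print(defense_efficiency(50), defense_efficiency(20))  # 1.0 2.5
print(dominated_openings(before_patch))  # []
print(dominated_openings(after_patch))   # ['Rush']
```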

This isn't hypothetical. Designers who work on competitive games like StarCraft, Age of Empires, or Clash Royale track exactly these kinds of shifts with each patch. They know that a small tweak to resource costs, cooldown timers, or unit health values doesn't just "adjust one thing" — it reshapes which strategies are dominant, which become viable, and which disappear from the metagame entirely.

This is why we're covering game theory before we dive deep into game design mechanics. The theory is the foundation. Once you understand how rational agents behave in strategic situations, you can engineer the strategic situations that produce the player behaviors you want.

Want players to negotiate? Build in non-zero-sum dynamics. Want players to feel genuine tension? Make sure no single strategy dominates. Want players to face interesting moral tradeoffs? Structure the payoffs so that the individually rational choice and the collectively good choice diverge — which, as we'll see in the next section, is exactly what the Prisoner's Dilemma does.

What 'Rational' Really Means (And Why Breaking It Is Interesting)

We need to address the elephant in the room: game theory assumes rationality, but humans are famously irrational. So what's the point?

First, let's be precise about what "rational" means here. In game theory, a rational player is one who:

- Has consistent preferences (if you prefer A over B and B over C, you prefer A over C)

- Seeks to maximize their own payoff

- Uses all available information to make the best decision they can
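The first condition, consistent preferences, is mechanical enough to test. Here is a minimal Python sketch (the function name and example preferences are illustrative) that takes a set of strict pairwise preferences and checks transitivity. A cycle such as A over B, B over C, but C over A fails the test, which is exactly the kind of inconsistency the rationality assumption rules out.

```python
from itertools import permutations


def is_transitive(prefers: set[tuple[str, str]]) -> bool:
    """`prefers` holds pairs (x, y) meaning "x is strictly preferred to y".
    Transitive means: whenever x > y and y > z, the set also says x > z."""
    items = {item for pair in prefers for item in pair}
    return all((x, z) in prefers
               for x, y, z in permutations(items, 3)
               if (x, y) in prefers and (y, z) in prefers)


consistent = {("A", "B"), ("B", "C"), ("A", "C")}
cyclic = {("A", "B"), ("B", "C"), ("C", "A")}  # A > B > C > A
print(is_transitive(consistent))  # True
print(is_transitive(cyclic))      # False
```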

This is a much narrower definition than "logical" or "wise" or "good at decisions." A person can be fully rational in the game-theoretic sense while doing things that look completely bizarre from the outside — as long as those things maximize their utility given their preferences.

Here's the thing: even this thin definition of rationality gets violated constantly. People have inconsistent preferences (I'll gladly pay you Tuesday for a hamburger today, even though I know I'll regret it). People make decisions that hurt their own stated goals (New Year's resolutions). People are systematically affected by framing, anchoring, loss aversion, and a hundred other cognitive biases catalogued by behavioral economists like Daniel Kahneman and Amos Tversky.

But here's why the rationality assumption is still enormously useful: it gives you a baseline prediction to deviate from.

If you know how a rational player would behave in your game, and real players are not behaving that way, you've learned something interesting. Maybe the payoffs are poorly communicated. Maybe players are loss-averse and treating losses as much worse than equivalent gains (which is real and documented). Maybe there's a social norm overriding self-interest — players would rather accept a worse outcome than let someone else "win too much."
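Loss aversion has a standard quantitative form in Kahneman and Tversky's prospect theory. The sketch below uses its value function with the parameter estimates Tversky and Kahneman reported in 1992 (curvature 0.88, loss-aversion coefficient 2.25); treat those numbers as typical averages, not constants for any particular player. It also ignores the theory's probability weighting to stay short, and the function names are illustrative. Under these assumptions, a fair coin flip to win or lose 100 points feels like a losing bet, one reason real players may turn down gambles that an expected-value maximizer would be indifferent to.

```python
ALPHA = 0.88   # curvature: diminishing sensitivity to larger gains and losses
LAMBDA = 2.25  # loss aversion: losses weigh about 2.25 times as much as equal gains


def felt_value(x: float) -> float:
    """Prospect-theory value of a change x relative to the status quo."""
    if x >= 0:
        return x ** ALPHA
    return -LAMBDA * (-x) ** ALPHA


def fair_coin_flip(stake: float) -> float:
    """Felt value of a 50/50 bet to win or lose `stake` (probability weighting ignored)."""
    return 0.5 * felt_value(stake) + 0.5 * felt_value(-stake)


print(fair_coin_flip(100) < 0)                        # True: the fair bet feels like a loss
print(round(-felt_value(-100) / felt_value(100), 2))  # 2.25
```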

All of these deviations from rationality are features, not bugs — for game designers. They're the human texture that makes games interesting. A game where all players always play optimally would be solved by computers and forgotten by humans.

Poker is a fitting place for all this to have started. Von Neumann's analysis treated bluffing as a matter of calculated frequencies, but the game itself is played by gloriously irrational, intuition-driven, bluff-reading, pattern-seeking, socially motivated people in real casino card rooms. Chess had perfect information; poker had hidden cards, probability, and psychology. That gap between the rational model and the messy human reality? That's where all the interesting games live.

A Quick Map of Where We're Going

Before we move on, let's take a breath and look at the terrain ahead.

In this section, you've gotten the foundation: what game theory is, where it came from, and who the main figures are — von Neumann and Morgenstern laying the formal groundwork in 1944, Nash extending it just six years later into something that could handle the full complexity of multi-player, mixed-interest situations. You've also picked up the core vocabulary: players, strategies, payoffs, zero-sum, non-zero-sum, perfect information, uncertainty, and rationality.

In Section 4, we're going to go deeper into one of the most important and disturbing results in all of game theory: the Prisoner's Dilemma, and the Nash Equilibrium that makes it so frustrating. It's a situation where two perfectly rational players end up with a terrible outcome — and understanding why will tell you something profound about cooperation, competition, and how to design games that produce interesting choices rather than foregone conclusions.

Everything after that — all the stuff about mechanics, loops, systems, balance, playtesting — it all builds on this. The game theory isn't a detour. It's the whole reason the design decisions make sense.

Grab another cup of coffee. The good stuff is just getting started.
