How to Use AI to Improve Memory and Learning Retention

Memory, Retention, and AI: Making Sure Learning Actually Sticks

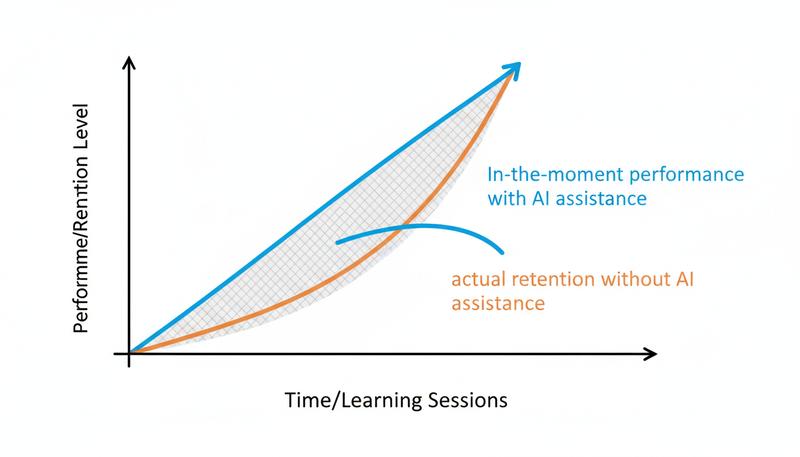

You might be thinking: if I use the metacognitive prompts from the previous section — the confusion-first prompts, the teach-back prompts, the uncertainty-surfacing ones — won't that solve the learning problem entirely? You'll stay engaged, you'll protect your thinking, and the knowledge will stick. Unfortunately, it's not quite that simple. Engagement and metacognitive rigor are necessary for retention, but they're not sufficient on their own. Here's why: there's a critical difference between thinking actively about something in the moment and encoding that thinking into a form your brain will actually remember later.

Consider a scenario that will feel uncomfortably familiar if you've been using AI tools for any length of time. You spend an hour working through a complex topic with ChatGPT — back and forth, clarifying questions, great explanations, the works. You're using all the right prompts. You close the laptop feeling genuinely smart and confident you've learned. Two weeks later, you're trying to explain that topic to a colleague and... nothing. A fog. You remember that you understood it, but the understanding itself has evaporated. This isn't a failure of the metacognitive techniques from the previous section. It's a predictable consequence of how memory works — and how even well-structured AI conversations, by default, tend to work against it.

The thinking was valuable. The metacognitive engagement was real. But thinking actively and remembering durably are not the same thing, and that gap is one of the most important things to understand about learning with AI. This section is about closing that gap. We'll dig into the cognitive science of how memories actually form and stick, identify the specific ways even well-designed AI learning sessions can shortchange retention, and then build a practical toolkit for using AI in ways that make knowledge genuinely, durably yours.

The question at the heart of all this is whether the thinking leaves traces.

How Memory Actually Works: The Two-Part Story

Before we can fix the problem, we need a quick tour of what memory formation actually requires. Not the oversimplified version — the version that actually helps you make decisions.

Memory formation happens in two broad stages. Encoding is the initial processing of new information, the moment when something registers. Consolidation is the process by which that encoded information becomes stable, interconnected with other memories, and resistant to forgetting. Most AI-assisted learning sessions are reasonably good at encoding. They're terrible at supporting consolidation.

Two practices do the most to turn encoded information into durable memory, and decades of memory research rank them among the most robust findings in the field:

The spacing effect (also called distributed practice) is almost absurdly simple: information reviewed multiple times with gaps in between is retained far better than the same amount of review crammed into a single session. Your brain needs time between exposures — time during which it partially forgets, and then has to work to retrieve the information again. That retrieval effort is what strengthens the memory trace. Research dating back to Ebbinghaus in the 1880s and replicated in hundreds of modern studies shows that spacing can double or triple long-term retention compared to massed practice. The weird part? Most people still cram.

Retrieval practice (sometimes called the testing effect) says that the act of recalling information from memory — not just reading it, but actually pulling it back out — is one of the most powerful things you can do for long-term retention. It's not just a way to check whether you've learned something; it is the learning. Studies by Roediger and Karpicke showed that students who practiced retrieving information retained 50% more a week later than students who spent the same time re-studying. Think about that gap. It's not marginal.

Here's the uncomfortable truth: a typical AI learning session works against both of these principles. You get all your information in one sitting (no spacing). And the AI retrieves everything for you (no retrieval practice on your part). You're essentially watching someone else do the very cognitive work that would build your memory.

Remember: Retrieval practice isn't just a way to test learning; it IS the learning. Every time you struggle to pull something from memory, you're strengthening the memory trace. Every time the AI pulls it for you, you're missing that opportunity.

Why AI Can Undermine Retrieval Practice — and How to Fix It

Let's be specific about the mechanism. Retrieval practice works because memory retrieval is itself a form of reconsolidation — each time you successfully recall something, the memory is briefly made malleable and then re-stored in a slightly stronger, more integrated form. The effortful nature of the retrieval is crucial; easy retrieval produces much smaller benefits than difficult retrieval. This is called desirable difficulty, and it's one of the most counterintuitive findings in learning science.

AI is, by design, an engine for eliminating effortful retrieval. Ask it a question and it answers immediately. Ask it to explain a concept and it explains fluently. There's no struggle, no productive confusion, no cognitive effort required on your part. This is wonderful for getting things done. It's the opposite of what you want for building durable memory.

The fix isn't to use AI less — it's to use it differently. Specifically, you can use AI to create retrieval practice instead of replacing it.

graph TD
    A[AI Learning Session] --> B{Default Mode}
    A --> C{Retention Mode}
    B --> D[AI generates explanations]
    B --> E[You read and feel understanding]
    B --> F[Memory: weak encoding only]
    C --> G[AI generates questions & prompts]
    C --> H[You retrieve, struggle, produce]
    C --> I[Memory: encoding + consolidation]

Here's how this looks in practice. Instead of asking "Explain the spacing effect to me," you might:

- Read about the spacing effect from another source first

- Close that source and ask AI: "Give me 5 questions about the spacing effect that I should be able to answer if I understand it well — but don't answer them yet"

- Answer the questions yourself, in writing, without looking anything up

- Then ask the AI to evaluate your answers and fill in gaps

This flips the AI's role. Instead of being the source of retrieved information, it becomes the architect of retrieval challenges. It's a subtle shift with a large effect.

Scott Young's synthesis of learning strategies with ChatGPT captures this well: the most powerful use of AI for learning isn't getting AI to explain things to you — it's using AI to create the conditions under which you have to explain things back. The Socratic approach, the quiz-generation approach, the elaboration-prompting approach — all of these work because they put cognitive effort back on the learner's side of the equation.

The Generation Effect: Why Producing Beats Consuming

Related to retrieval practice is a phenomenon called the generation effect: information that you actively generate — produce, construct, create — is remembered substantially better than information you passively receive, even when the semantic content is identical.

In classic experiments, participants who saw word pairs like "hot — ____" and had to generate "cold" remembered the word better than participants who simply read "hot — cold." The act of production creates a richer, more distinctive memory trace. The generation effect has been replicated across dozens of studies and it scales up from simple word pairs to complex conceptual material.

For AI-assisted learning, the implication is clear: you should be producing more than you're consuming. Here are some concrete ways to build generation into your AI sessions:

Explain-before-you-ask: Before asking AI to explain a concept, write your best current understanding of it. Then ask AI to respond specifically to what you wrote — correcting errors, filling gaps, building on what you got right. You've activated generation before the explanation arrives.

Reconstruct from skeleton: Ask AI to give you just the key terms or section headers for a topic. Then write what you think goes under each one before asking for the full treatment.

Teach it back: After learning something from AI, close the conversation and write (or dictate, or draw) a complete explanation as if teaching it to someone else. Then open a new AI session and paste your explanation, asking: "What have I gotten wrong or left out?" This is the Feynman technique with an AI-powered feedback loop.

Create your own examples: Ask AI to teach you a principle. Then, before asking for examples, generate your own. Then ask AI to evaluate whether your examples actually illustrate the principle correctly.

Tip: The slight discomfort of not knowing exactly what to write before you've read the explanation is the feeling of desirable difficulty. Lean into it. That friction is the sensation of a memory being formed.

Personalized Retrieval Practice: Using AI as Your Quiz Master

One of the genuinely exciting applications of AI for memory is its ability to generate customized, adaptive retrieval practice at scale. Traditional flashcard systems like Anki are powerful but require you to create the cards — which is time-consuming and often gets skipped. AI can dramatically lower the friction.

Here's a workflow that makes this concrete:

The Post-Session Quiz Protocol: At the end of any significant AI learning session or after reading a new chapter or article, paste a summary (or the text itself) into your AI tool with this prompt: "Based on this material, generate 10 quiz questions that test conceptual understanding, not just fact recall. Vary the format — some should ask me to explain in my own words, some should present a scenario and ask me to apply the concept, and some should ask me to identify the principle at work in an example. Don't include the answers yet."

You then answer the questions in writing. Then you ask AI to evaluate your responses. The whole cycle takes 15-20 minutes and converts a passive reading session into an active retrieval session.
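If you run this protocol often, it may help to keep the prompt as a reusable template so the wording stays consistent between sessions. The sketch below is one possible way to do that in Python; the names `QUIZ_PROMPT_TEMPLATE` and `build_quiz_prompt` are made up for illustration, and the output is just text to paste into whatever AI tool you use.

```python
# Hypothetical helper: wraps the quiz prompt from the protocol above in a
# reusable template. Nothing here talks to an AI tool; it only builds text.
QUIZ_PROMPT_TEMPLATE = (
    "Based on this material, generate {n} quiz questions that test conceptual "
    "understanding, not just fact recall. Vary the format: some should ask me "
    "to explain in my own words, some should present a scenario and ask me to "
    "apply the concept, and some should ask me to identify the principle at "
    "work in an example. Don't include the answers yet.\n\n"
    "Material:\n{material}"
)


def build_quiz_prompt(material, n=10):
    """Fill the quiz prompt with a session summary or the source text."""
    material = material.strip()
    if not material:
        raise ValueError("Paste a summary or the source text first.")
    return QUIZ_PROMPT_TEMPLATE.format(n=n, material=material)


prompt = build_quiz_prompt(
    "Spacing and retrieval practice both rely on effortful recall."
)
print(prompt)
```

The default of 10 questions mirrors the prompt above; pass a smaller `n` for short articles.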

The Spacing Reminder System: AI can also help you schedule retrieval practice. After a learning session, ask: "Can you generate a review schedule for this material following spaced repetition principles? Suggest specific questions I should revisit at 1 day, 3 days, 1 week, 2 weeks, and 1 month, and give me the questions now so I can save them." This combines spacing and retrieval practice into a single lightweight system.
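The review intervals in that prompt are easy to turn into calendar dates yourself, so you don't depend on the AI remembering them. The following is a minimal sketch, assuming "1 month" is approximated as 30 days; `review_schedule` and the example questions are illustrative names, not part of any existing tool.

```python
from datetime import date, timedelta

# Review offsets in days, taken from the intervals suggested above:
# 1 day, 3 days, 1 week, 2 weeks, and 1 month (assumed here to be 30 days).
REVIEW_OFFSETS_DAYS = [1, 3, 7, 14, 30]


def review_schedule(session_date, questions):
    """Return a list of (review_date, questions) pairs for one session."""
    return [
        (session_date + timedelta(days=offset), list(questions))
        for offset in REVIEW_OFFSETS_DAYS
    ]


schedule = review_schedule(
    date(2025, 1, 6),
    ["What is the spacing effect?", "Why does effortful retrieval help?"],
)
for review_date, qs in schedule:
    print(review_date.isoformat(), "-", len(qs), "questions to answer from memory")
```

Each entry can be copied into a calendar or to-do app along with the saved questions.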

Adaptive Difficulty: Unlike static flashcards, AI-generated quizzes can be adapted based on your performance. If you're consistently getting concept X right, ask AI to stop asking about it and focus on the areas where your answers are weakest. This is rudimentary adaptive learning, but it works.
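One lightweight way to decide what to ask the AI to focus on is to keep a simple log of which quiz answers you got right. The sketch below assumes a hand-kept log of `(concept, was_correct)` pairs; that format and the function name `weakest_concepts` are hypothetical.

```python
from collections import defaultdict


def weakest_concepts(results, top_n=2):
    """Rank concepts by quiz accuracy, lowest first.

    `results` is a list of (concept, was_correct) pairs recorded by hand
    after each quiz (an assumed format, not something an AI tool exports).
    """
    attempts = defaultdict(int)
    correct = defaultdict(int)
    for concept, was_correct in results:
        attempts[concept] += 1
        if was_correct:
            correct[concept] += 1

    accuracy = {c: correct[c] / attempts[c] for c in attempts}
    # Sort by accuracy, then by name so that ties come out in a stable order.
    ranked = sorted(accuracy, key=lambda c: (accuracy[c], c))
    return ranked[:top_n]


quiz_log = [
    ("spacing effect", True),
    ("spacing effect", True),
    ("generation effect", False),
    ("generation effect", True),
    ("interleaving", False),
    ("interleaving", False),
]
focus = weakest_concepts(quiz_log)
print("Ask the AI to focus on:", ", ".join(focus))
```

With the sample log, the next quiz request would concentrate on interleaving and the generation effect and drop the spacing effect.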

Elaborative Interrogation: Asking "Why" as a Memory Strategy

One of the most robust techniques for deep encoding is elaborative interrogation — the practice of asking "why is this true?" about every fact or principle you're trying to learn. Rather than encoding information as an isolated data point, you force it into a causal web of related knowledge. Causal explanations are far more memorable than bare facts because they connect to the broader network of things you already know.

This is a technique where AI is genuinely excellent as a learning partner. Here's the basic protocol:

- Encounter a fact or principle you want to remember

- Ask AI: "Why is this true? What's the underlying mechanism?"

- When AI explains, ask: "Why does that work the way it does?"

- Go 2-3 levels deep, until you hit bedrock principles or you genuinely understand the causal chain

- Then close the conversation and try to reconstruct the causal chain yourself from memory

The power of this technique is that it transforms isolated knowledge into a network. And networks are sticky — pulling on any one node activates the whole structure. When you next need to recall the original fact, you have multiple paths to it rather than one brittle connection.

In practice, it might look like this: you learn that sleep is important for memory consolidation. Instead of just noting "sleep helps memory," you interrogate:

- Why does sleep help memory consolidation? → Because during sleep, especially deep sleep, the hippocampus replays recent experiences and transfers memories to the neocortex for long-term storage.

- Why does the hippocampus need to replay them? → Because the hippocampus has limited capacity and is a temporary storage area; the replay process strengthens and integrates the memories into the broader cortical network where they become stable.

- Why is the neocortex better for long-term storage? → It has a distributed architecture that allows for integration with existing knowledge networks and is more resistant to disruption...

After three levels of "why," you don't just know that sleep helps memory — you understand the whole system. And that understanding is dramatically more memorable than the original fact.

Interleaving: Mixing Topics for Better Long-Term Retention

Here's a finding that violates most people's intuitions about studying: interleaving — mixing different topics or problem types within a single study session — produces better long-term retention than blocking (studying one topic exhaustively before moving to the next), even though blocking feels more productive in the moment.

The reason is similar to the desirable difficulty argument: when you switch between topics, your brain has to work harder to retrieve the relevant framework for each new problem. That extra work is uncomfortable, but it's exactly the kind of cognitive effort that builds durable memories and flexible knowledge that transfers to new situations. Research by Kornell and Bjork showed that interleaved practice produced dramatically better performance on a final test, even though students who'd done blocked practice felt they'd learned more. Felt more confident. Didn't retain it better.

The implication for AI learning sessions is counterintuitive: don't spend an entire session going deep on one topic. Deliberately mix. Here's a practical structure:

The Interleaved Session Design: When planning a learning session, identify 3-4 related but distinct topics or problem types. Design your AI interactions to rotate between them every 20-30 minutes rather than exhausting one before moving on. After covering Topic A for 20 minutes, switch to Topic B, then C, then briefly revisit A with fresh eyes. The slight confusion of switching is a feature, not a bug.
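The rotation described above can be laid out as a simple timetable before the session starts. This is a rough sketch under stated assumptions: 20-minute blocks as in the example, and a 10-minute slot standing in for "briefly revisit"; `interleaved_plan` is an illustrative name.

```python
def interleaved_plan(topics, block_minutes=20, revisit_minutes=10):
    """Return (start_minute, end_minute, label) blocks that rotate through
    the topics once and then briefly revisit the first one.

    The 10-minute revisit length is an assumption; adjust to taste.
    """
    if not topics:
        return []
    plan = []
    start = 0
    for topic in topics:
        plan.append((start, start + block_minutes, topic))
        start += block_minutes
    plan.append((start, start + revisit_minutes, f"{topics[0]} (revisit)"))
    return plan


plan = interleaved_plan(
    ["retrieval practice", "generation effect", "interleaving"]
)
for start, end, label in plan:
    print(f"{start:>3}-{end:<3} min  {label}")
```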

Cross-Topic Connections: Ask AI to help you find the connections between interleaved topics explicitly: "I've been learning about retrieval practice and about the generation effect today. How are these related? What's the underlying principle they share?" Forcing yourself to articulate connections between topics deepens encoding for both and builds the integrated knowledge networks that characterize expertise.

Warning: Interleaving feels less productive than blocking during the session itself. You'll cover less ground on any single topic. This is normal and correct. The metric you want isn't "how much did I cover today" but "how much will I retain in three weeks." Those are different targets and they require different strategies.

Building Bridges: Connecting New to Known

Another powerful encoding strategy is what cognitive scientists call elaborative encoding — connecting new information to things you already know well. The brain stores information in associative networks; new information that connects to existing networks is easier to encode, easier to retrieve, and easier to generalize.

This is an area where AI's breadth of knowledge is particularly useful. AI can help you find unexpected connections between what you're learning and what you already know, generating the kind of "aha, this is like that!" moments that create robust memories.

Try this prompt: "I'm learning about [new concept]. What analogies or connections to [domain I know well] would help me understand this? Find at least three points where these two domains intersect or where the principles are similar." If you're a software engineer learning statistics, ask for connections to algorithmic thinking. If you're a musician learning physics, ask for connections to acoustics and wave behavior. If you're a marketer learning psychology, ask for connections to persuasion and attention.

The more specific and personal these connections are — the more they link to your existing knowledge rather than some generic example — the more memorable they'll be. A connection that surprises you, that makes you say "I never thought about it that way," is doing something deeper than a textbook analogy.

You can also run this in reverse: "I understand [concept I know well] pretty deeply. What aspects of [new concept I'm learning] are structurally similar? Help me build on what I already know." This is sometimes called analogical scaffolding, and it's one of the most efficient routes to understanding genuinely new territory.

The 24-Hour Review: Consolidating Before You Forget

The forgetting curve is one of the most sobering graphs in all of cognitive science: in Ebbinghaus's famous experiments with nonsense syllables, roughly 50% of newly learned material was forgotten within an hour and about 70% within 24 hours. Meaningful material tends to fade more slowly, but the overall shape is similar. The curve has a bright side, though: a review session within 24 hours of initial learning can reset it and substantially extend retention.

This is where an AI-assisted consolidation session becomes high-leverage. The protocol is simple:

The Next-Day Consolidation Session: Within 24 hours of a significant learning session, open a fresh AI conversation and try to reconstruct what you learned — not by looking at notes, but from memory. Write out the key ideas, the main points, what surprised you, what confused you, and any connections you noticed. Then ask AI to respond to your reconstruction: "Here's what I remember from yesterday's session on [topic]. What have I gotten wrong, what have I missed, and what connections have I failed to make?"

This does several things simultaneously. It provides retrieval practice (strengthening memory traces). It exposes gaps you didn't know you had (calibrating your self-knowledge). And it resets the forgetting curve, giving the material a much better chance of making it to long-term memory.

The reconstruction doesn't need to be perfect — in fact, an imperfect reconstruction that surfaces your actual gaps is more useful than a perfect one you produced by consulting your notes. The point is the effortful retrieval, not the accuracy.

graph LR
    A[Initial Learning Session] --> B[Forgetting begins immediately]
    B --> C{24-hour mark}
    C -->|No review| D[~70% forgotten]
    C -->|AI consolidation session| E[Forgetting curve reset]
    E --> F[Next review at 1 week]
    F --> G[Strong long-term retention]

Knowledge Management Systems: Reinforcing vs. Replacing Memory

A word about knowledge management systems — tools like Notion, Obsidian, Roam Research, and the various AI-enhanced PKM (personal knowledge management) tools that have exploded in popularity. These tools are genuinely useful for organizing information, finding connections across notes, and managing the complexity of what you know. But they come with a trap that matters deeply for retention.

The trap is this: externalizing knowledge can replace the need to internalize it. When you know you can retrieve a fact from your perfectly organized notes with a search, the brain's calculus changes — why bother encoding this to memory if the external store is reliably accessible? This isn't irrational; it's the normal operation of what researchers call cognitive offloading, and in moderate amounts it's fine. But it becomes a problem when the result is that you've built an impressive-looking note system that contains knowledge you don't actually have.

The practical implication isn't to abandon knowledge management systems; they're genuinely valuable for knowledge work. It's to use them in a way that reinforces rather than replaces memory:

Write notes in your own words, never just copy-paste. The act of reformulating forces encoding. Copy-pasting is digital transcription, not learning.

Review notes by covering them and reconstructing them, then checking. Don't just read through a note to feel satisfied — treat every review as a retrieval practice opportunity.

Use AI to quiz you on your notes, not just to search them. Rather than asking "What did I write about X?", ask "Don't show me my notes on X yet. Ask me questions about X one at a time, and after I answer, tell me what I got wrong or left out."

Distinguish between reference and memory. Some information genuinely belongs in a reference system — phone numbers, specific data points, complex specifications — rather than in your head. Other information is foundational enough that you need to know it without looking it up. Be intentional about which category each piece of information falls into, and use your AI sessions to build genuine memory for the foundational stuff.

The Role of Sleep (and Why You Need AI-Free Time)

No section on memory consolidation would be complete without the neuroscience that most people wish would go away: sleep is not optional for memory. It is the mechanism by which the day's experiences are processed, organized, and transferred to long-term storage. During slow-wave sleep, the hippocampus replays recent memories, identifying patterns and compressing information. During REM sleep, the brain makes associative connections between new material and existing knowledge. Both stages are essential.

This has a direct implication for how you design AI learning sessions: don't schedule your most important learning sessions late at night when you're about to get too little sleep. The encoding happens during waking hours, but the consolidation happens during sleep. A full night of sleep following a learning session is worth more for retention than an equal amount of additional study time.

But there's a subtler point here that's specific to AI use: you need deliberate AI-free consolidation time between learning sessions. Not because AI is bad, but because memory consolidation requires mind-wandering — the kind of loose, associative, internally-directed thinking that happens when you're not engaged with a screen. Research on the brain's default mode network suggests that this seemingly idle mental activity is when much of the cross-referencing and integration of new memories actually happens.

If you use AI in the evening for learning and then continue engaging with AI or other digital stimulation right up until sleep, you're potentially shortchanging the mind-wandering consolidation window. Consider building in 30-60 minutes of screen-free time between learning and sleep — not because this is folk wisdom, but because the neuroscience of memory consolidation actually supports it.

Tip: The walks, showers, and staring-out-the-window moments that feel like wasted time are often when your brain is doing its most important learning work. Protect them, especially after a heavy AI learning session.

Building a Weekly AI Learning Rhythm

Let's put all of this together into a practical weekly structure that optimizes for retention rather than just coverage. This isn't a rigid prescription — adapt it to your actual schedule and learning goals. But it reflects the principles we've covered: spacing, retrieval practice, interleaving, generation, elaboration, and consolidation.

Day 1 — New Content + Generation:

- Learn new material through a combination of primary sources and AI explanations

- End the session with 10 minutes of generation: write key ideas in your own words before checking them

- Ask AI to generate quiz questions — save them for Day 2

Day 2 — Retrieval + Consolidation:

- Before any new material, answer yesterday's quiz questions from memory

- Use AI to evaluate your answers and identify gaps

- Brief new material session, followed by another generation round

- Schedule Day 5 review

Day 3 — Interleaving:

- Deliberately mix today's new topic with material from Day 1

- Use elaborative interrogation: ask "why is this true?" three levels deep on at least one concept

- Use AI to find cross-topic connections between what you've learned this week

Day 4 — Application:

- No new conceptual material — focus on applying what you've learned

- Explain key concepts to AI as if teaching them (protégé effect, see Section 9)

- Identify where your understanding breaks down under application pressure

Day 5 — Spaced Review:

- Return to Day 1 material without looking at notes first

- Reconstruct from memory, then verify with AI

- Generate new questions that probe the edges of your understanding

Weekend — Integration:

- Longer consolidation session connecting the week's material to prior knowledge

- Ask AI to find bridges between this week's material and things you learned in previous weeks

- Brief review of material from 2-4 weeks ago (spaced retrieval practice)

- Plan next week's learning with interleaving built in from the start

This rhythm sounds demanding written out in full, but most of the individual elements take 10-20 minutes. The total additional time commitment over a passive AI-interaction approach is perhaps 30-60 minutes per week, and the retention gains are not marginal. As a rough illustration rather than a measured figure, think of the difference between remembering 20% of what you learned after a month and remembering 60-80%.

The key insight is that AI makes it easy to cover a lot of ground. But covering ground isn't learning. What you want is to cover ground and leave footprints — to have the terrain become genuinely familiar so you can navigate it again without a guide. That requires effortful processing, spacing, retrieval, sleep, and time. AI can support all of those things if you structure your sessions with that goal in mind.

The intelligence you build through deliberate practice with AI — the understanding that's been retrieved, elaborated, connected, and consolidated — is yours in a way that AI-retrieved-in-the-moment information simply isn't. It's there when the power goes out, when you're in the meeting room, when you're staring at a problem at two in the morning with nothing but your own mind to work with. That's the version of learning worth building.
