How Metacognition Determines If AI Helps or Hurts You

Here's what we need to address immediately: recognizing the warning signs of hollowed thinking — knowledge gaps, decreased tolerance for ambiguity, outputs that improve while your thinking stalls — isn't enough. Catching these signals is the first step, but it only works if you have the skill to act on them. That skill is metacognition. It's the difference between seeing the problem and actually solving it. It's what transforms vague awareness into precise, actionable change.

Consider this scenario. You're working through a difficult problem — maybe drafting a complex analysis, or trying to understand a new concept for a project. You pull up ChatGPT, have a productive back-and-forth, and end up with something genuinely good. You feel sharp. Capable. Like you've really gotten a handle on this. Then a colleague asks you to explain the concept in your own words, without looking anything up. And you realize, with a creeping discomfort, that you can't quite do it. The words come out fuzzy. The logical steps you felt so certain about a few minutes ago have somehow dissolved. This isn't an intelligence failure. It's a metacognitive one — a failure of the very monitoring skill that would have protected you from the hollowed thinking we discussed in the last section. And it might be the single most important problem to understand if you want AI to actually make you smarter.

Why? Because metacognition is the skill that transforms intentional patterns from a good idea into a sustainable practice. It's what lets you notice when you're outsourcing thinking rather than augmenting it. It's the internal compass that keeps you on the side of "do the work with AI" rather than slipping into "have AI do the work for you." Without it, even the best techniques and strategies will fail you. You're building on sand.

The Uncomfortable Research Finding

In 2024, a group of researchers published a study with a title that's worth reading twice: "AI Makes You Smarter, But None The Wiser." It's exactly as unsettling as it sounds.

The study examined 246 participants who used AI assistance to solve logical problems drawn from the Law School Admission Test — one of the more demanding standardized tests out there, designed to measure analytical reasoning. Here's what they found:

Compared to a control population, AI-assisted participants solved about three more problems on average. Real, measurable improvement. The AI was doing what we hope AI does: boosting performance on difficult tasks.

But then the researchers asked participants to assess their own performance, and this is where it gets interesting. An accurate self-assessment would have matched the score each participant actually achieved, three-point gain included. Instead, participants overestimated their own scores by about four points, meaning they thought they'd done even better than they actually had (arXiv:2409.16708v1). Their self-assessment accuracy degraded as their performance improved.

Even stranger: participants with higher AI literacy (people who understood how AI systems actually work) showed worse self-assessment accuracy. More technical knowledge went hand in hand with more confidence and less precision in self-judgment. Expertise in the tool was associated with being worse at evaluating what the tool-plus-them had actually accomplished.

Think about what this means practically. You're getting better results. You feel like you're doing great. And you're simultaneously becoming less accurate about what you actually know. It's a confidence boost built on sand — and it feels identical to earned confidence built on rock.

The Disappearing Dunning-Kruger Effect

You've probably heard of the Dunning-Kruger effect: the finding that people with low ability in a domain tend to dramatically overestimate their competence (because they lack the knowledge to recognize their own mistakes), while people with high ability tend to slightly underestimate theirs. It's one of the best-known findings in social psychology, and it shows up reliably on the LSAT tasks used in this study when humans tackle them alone.

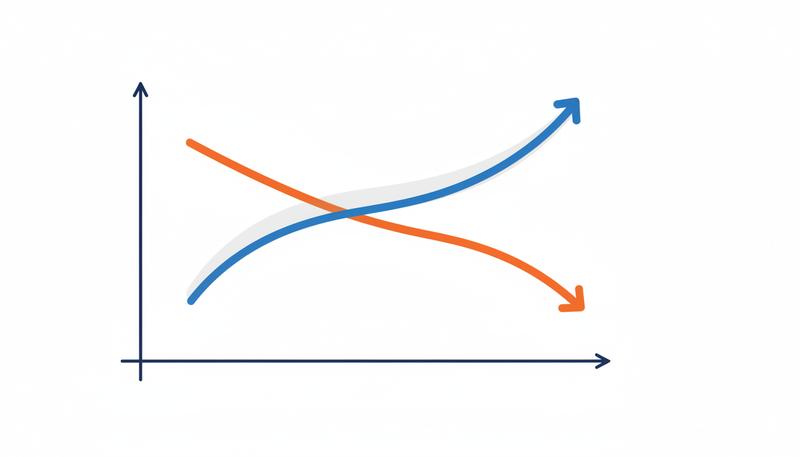

But here's what the researchers found with AI assistance: the Dunning-Kruger effect disappeared. The characteristic pattern — low performers overconfident, high performers appropriately humble — simply ceased to exist. Everyone's self-assessment converged toward inaccurate overconfidence.

What AI seems to do is level people's metacognitive performance, but in the wrong direction. It doesn't raise everyone's self-awareness to the level of the most accurate self-assessors. It drags everyone toward the same overconfident middle. The low performers who were already overconfident stay overconfident. The high performers who had calibrated, accurate self-assessments become overconfident too.

This is a genuinely novel finding, and it matters a lot. Accurate metacognitive monitoring isn't just nice to have — it's what enables you to know when to trust AI output and when to question it, when to keep working on understanding something and when you've actually learned it, when to rely on your own judgment and when to seek help. If AI systematically degrades that capacity across the board, we have a serious problem that no amount of prompting technique will solve on its own.

```mermaid
graph TD
    A[AI Assistance Applied] --> B[Task Performance Improves]
    A --> C[Self-Assessment Accuracy Degrades]
    B --> D[You feel competent]
    C --> D
    D --> E[Overconfident Self-Assessment]
    E --> F[Stop seeking genuine understanding]
    F --> G[Performance-Understanding Gap Widens]
```

The Fluency Illusion: When Smooth Feels Like Smart

There's a cognitive phenomenon that predates AI but gets dramatically amplified by it: the fluency illusion. When information is easy to read, process, or recall, our brains mistake that ease for understanding. We feel like we've grasped something when really we've just encountered it smoothly.

This is why rereading your notes feels more productive than it actually is — the words flow familiarly, so you feel like you know them. It's why well-formatted, clearly-written text seems more credible than information that's harder to parse. Ease of processing masquerades as depth of understanding.

AI output is extraordinarily fluent. ChatGPT doesn't write haltingly. It doesn't make the kind of small errors and self-corrections that human explanation contains. It presents information in polished, confident prose that's easy to read and feels authoritative. And your brain, running its fluency heuristic, registers all that smoothness as comprehension.

This is subtly different from the performance-understanding gap, though the two are related. The fluency illusion is what happens while you're reading AI output — the sense of "yes, yes, I follow this, I get it" that evaporates when you try to do something with the knowledge. The performance-understanding gap is the downstream consequence: you completed the task, but you didn't actually learn.

Warning: If reading an AI explanation feels unusually easy and clear, that's not necessarily a sign you're learning well. It might mean you're experiencing fluency without comprehension. The test isn't how smooth it feels — it's whether you can produce it independently.

Daniel Kahneman's work on System 1 and System 2 thinking is useful here. Your System 1 — fast, automatic, intuitive — is what interprets the fluency of AI output as understanding. Your System 2 — slow, deliberate, analytical — is what would actually check whether you've understood something by trying to reconstruct it. The problem is that fluent AI output feels like it doesn't require System 2 engagement. The hard work seems already done.

The Calibration Problem

All of this converges on what researchers call calibration — the alignment between your confidence in your knowledge and the actual accuracy of that knowledge. A perfectly calibrated person, when they say they're 80% sure of something, is right about 80% of the time. Perfect calibration is rare, but getting reasonably close is the goal.
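
The definition above can be written out as a short sketch. The symbols are introduced here for illustration and are not taken from the study: $c_i$ is the confidence you state for claim $i$ (as a probability), $y_i \in \{0, 1\}$ records whether you turned out to be right, and $N$ is the number of claims.

```latex
% Perfect calibration: among claims made with confidence p, a fraction p are correct.
\Pr(y = 1 \mid c = p) = p \quad \text{for every stated confidence } p

% A simple summary of miscalibration: mean confidence minus hit rate.
\text{overconfidence} = \frac{1}{N}\sum_{i=1}^{N} c_i \;-\; \frac{1}{N}\sum_{i=1}^{N} y_i
```

A positive value means your average confidence exceeds your hit rate. For example, stating 80% on ten claims and getting six of them right gives $0.80 - 0.60 = 0.20$.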

AI assistance seems to miscalibrate people systematically. In the study above, users overestimated their performance when AI was involved, and the likely reason is not that they were foolish. The cues they normally use to calibrate (did this feel hard? how many times did I get stuck? could I have generated this myself?) are disrupted when AI handles the difficult parts.

Here's a concrete way to think about the calibration problem. Normally, when you struggle through a difficult problem and eventually solve it, the struggle itself is calibrating information. The effort tells you: this is hard for me, I had gaps, I should review this. When AI handles the struggle seamlessly and hands you a solution, that calibrating signal disappears. You didn't experience the difficulty, so you don't know it existed.

It's like doing a workout on a machine that compensates perfectly for your weak spots — you complete the reps, you feel good, but you haven't actually strengthened anything. And you don't know which areas need work because the machine never let them fail.

The solution isn't to abandon AI — it's to build deliberate calibration practices that replace the signal you've lost. More on those shortly.

Metacognitive Monitoring: A Before-During-After Framework

The most practical way to maintain metacognitive awareness while using AI is to build checkpoints into your workflow — before, during, and after AI interactions. Think of these as tiny acts of intellectual honesty that keep your self-assessment accurate.

Before you engage AI:

Before you pull up ChatGPT or Claude, spend sixty seconds asking yourself what you actually know about this topic. Not what you vaguely feel you know — what you could explain. Try to articulate the core concept or problem in a sentence or two, without looking anything up. This does two things: it activates your existing knowledge (which helps you evaluate AI output more critically) and it creates a baseline against which you can measure what you actually learned from the interaction.

A simple prompt to yourself: What do I already know about this, and where exactly am I stuck? If you can't answer the second part specifically, you're not ready to use AI effectively yet — you'll just accept whatever it gives you.

During the AI interaction:

As you read AI output, practice what cognitive scientists call active monitoring — pausing periodically to check whether you're actually following the reasoning or just processing the words. Specific questions to ask yourself:

- Could I explain what I just read to someone else?

- Does this make sense given what I already know?

- Where are the steps in this reasoning that I'd want to verify?

- Is there anything here that seems surprising? (Surprising claims deserve extra scrutiny.)

The goal isn't skepticism for its own sake. It's staying awake to your own comprehension rather than passively absorbing.

After the interaction:

This is where the most important metacognitive work happens. It is also the step most people skip entirely, assuming the session is done.

The Close-the-Laptop Test

Here's the most reliable metacognitive tool I know: close the laptop and try to explain what you just learned.

Not write about it with the AI output in front of you. Not read back over your notes. Close everything, pick up a pen, and try to reconstruct the key ideas from memory. If you were working on a problem, try to solve a similar one without assistance. If you were learning a concept, try to explain it as if to a smart friend who knows nothing about it.

This is uncomfortable in a specific, productive way. The gaps become immediately obvious. The fuzzy parts — the places where you thought you understood but were actually just following the AI's lead — reveal themselves quickly when there's no scaffold to lean on. And that discomfort is valuable information. It's your metacognitive system working correctly.

The close-the-laptop test is essentially a form of forced retrieval practice, and retrieval practice is one of the most well-established learning techniques in cognitive science, consistently more effective than rereading or reviewing. But it also functions as a metacognitive calibration tool. The experience of trying and partially failing updates your self-assessment in a way that reading and feeling confident simply doesn't.

Make this a habit. Even spending five minutes at the end of an AI-assisted work session doing this kind of retrieval will dramatically improve your calibration over time.

Self-Explanation as a Metacognitive Mirror

Self-explanation — the practice of explaining AI output in your own words — is related to the close-the-laptop test but serves a slightly different function. Where the laptop test reveals gaps, self-explanation reveals what you actually understand about the reasoning behind a conclusion.

Here's how it works in practice. When you get output from an AI — an explanation, a solution, an argument — instead of accepting it and moving on, try to rephrase each key claim in your own language. Not paraphrase in the sense of replacing words while keeping the structure identical. Actually translate the ideas through your own understanding, and if you find a step you can't translate, mark it.

The research on "learning by teaching" and the Protégé Effect consistently shows that the act of explanation compels you to structure knowledge in ways that passive reading doesn't. When you try to explain something, your own misconceptions become objects you can see and work with — rather than invisible gaps hidden beneath a fluent surface.

The metacognitive function of self-explanation is that it distinguishes between recognition (yes, that makes sense when I read it) and generation (I can produce this reasoning myself). These are different levels of understanding, and only generation is transferable to new problems. Self-explanation is how you check which one you actually have.

Tip: After reading AI output on a complex topic, try the "rubber duck" technique — explain the concept out loud to an imaginary listener who knows nothing about it. The places where you stumble or reach for the AI window are exactly where you should spend more time working.

A practical variation: after an AI interaction, open a blank document and write a brief "translation" of what you learned — not a summary of what the AI said, but your account of what it means and why it matters. This forces genuine processing and leaves you with a record that's actually yours.

AI Literacy Makes It Worse (And What That Means for You)

Let's return to one of the more counterintuitive findings from the metacognition research: people with higher AI literacy showed worse self-assessment accuracy, not better. This deserves unpacking because it has direct implications for the kind of person likely to be reading this course.

The likely explanation is a form of expert overconfidence. People who understand AI well have more faith in its outputs — they know what good AI reasoning looks like, they can identify when an AI is being appropriately nuanced, and they tend to trust the system more. That trust is often warranted on specific outputs, but it bleeds into an unjustified confidence about their own understanding of the domain the AI is discussing.

In other words: knowing a lot about the tool increases your trust in the tool, which decreases your vigilance about whether you actually understand what the tool produced.

This is a humbling finding for anyone who considers themselves technically sophisticated. Knowing more about AI doesn't protect you from its metacognitive hazards — if anything, it may expose you to a subtler version of them. The protection comes not from AI literacy but from metacognitive practice: the deliberate habits of checking your own understanding regardless of how trustworthy the tool is.

Building Your Personal Metacognitive Practice

Metacognition isn't a technique you deploy once — it's a practice that needs to become habitual before it becomes automatic. Here are the concrete habits worth building.

The daily knowledge audit. At the end of any day where you used AI significantly, spend five minutes asking: What did I actually learn today versus what did AI generate for me? What could I explain or reproduce without assistance? This isn't about self-criticism — it's about accurate accounting. Think of it like a financial audit for your own knowledge.

The confidence-accuracy log. When you're about to use AI to check or extend your knowledge on something, first write down your own answer and your confidence in it (0-100%). After the interaction, record whether that answer held up. Over time, you'll develop a realistic picture of where you tend to be over- or under-confident, and you'll build the calibration that AI interactions erode.
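
If you keep the log in a file rather than on paper, the bookkeeping might look something like the sketch below. Everything in it is an assumption made for illustration: the `LogEntry` fields, the two helper functions, the 20-point confidence bands, and the sample entries, which are invented and not real data.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class LogEntry:
    """One row of a hypothetical confidence-accuracy log."""
    topic: str
    confidence: int  # percent, 0-100, written down BEFORE the AI session
    correct: bool    # filled in afterwards: did your initial answer hold up?


def overconfidence(entries: list[LogEntry]) -> float:
    """Mean stated confidence minus hit rate, in percentage points.

    Positive means you were more confident than accurate.
    """
    if not entries:
        raise ValueError("log is empty")
    mean_conf = sum(e.confidence for e in entries) / len(entries)
    hit_rate = 100 * sum(e.correct for e in entries) / len(entries)
    return mean_conf - hit_rate


def hit_rate_by_band(entries: list[LogEntry], width: int = 20) -> dict[int, float]:
    """Group entries into confidence bands and report the hit rate in each.

    Keys are the lower edge of each band; 100% is folded into the top band.
    """
    bands: dict[int, list[bool]] = defaultdict(list)
    for e in entries:
        low = min(e.confidence // width * width, 100 - width)
        bands[low].append(e.correct)
    return {low: 100 * sum(hits) / len(hits) for low, hits in sorted(bands.items())}


# Invented sample entries, for illustration only.
log = [
    LogEntry("Bayes' rule", 90, True),
    LogEntry("SQL window functions", 90, False),
    LogEntry("indemnity clauses", 80, False),
    LogEntry("regression residuals", 60, True),
]

print(overconfidence(log))    # gap between mean confidence and hit rate
print(hit_rate_by_band(log))  # hit rate within each confidence band
```

With a well-calibrated log, the hit rate in each band would sit inside that band. A band where you said 80-100% but were right far less often is a good candidate for an AI-free session.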

Regular "AI-free" sessions. Schedule time to work through problems without AI assistance — not because AI is bad, but because the struggle is doing metacognitive work. Your sense of what you actually know and don't know comes largely from encountering your limits. If AI always prevents you from encountering them, you stop knowing where they are.

The "source or synthesis?" question. When you use AI output in your work, ask yourself: Am I using this because I understand it and it's correct, or am I using it because it sounds right and I trust the tool? These feel similar from the inside but have very different implications. If you can't defend a claim the AI generated without referencing the AI, that's a sign you don't own that knowledge yet.

Teach it to someone else. The Protégé Effect research confirms what teachers have always known: explaining something to someone else is the highest-fidelity test of whether you understand it. This doesn't have to be formal. Explaining a concept you just learned to a friend, a colleague, or even just in a voice memo forces the kind of organization and verification that passive reading never does.

Remember: The goal of metacognitive practice isn't to be hard on yourself or to reject AI assistance. It's to stay honest about what you actually know — because that honesty is what lets you grow, rather than just feel like you're growing.

The Deeper Point

There's something almost paradoxical about metacognition and AI. The better AI gets at producing competent outputs, the more important your own metacognitive skills become — because the external signals that normally calibrate your self-knowledge (struggle, error, effort) are increasingly handled by the machine.

This means the people who thrive in an AI-augmented cognitive environment won't be the ones who use AI most fluently. They'll be the ones who maintain the sharpest sense of what they personally understand versus what they're borrowing from the machine — and who use that distinction to drive genuine learning rather than merely efficient output.

That's a harder thing to build than a good prompt template. But it's also more durable, more transferable, and more genuinely yours. The chapters ahead will give you specific techniques for using AI as a real learning accelerator. But all of them depend on the metacognitive foundation you build here — the habit of staying honest about what you actually know.

The laptop closing, the rubber duck explaining, the confidence logging: these aren't busywork. They're the practices that keep your thinking sharp even as you delegate more of it to machines. Think of them as the cognitive equivalent of staying in shape while commuting by car: the car gets you there faster, but you still need to be able to walk once you arrive.
