How Tools Extend Your Thinking and Memory

The Extended Mind: You Have Always Thought with Tools

In the previous section, I suggested that AI is fundamentally a mirror for your thinking — or a vending machine. The difference between those two outcomes depends on how you use it. But before we can understand how to use AI as a cognitive amplifier, we need to get honest about what "your thinking" actually is, and where it happens.

Here's a question worth sitting with for a moment: Where does your mind end? Most people, if pressed, would gesture somewhere around their skull. Your mind is the thing inside your head — the electrochemical symphony playing out across roughly 86 billion neurons. Everything else — your phone, your notebook, your bookmarked tabs, your to-do list pinned to the fridge — is external. Tools. Aids. Crutches, maybe.

This intuition feels obvious. It also happens to be wrong, or at least far less obviously right than it seems, and understanding why matters enormously for how we think about AI.

Over the past few decades, philosophers and cognitive scientists have built a surprisingly robust case that human cognition has never been a solo act performed inside a sealed biological container. We have always thought with our environment, offloading, extending, and distributing cognitive work across bodies, objects, and other people. This insight reframes the entire question: the worry that using AI is some dramatic departure from "real" human thinking rests on a premise about how minds work that doesn't survive scrutiny.

The technical term for this is extended mind theory, and here's the core claim: your mind doesn't end where your skull does. Cognition is something that happens between you and your environment. When you think, you're not just using your brain; you're recruiting the world around you as part of the process. On this view, your brain and your tools form a dynamically coupled system, and the environment is part of the cognitive process itself rather than a mere aid to it.

Now, extended mind theory doesn't say that everything in your environment automatically becomes part of your mind. The coupling has to be tight, reliable, and functional. You have to actually use the tool as a cognitive partner, not just have it available. And it doesn't claim that external resources are identical to biological ones in every respect — only that the line between "inside the head" and "outside the head" is a much less useful boundary than we typically imagine.

Remember: Extended mind theory isn't saying your tools make you smarter as a metaphor. It's a literal philosophical claim: the boundary of cognition doesn't stop at the skull. Human thinking has always extended into and recruited the world around it.

A Brief History of Cognitive Tools (And the Panics They Caused)

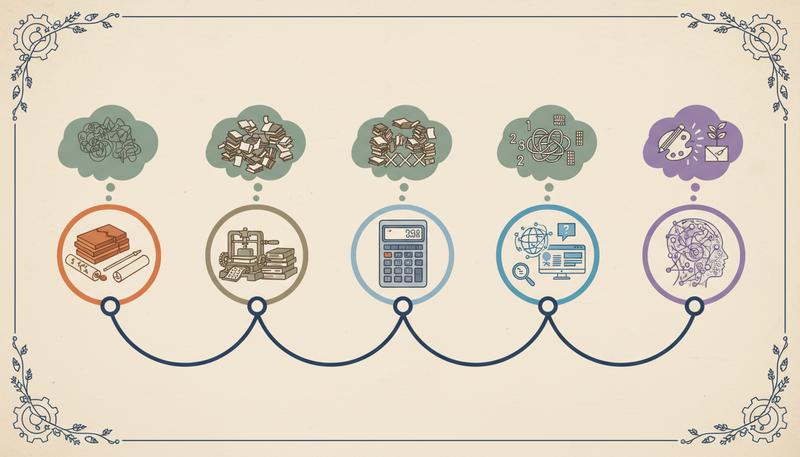

If extended mind theory is correct, then human cognitive history isn't really a story of naked brains doing increasingly impressive things. It's a story of increasingly powerful cognitive partnerships — with clay, paper, presses, and now code.

And here's the pattern: every major cognitive technology, without exception, triggered roughly the same anxious response, namely that this new tool will make us lazy, shallow, and dependent. The worry is as old as literacy itself.

As Clark's Nature Communications piece notes, in Plato's Phaedrus — written around 370 BC — we find Socrates warning that writing will have catastrophic effects on human memory. If people can write things down and look them up, they'll stop actually knowing things. They'll have "the show of wisdom without the reality." The fear was genuine: Socrates believed that true knowledge required internalized understanding, not access to external records.

From our vantage point, this fear looks almost quaint. Writing didn't destroy cognition. It extended what cognition could accomplish, dramatically, in ways that turned out to be incalculably beneficial. Literacy enabled complex legal systems, accumulated science, philosophy, literature, long-distance coordination. The things we can think because writing exists vastly outweigh whatever we might have lost in raw mnemonic muscle.

The pattern repeated itself, reliably. The printing press, which made writing vastly more accessible in the 15th century, triggered anxieties about cognitive overload, loss of careful reading, and the collapse of authoritative knowledge. (Sound familiar?) The calculator, when it arrived in classrooms in the 1970s, prompted serious debates about whether students would lose the ability to reason numerically. The internet brought a new round of panic: Nicholas Carr's influential 2008 article "Is Google Making Us Stupid?" became a cultural flashpoint, later expanded into The Shallows, which argued that constant hyperlinked reading was rewiring our brains for distraction and away from deep focus.

Each of these technologies changed cognition. None of them, by any honest accounting, impoverished it overall. What they did was shift the landscape — making some things less necessary to hold in biological memory while opening up vast new territories of thinking that simply weren't accessible before.

Tip: When you feel the impulse to say "AI is different — this time the concern is real," hold that thought. It is different in important ways (we'll get there). But so was every previous cognitive technology, and the baseline fear has been wrong before. Get precise about what is different before deciding whether the worry is warranted.

Three Case Studies in Cognitive Outsourcing

Rather than staying abstract, let's look at three tools that illustrate the spectrum of cognitive extension — and what the research actually shows about their effects.

The Notebook

The humble notebook is the purest example of cognitive extension without much controversy. When you write something down, you're externalizing memory — offloading the storage burden so that biological working memory can focus elsewhere. But notebooks do more than store. The act of writing, for many people, clarifies thinking. You don't write down what you already know clearly; you write to figure out what you think. The page becomes a thinking partner.

Research on note-taking bears this out in surprisingly specific ways. Studies comparing handwriting to typing find that typing allows faster transcription but handwriting tends to produce better comprehension and retention — likely because when you can't write fast enough to transcribe verbatim, you have to process and paraphrase, which is itself a cognitive act. The constraint of the slower tool forces more engagement.

This is an early hint at a pattern we'll return to throughout this course: cognitive tools don't automatically enhance thinking. The how matters enormously. A notebook used mindlessly for verbatim transcription is cognitively different from a notebook used as a thinking canvas.

The Calculator

Calculators became a genuine flashpoint in education. The worry was specific and reasonable: if students use calculators, they won't develop numerical fluency. They'll be dependent on a device that can fail, run out of battery, or be unavailable when needed.

Some of this worry held up in the research. There's decent evidence that calculator use, when introduced too early, can interfere with developing basic number sense and arithmetic fluency. But there's also evidence that when calculators free students from computational drudgery, they can tackle more complex mathematical reasoning — building conceptual understanding that might otherwise get swamped by procedural demands.

The lesson isn't "calculators good" or "calculators bad." It's that the cognitive effect depends on what is being offloaded, by whom, and at what stage of learning. Offload the wrong thing, at the wrong time, and you undermine skill development. Offload procedural overhead at the right time, and you free up capacity for higher-order thinking.

The GPS

GPS navigation is the most frequently cited contemporary example of potentially harmful cognitive offloading. Research has shown that people who rely heavily on GPS navigation show reduced ability to build accurate cognitive maps, the mental models of spatial relationships that would allow them to navigate without the device. The flip side is visible too: one widely cited study found that London taxi drivers, who must memorize thousands of routes for a notoriously demanding licensing exam, had larger posterior hippocampi than non-taxi drivers, suggesting that extensive navigation practice produces measurable brain changes.

But before you throw your phone into the sea, consider what GPS actually enables. For most people, navigation is not a skill they want to develop to professional levels. The cognitive resources freed by GPS can go elsewhere. The real question is whether there's a skill you actually need that GPS is eroding — and for most people, most of the time, there isn't.

graph TD
A[Cognitive Tool Introduced] --> B{What type of cognitive work is offloaded?}
B --> C[Procedural / rote / retrieval]
B --> D[Integrative reasoning / judgment / creativity]
C --> E[Usually beneficial: frees higher-order thinking]
D --> F[Higher risk: may prevent essential skill development]
E --> G[Net cognitive gain if higher-order work actively engaged]
F --> H[Net cognitive loss if higher-order work not exercised]

The GPS case is instructive precisely because it shows that the concern isn't entirely unfounded. Cognitive tools can atrophy specific skills. The real question is always: which skills, how important are they to you, and is something else valuable being gained in their place?

Why "It's Cheating" Is Philosophically Confused

One of the most common intuitions about AI assistance — especially in educational contexts — is that using it is somehow cheating. That doing something with AI help isn't really you doing it.

This intuition has a real kernel worth taking seriously (more on that in a moment), but as a philosophical claim it runs into serious problems.

Consider: Is using a word processor cheating? Is spell-check cheating? Is looking up a word in a dictionary cheating, versus knowing it off the top of your head? If you work through a math problem using a whiteboard rather than purely in your head, does the whiteboard get credit for the answer?

These questions sound silly, but that's the point. The "cheating" frame assumes there is some pure, tool-free version of cognition that represents the real you, and anything external is contaminating it. But if extended mind theory is right — if human cognition has always been a coupled system of biological and external resources — then this pure tool-free baseline is a fiction. There is no unaugmented you being displaced by AI. There is only you, with different tools, doing different cognitive work.

Research on AI and cognitive enhancement frames this exactly right: using AI as a cognitive extension is philosophically continuous with what humans have always done. The anxieties it generates are recognizable as the same pattern that attended every previous cognitive technology.

The more productive framing isn't "is this cheating?" but "is this helping me develop the capacities I actually need?" Those are very different questions. The first is about authenticity, built on a questionable premise. The second is about effectiveness and growth.

Warning: Dismissing the "cheating" worry entirely is a mistake, though. The intuition is pointing at something real — it's just not pinpointing it accurately. What matters isn't whether AI is involved, but whether you're building genuine understanding or creating a convincing simulacrum of it. We'll spend much of this course learning to tell the difference.

The Critical Distinction: Extension vs. Replacement

Here is where the extended mind framework does its most important work for our purposes.

There's a crucial difference between cognitive extension — using tools to expand what your thinking can accomplish — and cognitive replacement — having the tool do the thinking instead of you. The distinction sounds simple, but it's genuinely difficult to maintain in practice, and AI makes it harder than any previous tool.

When Otto, the notebook-reliant character in Clark and Chalmers's classic thought experiment, writes in his notebook, the notebook stores information that he then reasons about. The retrieval is automated; the reasoning isn't. When you use a calculator, the computation is automated; the problem-setup, interpretation, and judgment remain yours. When you use GPS, route-finding is automated; destination-choosing, context-reading, and decision-making remain yours.

At each step, there's a clear demarcation: here's what the tool does, here's what I do.

Generative AI complicates this in a way that earlier tools didn't. As the research published in Nature Communications highlights, LLMs don't just automate discrete procedures or information retrieval — they can perform integrative reasoning, synthesize across domains, generate arguments, and produce coherent prose on demand. The tool's reach extends much further into the cognitive territory that we'd previously reserved for humans alone.

This is what makes the question "is AI cognitively extending or replacing me?" genuinely hard to answer in any given moment. Ask an earlier tool to write an essay for you and you'd get nothing. Ask a language model, and you get something plausible. The apparent output looks the same whether you've been cognitively active or entirely passive. That opacity is new, and it matters.

The research on AI and education introduces a useful framing here: the distinction between offloading procedural or retrieval-based cognitive work (generally a reasonable trade-off, analogous to what earlier tools did) and offloading integrative reasoning (where the risks are substantially higher, because that's the cognitive work that builds the durable understanding we actually need).

The framework that emerges from putting these ideas together looks something like this:

- Cognitive extension: AI handles what it's good at (retrieval, synthesis of large information sets, pattern recognition across huge corpora, rapid prototyping) while you handle what you're good at (judgment, values, contextual reasoning, the things that require genuine understanding). The tool amplifies your reach without replacing your agency.

- Cognitive replacement: AI does the integrative work that would otherwise develop your capacities, and you become a passive consumer of its outputs. The tool substitutes for your thinking rather than extending it.

The central practical goal of this course is to help you stay firmly in the first category while using AI that is powerful enough to pull you toward the second.

What Makes AI Genuinely Different (And Why It Matters)

Having established that AI is philosophically continuous with previous cognitive tools, we should not overcorrect into complacency. AI is different. Meaningfully so.

Every previous cognitive tool extended one or a few specific cognitive functions. Writing extended memory and communication. Calculators automated numerical computation. Search engines automated information retrieval. GPS automated spatial navigation. Each was powerful, but each was narrow. You couldn't ask a calculator to draft a legal argument or ask a search engine to explain its reasoning.

Large language models are something qualitatively different: they're broadly capable across an enormous range of cognitive domains. They can draft, reason, explain, translate, code, critique, summarize, argue, and generate. They're not narrow — they're general-purpose. And they're available to anyone with an internet connection, right now, for free or nearly so.

This generality is what makes AI both more exciting and more dangerous than previous cognitive tools. More exciting because the potential for genuine cognitive extension is vastly larger than anything we've had before. More dangerous because the surface area for cognitive replacement is also vastly larger. An AI that can do almost anything cognitive is also one that can invisibly take over almost any cognitive task, leaving you with the comfortable illusion that you thought when you didn't.

There's also the question of interaction design. Earlier cognitive tools had certain frictions built in — you had to do something to engage them productively. Writing takes effort. Calculators require you to set up the problem. Search requires you to formulate a query and evaluate results. Generative AI, by contrast, rewards the path of least resistance: ask vague questions, get plausible-sounding answers, move on. The tool is so capable that it can produce outputs that feel satisfying even when you've barely engaged with the problem at all.

This is why the extended mind framework is necessary but not sufficient. It establishes that using AI as a cognitive partner is not philosophically suspect — it's a natural extension of what humans do. But it doesn't tell you how to use AI as a genuine extension rather than a replacement. That's what the rest of this course is for.

Reframing the Right Questions

If you've absorbed the extended mind framework, you're now holding a different map of the terrain. And different maps suggest different questions.

Instead of asking "Is it okay to use AI?" — a question that smuggles in a problematic model of pure, unaugmented intelligence — the better questions are:

- What cognitive work am I doing, and what is the AI doing? Is there genuine division of cognitive labor, or am I a passive consumer?

- What capacities am I building or maintaining? Is this use of AI developing skills I need, or am I avoiding the productive struggle that builds them?

- What am I actually contributing? Are my judgment, context, and understanding genuinely guiding the process, or is the AI's output driving it?

- Could I do this without the AI, at least at some level? Not as a purity test, but as a check on whether genuine understanding is present.

These questions point toward something we'll develop throughout this course: a deliberate, metacognitive approach to AI use that keeps you in the cognitive driver's seat even when the AI is doing a lot of the work.

The ancient anxiety — that tools will make us lazy and shallow — isn't entirely unfounded. It identified a real risk. What it got wrong was the conclusion: the answer to that risk isn't to refuse the tools. It's to use them wisely. Socrates was wrong about writing, but he wasn't wrong that genuine understanding matters and that the appearance of knowledge can be confused for the real thing.

Plato, ironically, wrote everything down.

We are natural-born cyborgs who have been extending our minds with tools since before recorded history. AI is the latest — and most powerful — tool in that lineage. The question has never been whether to extend the mind. The question has always been how to extend it well.

That's what the rest of this course is about.
