Why AI Works Better for Experts Than Beginners

The Expertise Paradox: Why AI Helps Experts More Than Beginners (and What to Do About It)

You've got a concrete system for learning with AI now. But there's one more thing that changes everything: where you actually are in your learning journey. The spacing schedules, the retrieval practice, the protégé effect we covered in Section 9 — they all work differently depending on how much you already know when you start. And this is where the research gets genuinely counterintuitive.

Here's the thing that stops people: the people who benefit most from AI are the ones who need it least. This isn't a dig at AI. It's a crucial clue about how to use it wisely. Researchers have consistently found that expertise level is one of the strongest predictors of whether AI augments your thinking or quietly undermines the learning systems we just mapped out in Section 11. Experts use AI and get faster, sharper, more productive. Novices use the same tool for the same tasks and (this is the uncomfortable part) often end up knowing less, understanding less deeply, and being less capable of independent thought than if they'd actually struggled through the spacing and retrieval cycles we outlined earlier. This is the expertise paradox, and it might be the single most important thing to understand before structuring your AI-augmented learning path.

What the Research Actually Shows

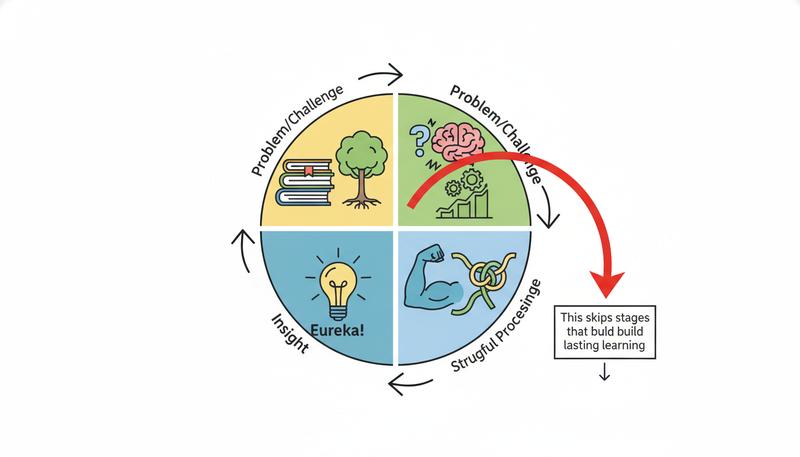

When you can't immediately solve a problem — when you're reaching for a word in a foreign language, trying to remember a theorem, working through a logical chain that keeps slipping away — your brain isn't just retrieving information. It's strengthening retrieval pathways, building associations, and slowly constructing the kind of rich, interconnected knowledge that experts have.

Cognitive scientists call this desirable difficulty. The friction is the whole point. When you struggle to recall something and then succeed, that memory encodes more durably than if you'd just read the answer. When you work through a math problem with several false starts, you're not just solving one problem — you're building a schema for that problem type that you'll instinctively recognize next time.

Here's what careless AI use does to that process: it removes the struggle before the struggle can do its job.

Ask an AI to explain a concept you're wrestling with, and the friction vanishes — but so does the learning signal. Your brain doesn't need to search, fail, reconstruct, or find connections. It just receives a clean answer. Knowledge arrives without being earned, and unearned knowledge is fragile. It looks like understanding when it's sitting in your working memory. It evaporates within days.

Experts aren't immune to this — they can certainly outsource too much and let specific skills atrophy. But they have something novices don't: a rich schema already built. When an expert asks AI to explain how a new Python library works, they're not building programming understanding from zero. They're adding a new room to a well-constructed house. The foundation is already there. The novice, meanwhile, is still pouring concrete — and AI is offering to skip that part entirely.

What Actually Counts as "Expert"?

The labels "expert" and "novice" are slippery, and the expertise paradox gets stranger once you notice that expertise doesn't operate at a global level. You're not simply an expert or a novice across everything. You're a mosaic of different competency levels.

A cardiologist with thirty years of practice is a genuine expert in cardiac medicine. She's a complete novice in contract law. A software engineer who's shipped ten production systems is an expert in backend architecture — and an absolute beginner in watercolor painting. This should be obvious, but people forget it constantly when they pick up an AI tool, treating their general intelligence (which is real) as a substitute for domain-specific expertise (which takes real time to build).

What actually makes someone an expert in a given domain? A few markers worth noticing:

Pattern recognition speed. Experts see problem types almost instantly: they recognize "this is a dynamic programming problem" or "this patient has signs of an autoimmune response, not an infection" before they've consciously analyzed all the details. Novices see an undifferentiated mass of information.

Mental models. Experts have rich internal representations of how the domain works — causal relationships, common failure modes, exception cases, the history of why things are the way they are. These models let them evaluate new information critically. Novices are still building these models.

Productive uncertainty. Paradoxically, experts are often more comfortable saying "I don't know" precisely because they know enough to recognize where their knowledge ends. Novices frequently don't know what they don't know, which is exactly why they're vulnerable to AI overconfidence.

Transfer. Experts can apply principles from one context to a new, unfamiliar context with reasonable accuracy. Novices struggle because they haven't yet abstracted the underlying principles from the specific examples they've learned.

Remember: You're an expert-novice mosaic, not one or the other. The question isn't "am I an expert?" — it's "what is my expertise level in this specific domain, at this level of specificity?" A novelist might be an expert at scene-level prose but a genuine novice at plotting — and those require different AI strategies.

Mapping Your Expertise Landscape

Before you use AI for any learning goal, do a brief but honest audit of where you actually stand. Here's a simple framework:

Level 0 — Blank Slate: You have essentially no prior knowledge. You don't know the vocabulary, the basic structure, or what questions are even worth asking. You can't evaluate whether information you receive is accurate.

Level 1 — Orientation: You understand the general shape of the domain. You know the major subdivisions, some key terminology, and could have a basic conversation. But you don't yet have mental models, and you can't evaluate nuanced claims.

Level 2 — Functional: You can do basic tasks with effort. You understand enough to know when something seems wrong. You have working knowledge that transfers in limited ways. You know some gaps; you probably don't know others.

Level 3 — Competent: You can handle a wide range of problems independently. Your mental models are reasonably accurate. You know your gaps. You can evaluate new information with reasonable reliability.

Level 4 — Expert: You see patterns others miss. Your mental models are rich and tested. You understand the history and debates in the field. You can mentor others effectively. You know the field's failure modes.

Take five minutes right now and map yourself across three to five domains or skills you're actively working on. Don't just pick the label you want — ask yourself the diagnostic questions: Can I catch errors in this domain? Do I have working mental models? Can I evaluate sources critically?

graph TD
    A[New Learning Goal] --> B{Expertise Level?}
    B -->|Level 0-1: Blank/Orientation| C[Use AI Sparingly]
    B -->|Level 2: Functional| D[Selective AI Use]
    B -->|Level 3-4: Competent/Expert| E[Leverage AI Freely]
    C --> F[Focus: Build mental models manually]
    C --> G[AI Role: Explain, don't solve]
    D --> H[AI Role: Scaffolded challenge]
    D --> I[Reduce AI on core skills]
    E --> J[AI Role: Speed, scale, synthesis]
    E --> K[Watch for atrophy in sub-skills]
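If you like to keep your learning plans in code, the flowchart above can be read as a simple lookup. Here's a minimal sketch in Python. The names `AIStrategy` and `strategy_for` are made up for illustration, and the branch labels are paraphrased from the diagram, so treat it as one possible encoding rather than a prescribed tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIStrategy:
    stance: str                 # overall posture toward AI for this branch
    guidance: tuple[str, ...]   # the two leaf nodes under that branch


# Labels paraphrased from the flowchart; the grouping keys are hypothetical.
_STRATEGIES = {
    "early": AIStrategy(
        "Use AI sparingly",
        ("Focus: build mental models manually", "AI role: explain, don't solve"),
    ),
    "middle": AIStrategy(
        "Selective AI use",
        ("AI role: scaffolded challenge", "Reduce AI on core skills"),
    ),
    "later": AIStrategy(
        "Leverage AI freely",
        ("AI role: speed, scale, synthesis", "Watch for atrophy in sub-skills"),
    ),
}


def strategy_for(level: int) -> AIStrategy:
    """Map a self-assessed expertise level (0-4) to a flowchart branch."""
    if not 0 <= level <= 4:
        raise ValueError("level must be between 0 and 4")
    if level <= 1:
        return _STRATEGIES["early"]
    if level == 2:
        return _STRATEGIES["middle"]
    return _STRATEGIES["later"]


# Usage: one person, several domains, several different strategies.
my_levels = {"backend architecture": 4, "watercolor painting": 0}
for domain, level in my_levels.items():
    print(domain, "->", strategy_for(level).stance)
```

The point of keying the lookup by domain is the mosaic idea: the same person gets a different branch for each skill they're working on.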

The Deliberate Struggle Principle

Once you know your expertise level in a domain, you can apply what I'd call the deliberate struggle principle: identify which cognitive efforts are actually load-bearing for your development, and protect them from AI assistance — even when the shortcut is tempting.

This requires specificity. "Don't use AI when learning" is too blunt. The real question is: which particular struggle is doing the learning work right now?

For a beginning language learner, the load-bearing struggle is vocabulary retrieval — reaching for the word in Spanish, sitting with the discomfort of not having it, making an attempt, checking, and repeating. An AI that gives you the word instantly every time you hesitate has just turned a learning moment into a lookup operation.

For a beginning programmer, the load-bearing struggle is debugging: reading error messages, forming hypotheses about what went wrong, testing them, and updating your mental model of how the code works. An AI that fixes your bugs on demand removes the exact process that teaches you how bugs happen and how code actually executes. You end up with code that runs but understanding that doesn't.

For a medical student, the load-bearing struggle is differential diagnosis — holding multiple possibilities in mind simultaneously, weighing evidence, considering what would rule each one in or out. An AI that generates the differential for you skips exactly the cognitive exercise that builds clinical reasoning.

In each case, the thing you're tempted to outsource is the thing you most need to do yourself.

Warning: The clearest signal that you're about to outsource a load-bearing struggle is feeling stuck and uncomfortable. Discomfort isn't evidence that you need AI help. It's often evidence that the learning is working. Reach for AI assistance only after you've genuinely engaged with the problem — not as your first instinct.

This doesn't mean struggling forever on dead ends. It means giving yourself time to engage before reaching for help, and when you do use AI, using it to illuminate your error rather than erase it. "Here's what I tried and where I got stuck — help me understand what I'm missing" is infinitely more valuable than "solve this for me."

The Scaffolding Dial: A Dynamic System

The expertise paradox isn't static — it shifts as you develop. The right level of AI assistance at Level 0 is different from Level 2, which is different from Level 4. Think of it as a dial that gradually turns from "low AI reliance" toward "high AI leverage" as your competence builds.

This is actually how good human teachers work. When you apprentice with a master craftsperson, they don't hand you the finished product and say "watch how I did it." They give you progressively harder tasks, withdraw scaffolding as you develop, and let you fail in ways that teach without breaking you. As you grow more capable, they give you more autonomy and trust your judgment more.

You can design the same dynamic with AI:

Early phase (Levels 0-1): Use AI primarily for orientation — getting the lay of the land, understanding vocabulary, generating initial questions. Avoid using AI to solve problems you haven't attempted. When you do use AI, ask it to explain reasoning, not just provide answers. Spend significant time with primary sources, human teachers, and direct practice.

Middle phase (Level 2): Use AI to check your work after you've done it independently. Use it to generate more practice problems. Use it to explain why your approach was wrong when you were wrong — not to give you the right approach before you've tried. Start using AI to explore edge cases and exceptions you wouldn't have found on your own.

Later phase (Levels 3-4): Now you can lean on AI much more freely. Your mental models are solid enough to evaluate AI output critically. You can use AI to accelerate research, synthesize information at scale, stress-test your thinking, and explore adjacent domains. The risk of atrophy is real but manageable if you maintain deliberate practice of the core skills that matter most.

Tip: A simple heuristic for any learning situation: attempt the problem or task yourself first, for a defined time (five to fifteen minutes, depending on complexity). Then, if you use AI, don't just accept the answer — compare it to what you came up with. The comparison is where the learning actually happens.

Domain-Specific Guidelines

Different domains have different shapes of expertise development, which means the scaffolding dial needs different calibration depending on what you're learning.

Writing: The load-bearing struggle in writing is ideation and drafting — finding the words, structuring the argument, making choices about emphasis and omission. Using AI to generate first drafts when you're developing as a writer is like using a wheelchair when you're in physical therapy learning to walk. The exercise is the whole point. Experienced writers with established voices can use AI productively for brainstorming, editing passes, and structural feedback without threatening their core skill.

Programming: For beginners, the critical struggles are understanding what errors mean, debugging independently, and developing a mental model of how code executes. Using AI to fix bugs you haven't diagnosed is deeply counterproductive. For experienced engineers, AI is a force multiplier — it handles boilerplate, surfaces library functions, accelerates code review — without threatening core architectural thinking.

Mathematics: The entire value of math education, at almost every level, is developing problem-solving schema — learning to look at an unfamiliar problem and recognize what approach it requires. AI can skip all of that. Use it only after genuine attempt, and use it to show you where your reasoning broke down, not to generate the solution.

Languages: Vocabulary building, grammar intuition, and pronunciation all require repetitive struggle with discomfort. AI tutoring can be excellent for conversation practice at intermediate levels — it gives you the low-stakes, high-volume exposure that accelerates fluency. At beginner levels, be careful that AI isn't removing the retrieval practice that builds vocabulary into long-term memory.

Creative fields (music, visual art, design): The load-bearing struggles in creative development are aesthetic judgment and craft iteration — making choices, seeing what doesn't work, developing a personal sensibility through failure. AI assistance is tricky at early levels. Use it to expand your exposure to examples and styles, but be cautious about using AI to generate creative output you claim as your own development.

The Apprenticeship Model: Compressing Expert Feedback Loops

Here's where things get genuinely exciting for learners at Level 2 and beyond: AI can dramatically compress the feedback loops that historically took years in traditional apprenticeship.

Historically, developing expertise meant access to an expert who could watch you work and give specific, rapid feedback. That expert was expensive, rare, and often unavailable. Most learners got feedback that was slow (once a week in class), generic ("good work, try harder"), and disconnected from the actual moment of error.

AI changes this. At Level 2 and beyond, you can submit your work to an AI tutor and get specific, immediate, detailed feedback on what you did and why it worked or didn't. The catch, and this circles back to everything we've discussed, is that you need to be far enough along in your expertise to ask good questions and evaluate the feedback you receive.

Research on human cognition suggests that human minds are "better than Bayesian" in important ways: they can detect exceptions, make intuitive leaps, and reason analogically in ways that AI systems do not reliably match. The apprenticeship model works best when you're using AI to supplement the human reasoning you're developing, not replace it.

A productive apprenticeship use of AI at Level 2+ might look like this: You write a paragraph arguing a position. You share it with an AI and ask: "What assumptions am I making that I haven't made explicit? Where would a smart critic push back on my reasoning? What counterargument would you find most compelling?" Now you're using AI to sharpen your thinking rather than produce it. The output is yours. The AI is the grindstone.

Using AI to Diagnose Your Own Expertise Level

Here's a metacognitive trick worth having: you can actually use AI to figure out where you are on the expertise spectrum in a new domain — if you do it carefully.

The approach is a structured diagnostic conversation rather than a request for information. It goes something like this:

Start by asking the AI to give you a brief conceptual overview of the domain — not a textbook summary, but a map of the major questions, debates, and subdivisions. Then ask it to give you a handful of problems or scenarios at different levels of difficulty. Work through them on your own, without AI help, and see where you start to struggle. Then bring your attempts back to the AI and ask: "Based on my approach to these problems, where would you say I am in my understanding? What are the most significant gaps I'm likely to have at this level?"

This is a genuinely useful diagnostic — not because the AI can read your mind, but because the process of attempting the problems and comparing your approach to what the AI explains will surface your gaps clearly. You're using AI as a mirror for your own competence rather than as a source of competence you borrow.
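If you want to reuse the diagnostic conversation across domains, you could template it. The sketch below is only an illustration: the function name `diagnostic_prompts`, the default of five problems, and the "no solutions" instruction are assumptions rather than anything prescribed here, and it just builds text for you to paste into whatever AI tool you use.

```python
def diagnostic_prompts(domain: str, n_problems: int = 5) -> list[str]:
    """Return the three prompts of the diagnostic conversation, in order."""
    overview = (
        f"Give me a brief conceptual overview of {domain}: not a textbook "
        "summary, but a map of the major questions, debates, and subdivisions."
    )
    problems = (
        f"Now give me {n_problems} problems or scenarios in {domain} at "
        "different levels of difficulty. Do not include solutions."  # assumption
    )
    # The last prompt keeps a literal {attempts} placeholder, to be filled in
    # with str.format() only after you have worked the problems without AI.
    review = (
        "Here are my attempts, done without AI help:\n{attempts}\n"
        "Based on my approach to these problems, where would you say I am "
        "in my understanding? What are the most significant gaps I'm likely "
        "to have at this level?"
    )
    return [overview, problems, review]


# Usage: send the first two prompts, work offline, then fill in the third.
overview, problems, review = diagnostic_prompts("contract law")
final_prompt = review.format(attempts="1. ...my written attempt...")
```

Keeping the third prompt as an unfilled template is deliberate: it can't be sent until you've produced your own attempts.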

One caution here: research on AI and metacognition shows that people consistently overestimate how well they're doing when working with AI. The comfort of having AI around — its fluid, confident explanations, its lack of judgment — can make you feel more capable than you are. Build in skepticism. After any AI-assisted learning session, close the AI and test yourself on what you think you learned. That self-testing is the reality check your overconfident brain needs.

Failure as a Feature

We've talked a lot about the costs of avoiding struggle. Let's be direct about the flip side: making mistakes — real mistakes, without AI catching them — is often exactly the right pedagogical experience.

This sounds strange in a world where error correction is instant and embarrassment is optional. But cognitive science has repeatedly found that making an error and then receiving corrective feedback can produce stronger memory than simply being handed the right answer. The emotional signal of "I thought I knew this and I didn't" is a powerful learning trigger. A related finding, the hypercorrection effect, is that errors made with high confidence are especially likely to be corrected once feedback arrives, which helps explain why the exam questions you confidently got wrong tend to be the ones you remember best.

When AI catches your errors before you fully commit to them — before you've submitted, explained to someone else, or discovered through consequences that you were wrong — you lose the hypercorrection signal. The mistake never lands with enough weight to be memorable.

This doesn't mean you should wallow in ignorance or repeat the same errors endlessly. It means designing your learning so that errors have stakes and consequences before AI rescues you. Write your code and run it before asking AI if it's correct. Draft your analysis before asking AI to check it. Try to explain a concept out loud (or in writing) before asking AI to explain it. Commit to an answer, then check.

The feeling of being wrong, updating your understanding, and knowing why you were wrong — that's the whole sequence. AI should help you understand your errors, not prevent you from making them.

Putting It Together: A Decision Framework

When you sit down to use AI for any learning purpose, run through a quick mental checklist:

First: What is my expertise level in this specific domain, at this level of specificity? (Be honest. "I watch a lot of cooking shows" is not the same as knowing how to develop a sauce.)

Second: What is the load-bearing cognitive struggle in what I'm trying to learn right now? What mental work, if I skip it, means I haven't actually learned anything?

Third: Have I attempted this problem or task with genuine effort before reaching for AI? If not, set a timer and try first.

Fourth: Am I using AI to produce an output, or to understand a concept more deeply? (The former is often fine; the latter is what learning actually requires.)

Fifth: After this session, will I be able to do this without AI? If the honest answer is no, recalibrate.
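For readers who track their study sessions in code, the five questions can be written down as a small pre-session check. This is a hedged sketch, not a validated instrument: `SessionPlan`, its field names, and the reminder wording are all hypothetical, and the honesty of the answers is still up to you.

```python
from dataclasses import dataclass


@dataclass
class SessionPlan:
    """Answers to the five checklist questions (field names are hypothetical)."""
    level: int                    # First: self-assessed level, 0-4
    load_bearing_struggle: str    # Second: empty string if not yet identified
    attempted_first: bool         # Third
    goal_is_understanding: bool   # Fourth: False means "produce an output"
    repeatable_without_ai: bool   # Fifth


def checklist_flags(plan: SessionPlan) -> list[str]:
    """Return a reminder for every checklist item that needs attention."""
    flags = []
    if not 0 <= plan.level <= 4:
        flags.append("Re-assess your level: it should be between 0 and 4.")
    if not plan.load_bearing_struggle.strip():
        flags.append("Name the load-bearing struggle before you start.")
    if not plan.attempted_first:
        flags.append("Set a timer and attempt the task yourself first.")
    if not plan.goal_is_understanding:
        flags.append("Output-only session: often fine, but don't count it as learning.")
    if not plan.repeatable_without_ai:
        flags.append("You couldn't do this without AI afterwards: recalibrate.")
    return flags


# Usage: a beginner who skipped the attempt and hasn't named the struggle.
plan = SessionPlan(level=1, load_bearing_struggle="", attempted_first=False,
                   goal_is_understanding=True, repeatable_without_ai=False)
for reminder in checklist_flags(plan):
    print("-", reminder)
```

An empty list means nothing on the checklist objects to the session as planned.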

This isn't about making AI harder to use. It's about using it in ways that actually deliver what you want: not just outputs, but genuine capability — the kind that stays with you when the internet is down, when you're in a meeting and need to think on your feet, when the problem is genuinely new and there's no chatbot that has seen it before.

The expertise paradox isn't a reason to avoid AI. It's a reason to be strategic — to know exactly where you are, to protect the struggles that build you, and to use AI's tremendous power at the moments when it genuinely accelerates rather than replaces your development.

The goal, after all, isn't to have access to a very smart tool. It's to become a very smart person who also has access to a very smart tool. Those two things feel similar in the moment and diverge dramatically over time.
