How to Write Better AI Prompts for Learning

Prompt Engineering for Learners: Getting the AI to Do What You Actually Need

You've now learned how to use the Cognitive Mirror: teaching the AI what you know, spaced over time, with increasing difficulty. But that method depends on a skill we haven't covered yet: the ability to write prompts that support your thinking rather than bypass it.

There's a version of this section that would teach you how to write prompts that extract maximum information from AI. That's not what this is. What we're actually after is how to write prompts that make the teach-back loop more effective — prompts that expose gaps in your understanding, prompts that force you to be precise about what you're trying to learn, prompts that turn the AI into genuine scaffolding for your own thinking rather than a shortcut around it. An output-optimized prompt tries to get the most impressive response. A learning-optimized prompt, by contrast, sometimes deliberately asks the AI to do less so that you have to do more.

Most prompt engineering guides are written for developers, productivity hackers, or people who want to automate tasks. This section is for learners who want to use prompts as a tool for deeper understanding. The stakes are different: when you're building knowledge and skill through the teach-back method, the quality of your prompts doesn't just affect what the AI says. It affects whether each iteration of your teach-back cycle makes you smarter or just makes you feel smarter — which, as we've established, are two very different things.

Here's the key insight: writing a good prompt isn't overhead. It is the learning. The act of formulating a precise question forces you to audit your own understanding before the AI has said a word.

The Anatomy of an Effective Learning Prompt

The most useful frame I've found is to think of every learning prompt as having four components: role, context, constraint, and format. You don't need all four every time, but knowing which ones you're omitting — and why — helps you write better prompts deliberately rather than accidentally.

```mermaid
graph TD
    A[Your Learning Goal] --> B[Role: Who should the AI be?]
    A --> C[Context: What do you already know?]
    A --> D[Constraint: What limits should apply?]
    A --> E[Format: How should the response look?]
    B --> F[Effective Learning Prompt]
    C --> F
    D --> F
    E --> F
    F --> G[Active, Critical Engagement]
```

Role means telling the AI what kind of interlocutor you need. "You are a skeptical peer reviewer" produces a very different response than "you are a patient tutor" — even with identical questions. The role shapes not just the tone but the epistemic stance. A skeptical reviewer looks for holes. A tutor looks for clarity. A Socratic questioner resists giving direct answers. Match the role to your actual learning need.

Context means giving the AI enough information about where you are so it can meet you there. What do you already understand? What have you tried? What course or book are you working from? Without this, the AI has to guess your level, and it often guesses wrong — either patronizing you or assuming background knowledge you don't have. Cognitive science research on tutoring consistently shows that the most effective instruction is adaptive: it starts from the learner's current state, not a generic "beginner" or "advanced" category.

Constraint is the secret ingredient most learners skip. We'll dig into this more in a moment, but the short version is: adding restrictions to your prompt often produces better AI responses for learning purposes, not worse ones. "Explain this without using any analogies" forces the AI to engage with the actual structure of the concept. "Limit your answer to three sentences" prevents you from getting a wall of text you'll passively absorb instead of actively process.

Format means specifying how you want the information structured. Should it give you a numbered list? A dialogue? A series of questions for you to answer? A comparison table? Format matters more than most people realize because it determines how you'll interact with the response — whether you'll read it passively or engage with it actively.

Compare these two prompts about the same topic:

Weak prompt: "Explain sunk cost fallacy."

Strong prompt: "I'm studying cognitive biases and I understand the basics of loss aversion, but I'm fuzzy on how sunk cost fallacy specifically relates to future decision-making versus past investment. Act as a skeptical professor. Don't give me a definition — instead, give me two contrasting scenarios where a smart person would make different choices based on whether they understand the sunk cost fallacy or not. Then ask me which choice is correct and why."

The second prompt takes thirty seconds longer to write. It will teach you dramatically more, and here's why: it forces you to engage with the material before you've even seen the answer.
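If it helps to see the four components as separate slots, here is a minimal Python sketch. The function `build_learning_prompt` and its parameters are hypothetical names for illustration, not part of any library. It joins whichever components you supply and reports the ones you left out, so that every omission is a deliberate choice rather than an accident.

```python
from typing import List, Optional, Tuple


def build_learning_prompt(
    role: Optional[str] = None,
    context: Optional[str] = None,
    constraint: Optional[str] = None,
    response_format: Optional[str] = None,
) -> Tuple[str, List[str]]:
    """Join the supplied components into one prompt and list the ones left out.

    Hypothetical helper for illustration; not part of any library.
    """
    # Context comes first, mirroring the strong prompt above; then the role,
    # then the restrictions, then the requested shape of the response.
    components = [
        ("context", context),
        ("role", f"Act as {role}." if role else None),
        ("constraint", constraint),
        ("format", response_format),
    ]
    used = [text for _, text in components if text]
    omitted = [name for name, text in components if not text]
    return " ".join(used), omitted


prompt, omitted = build_learning_prompt(
    role="a skeptical professor",
    context=(
        "I understand the basics of loss aversion, but I'm fuzzy on how the "
        "sunk cost fallacy relates to future decisions versus past investment."
    ),
    constraint="Don't give me a definition.",
    response_format=(
        "Give me two contrasting scenarios, then ask me which choice is "
        "correct and why."
    ),
)
print(prompt)
print("Omitted:", omitted)  # prints: Omitted: []
```

Calling it with only `context` returns `["role", "constraint", "format"]` as the omitted list, which is the point of the exercise: you see exactly which components you chose to skip.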

Common Prompt Mistakes That Turn AI Into an Answer Machine

Every learner falls into certain bad prompt patterns. Recognizing them is the first step to breaking free.

The open-ended summons. "Tell me about X." "Explain Y to me." "What is Z?" These put you in passive reception mode before you've even started. They signal: AI, you drive. And the AI will drive — straight into a confident, comprehensive overview that slides right past your brain like water off a windshield.

The verification request. "Is my understanding of X correct?" — stated without actually giving your understanding. This sounds like active engagement but often isn't. It's really just an invitation for the AI to validate you with a "yes, and here's some more context" or correct you with an explanation that's no better than anything you could have found yourself. Better approach: State your understanding in detail first, then ask the AI to critique it specifically.

The summary trap. Asking AI to summarize something you haven't read yet. As Scott Young notes in his ChatGPT learning guide, summaries are one of the things AI genuinely does well — which is exactly why leaning on them too early is dangerous. Summaries are most valuable when you have a prior mental model to hang them on. Otherwise, you're just collecting someone else's compression of ideas you haven't yet formed your own relationship with.

The confirmation loop. Asking the AI to "help you understand" something when what you really want is to be told you're right. This one's subtle and often unconscious. Watch for it when you notice yourself feeling satisfied with AI responses without ever having your thinking challenged.

The completeness request. "Give me everything I need to know about X." This almost guarantees passive consumption. There's no question being answered here — just a content firehose. And because AI completeness looks like expertise, you'll feel like you've covered the ground even when you've only skimmed the surface.

Warning: The prompts that feel easiest to write are usually the ones least likely to make you think. "Explain this to me" is cognitively cheaper than "Here's my current understanding — tell me specifically where I'm wrong." But the second prompt is where the actual learning lives.

The Specificity Principle in Practice

Specificity is the single highest-leverage variable in learning prompts. Not because specific questions get more accurate answers (though they do), but because specific questions require you to think clearly enough to ask them.

Here's a test you can run right now. Before your next AI conversation about a learning topic, write down your question in plain language. Then ask yourself: Could this question be answered by five different people in five completely different ways, all legitimately? If yes, the question is underspecified.

Watch what happens when you revise:

- "What's wrong with my business model?" → "I'm building a subscription business targeting small law firms. The value prop is time savings on contract review. My current concern is whether the lifetime value (LTV) of a customer will ever comfortably exceed my customer acquisition cost (CAC), given the sales cycle length. What are the three most important variables I should be modeling?"

- "Help me understand thermodynamics." → "I understand that entropy relates to disorder, but I don't understand why entropy always increases rather than decreasing back toward order — specifically, what stops molecules from accidentally returning to a low-entropy state?"

- "Explain the French Revolution." → "I'm trying to understand why the Estates-General failed to solve France's fiscal crisis in 1789 — specifically, why the First and Second Estates refused compromises that would have preserved more of their power than they ultimately kept."

Notice what each revision requires: you have to know enough to know what you don't know. This is the specificity principle's hidden benefit — it forces you into a metacognitive loop before you even send the prompt. The thinking that goes into writing the prompt is where the learning starts.

Constraint Prompts: How Restrictions Make AI More Useful for Learning

This is one of the more counterintuitive ideas in this section, so let's sit with it for a moment.

Adding constraints to your prompts doesn't limit the AI's usefulness — it redirects it. Specifically, it shifts the AI from "give me the comprehensive answer" mode into "help me engage with the edges and tensions of this idea" mode. The comprehensive answer is often the least educational one, because it's designed to terminate your inquiry, not continue it.

Here are constraint types that consistently produce better learning responses:

Length constraints. "Explain this in no more than three sentences" forces both you and the AI to identify what actually matters. Then you can ask "what got left out and why?" — which is often where the most interesting learning lives.

Analogy bans. "Explain this without using any analogies or metaphors." Analogies are pedagogically powerful, but they're also a way of avoiding the actual structure of an idea. Forcing a non-analogical explanation reveals whether you (and the AI) understand the mechanism, not just the metaphor.

No-jargon constraints. "Explain this as if neither of us knows the technical vocabulary." This is different from "explain it simply" — it forces the AI to construct an explanation from first principles rather than importing specialist language.

Perspective constraints. "Explain this from the perspective of someone who thinks this idea is completely wrong." This forces engagement with the strongest version of the opposing view — the steel-manning technique in prompt form.

Single-variable constraints. "Don't explain the whole system — just explain why [specific component X] works the way it does, treating everything else as a black box." Isolating variables is how experts actually diagnose complex systems, and this constraint pattern trains that habit of mind.

Assumption-surfacing constraints. "List the assumptions you're making to answer this question before you answer it." This one is powerful because it makes the AI's epistemic commitments visible, which is where you should be directing your critical attention.

The Perspective-Taking Prompt

One of the most versatile prompt patterns for learners is what I call the perspective-taking prompt. The structure is simple: you ask the AI to explain, evaluate, or respond to something through the lens of a specific perspective — and that perspective is the constraint that generates insight.

The most useful perspective frames for learning:

The skeptic. "Respond to this argument as a rigorous skeptic who has seen many similar claims fail. What would you challenge first?" This is particularly useful when you're evaluating something you're inclined to agree with — it's a tool for intentionally introducing friction into your thinking.

The expert in an adjacent field. "How would a statistician evaluate this claim made in an economics paper?" or "How would a historian respond to this political science argument?" Cross-domain perspectives surface assumptions that specialists inside a field can no longer see.

The novice. "Explain what a complete beginner would find confusing or counterintuitive about this concept." This is the teaching equivalent of handing your writing to someone else to proofread: fresh eyes find gaps that expertise has made invisible.

The historical practitioner. "How would someone working in this field in 1950 have understood this problem differently, and what did they get right that we've since forgotten?" This temporally displaced perspective is surprisingly useful for cutting through accumulated assumptions in any field.

The devil's advocate. "You are committed to arguing the opposite position of whatever I say next, with the strongest possible reasoning." Having the AI argue against you is uncomfortable, which is exactly why it works — discomfort is where learning happens.

These aren't just clever tricks. MIT's analysis of critical thinking and AI tools suggests that effective critical thinking requires the application of specific frameworks to novel situations — and perspective-taking prompts force exactly that kind of structured epistemic exercise.

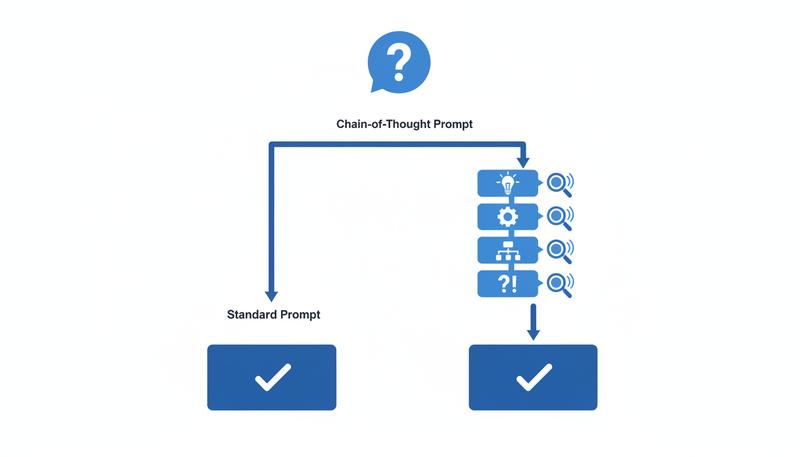

Chain-of-Thought Prompting: Making Reasoning Visible

Standard AI responses give you conclusions. Chain-of-thought prompting gives you the reasoning path — and the reasoning path is where the educational value lives.

The basic technique is simple: instead of asking the AI what the answer is, ask it to think through the problem step by step before arriving at an answer. In practice, this means adding phrases like:

- "Think through this step by step before giving me your conclusion."

- "Walk me through your reasoning, including any assumptions you're making at each step."

- "Before answering, explain what a careful thinker would need to consider to answer this well."

Why does this matter for learners? Because when you can see the AI's reasoning chain, you can evaluate it. You can identify where it makes a logical leap you're not sure you follow, where it imports an assumption you'd want to question, or where there might be an alternative path it didn't consider. The response becomes a thinking artifact you can examine, not just a conclusion you have to accept or reject wholesale.

There's a practical bonus: chain-of-thought responses often reveal when the AI's reasoning doesn't actually support its conclusion — a kind of error that's invisible when you're only shown the bottom line. This trains you to read AI output with the same scrutiny you'd apply to a student essay: does the conclusion actually follow from the argument?

You can extend this by asking the AI to make its uncertainty visible: "When you reach steps in your reasoning where you're less confident, flag them explicitly." This is metacognitive prompting — you're asking the AI to model the kind of epistemic humility that good thinkers maintain throughout a reasoning process, not just at the end.

The "What Am I Missing?" Prompt Family

This is perhaps the highest-leverage prompt pattern in this entire section, and it's criminally underused. The core insight is simple: often the most important thing an AI conversation can tell you is not the answer to what you asked, but what you didn't know to ask.

The "what am I missing?" family of prompts is specifically designed to surface your blind spots. Here are the main variants:

The direct version. "Based on what I've told you about my understanding of X, what important aspects haven't I asked about or mentioned?" This requires you to give your current understanding first, which is itself useful — the act of articulating what you know reveals what you don't.

The assumption audit. "What assumptions am I making that I might not realize I'm making?" Best used after you've presented an argument or plan. This prompt treats the AI as an outside perspective that can see your scaffolding.

The adjacent territory prompt. "What do experts in this field spend a lot of time thinking about that a well-read non-expert would probably underestimate or miss?" This surfaces the implicit knowledge that separates expert understanding from layperson understanding of a domain.

The counterintuitive question prompt. "What's a question about this topic that would surprise most people who think they understand it?" This helps you find the places where your mental map is simpler than the territory it describes.

The consensus-edge prompt. "Where is there genuine disagreement among experts on this topic, and what's at stake in those disagreements?" This combats the AI's tendency to present contested questions as if they have clear answers, and it orients you toward the live intellectual action in a field rather than its settled consensus.

The "what am I missing?" family works because it inverts the usual dynamic of AI interaction. Instead of asking for information the AI has that you want, you're asking the AI to help you find the edges of your own ignorance — which is precisely the metacognitive capacity that distinguishes good learners from passive information consumers.

Tip: Start any new learning session by telling the AI what you already know and asking what you're probably missing. You'll often get more educational value from that single exchange than from an hour of standard Q&A.

Iterative Refinement: Conversations, Not Queries

Most people treat AI conversations as a series of independent queries: ask a question, get an answer, ask another question. This is the least effective way to use AI for learning. What you want instead is iterative refinement — treating the conversation as a collaborative draft that you're building toward a better understanding together.

Iterative refinement means:

Following threads rather than pivoting. When an AI response opens up an interesting sub-question, stay with it. "You mentioned X — can we go deeper into that specifically before moving on?" This builds depth rather than breadth.

Pushing back on your satisfaction. When you feel like you understand something, that's precisely when to apply pressure. "I feel like I understand your explanation — but could you give me a case where the principle breaks down or fails to predict correctly?" Comfortable comprehension is often the first sign that you've learned the shape of an idea without its substance.

Building on previous exchanges explicitly. "Earlier you said X, and now you're saying Y — can you help me reconcile these?" AI has a tendency to be inconsistent across long conversations, and tracking those inconsistencies is both a way to improve the AI's output and a way to deepen your own understanding.

Introducing your own reasoning into the conversation. "My intuition is that Z is the case, and here's why — what's wrong with this reasoning?" This flips the typical dynamic: instead of asking the AI to think so you can absorb its thinking, you're thinking out loud and asking the AI to engage with your reasoning.

Escalating toward application. The endpoint of a learning conversation shouldn't be "I understand the concept." It should be "I can apply this concept to a novel situation I haven't seen before." Use the iterative structure to work toward application: "Now that I understand the principle, give me three cases I haven't seen and ask me to predict the outcome before you tell me if I'm right."

This approach turns AI from an encyclopedia you consult into something that supplies what research on effective tutoring consistently identifies as the key ingredient of human tutoring: adaptive, responsive engagement with your actual current understanding, not a generic presentation of material.

Memory and Context Management

Here's a practical problem that every serious AI learner runs into: conversations get long, and AI models have limited context windows. More subtly, even within a conversation, the AI's sense of what's been established can drift, producing responses that quietly contradict earlier agreements or ignore context you provided.

A few practices that help:

Front-load your context. At the start of any learning session with a specific goal, spend two to three sentences establishing who you are (for this conversation), what you're trying to learn, and what you already know. This isn't redundant — it creates a context frame the AI will (imperfectly) hold onto throughout the session.

Summarize periodically. After a substantial exchange, ask the AI to summarize what's been established: "Given our conversation so far, what are the key points we've agreed on or clarified?" This serves two purposes: it tests whether the AI is maintaining coherent context, and it gives you a comprehension check of your own.

Use headings and structure in your prompts. Particularly for complex topics, structuring your prompt with explicit labels ("My current understanding: ... | What I'm confused about: ... | What I want to explore: ...") helps the AI parse your intent and helps you organize your own thinking.

Create external memory. For extended learning projects, keep a running document of insights, questions, and disagreements that emerge from your AI conversations. This is your cognitive scaffolding across sessions. At the start of a new session, paste a summary of what you've established so far — it dramatically improves continuity.

Name your constraints. If you've established a particular framing ("for this conversation, you're a skeptical statistician"), explicitly invoke it when you change topics: "Still speaking as the skeptical statistician, what do you think about..."

None of these is a magic solution: limited context is a genuine technical constraint of current AI models, not just a prompt design problem. But working around that constraint consciously is better than being unknowingly affected by it.
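The external-memory and labeled-structure habits described above combine naturally. Below is a minimal sketch, assuming you keep your running notes in Python rather than a plain document; `LearningLog` and its fields are hypothetical names, and the labels reuse the "My current understanding / What I'm confused about / What I want to explore" structure suggested earlier. The output of `preamble()` is the summary you would paste at the start of a new session.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class LearningLog:
    """Hypothetical running notes for one learning project, reused across sessions."""

    topic: str
    understood: List[str] = field(default_factory=list)
    confused: List[str] = field(default_factory=list)
    to_explore: List[str] = field(default_factory=list)

    def preamble(self) -> str:
        """Render the notes as labeled sections to paste at the start of a new session."""

        def fmt(items: List[str]) -> str:
            # Join entries with semicolons and mark empty sections explicitly.
            return "; ".join(items) if items else "(nothing yet)"

        return (
            f"Topic: {self.topic}\n"
            f"My current understanding: {fmt(self.understood)}\n"
            f"What I'm confused about: {fmt(self.confused)}\n"
            f"What I want to explore: {fmt(self.to_explore)}"
        )


log = LearningLog(topic="thermodynamics")
log.understood.append("entropy relates to disorder")
log.confused.append("why entropy doesn't drift back toward order")
print(log.preamble())
```

Marking empty sections as "(nothing yet)" rather than dropping them is a small design choice: it keeps the same four labels in every session, so you notice when a section has stayed empty for too long.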

Building Your Personal Prompt Library

Here's something concrete you can implement today. Over time, you'll develop a small set of high-leverage prompts that you use repeatedly across different learning contexts. Keeping these in an accessible document — your prompt library — means you don't have to reinvent effective prompts from scratch every session.

Your prompt library for learning purposes should include at minimum:

Your "new topic" onboarding prompt. Something like: "I'm beginning to learn about [X]. My background is [Y]. I want to understand [specific goal]. Start by asking me three questions that will help you understand what I already know and where I'm most likely to have misconceptions."

Your "go deeper" prompt. "The explanation you gave makes sense on the surface, but I want to understand the mechanism, not just the pattern. Explain [specific aspect] in terms of underlying causes, not just observed effects."

Your "challenge me" prompt. "I think I understand [concept]. Ask me three progressively harder questions that would reveal whether I actually understand it or just think I do."

Your "find my blind spots" prompt. "Based on what I've said, what important questions haven't I asked? What would an expert consider that I seem to be overlooking?"

Your "skeptic review" prompt. "Here's my current thinking about [topic/argument/plan]. Take the position of a rigorous skeptic and identify the three weakest points in my reasoning."

Your "explain the disagreement" prompt. "What do the people who disagree with [mainstream view X] actually believe, and what's the strongest version of their argument? Don't just summarize the fringe — steelman the opposition."

These aren't scripts — they're starting templates. Personalize them to your learning style and subject matter. The act of building and refining your own prompt library is itself a metacognitive practice: it requires you to think about how you think, and what kinds of intellectual engagement actually move you forward.
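If you prefer to keep your library somewhere you can fill in quickly, here is a minimal sketch using Python's built-in `str.format` placeholders. The dictionary `PROMPT_LIBRARY`, the template keys, and the helper `fill` are hypothetical; the wording is adapted from three of the templates above, with `{placeholders}` standing in for the bracketed slots.

```python
# Hypothetical prompt library: wording adapted from the templates above,
# with {placeholders} filled in via str.format at the start of a session.
PROMPT_LIBRARY = {
    "new_topic": (
        "I'm beginning to learn about {topic}. My background is {background}. "
        "I want to understand {goal}. Start by asking me three questions that will "
        "help you understand what I already know and where I'm most likely to have "
        "misconceptions."
    ),
    "challenge_me": (
        "I think I understand {concept}. Ask me three progressively harder questions "
        "that would reveal whether I actually understand it or just think I do."
    ),
    "skeptic_review": (
        "Here's my current thinking about {topic}: {thinking} "
        "Take the position of a rigorous skeptic and identify the three weakest "
        "points in my reasoning."
    ),
}


def fill(name: str, **values: str) -> str:
    """Fill a named template; raises KeyError if the name or any placeholder is missing."""
    return PROMPT_LIBRARY[name].format(**values)


print(fill("challenge_me", concept="the sunk cost fallacy"))
```

Letting `str.format` raise `KeyError` on an unfilled placeholder is deliberate here: it stops you from sending a prompt that still says `{goal}` because you skipped stating what you actually want to learn.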

Prompts That Protect Metacognition

We've come back again and again throughout this course to the central risk of AI-assisted learning: the feeling of understanding replacing actual understanding. Passive consumption dressed as active engagement. The challenge isn't knowing this intellectually — it's building prompts that structurally prevent it.

Here are prompt patterns specifically designed to keep your metacognition active:

The pre-commitment prompt. Before asking a question, write down your best current guess at the answer, then include it in your prompt: "My current best guess is X because Y — now help me figure out whether this is right and what I'm missing." This forces you to activate your prior knowledge before receiving new information, which is how retrieval practice works.

The confusion-first prompt. "I'm confused about X, specifically [be precise]. Don't explain X from scratch — explain just the part I've identified as confusing, and check whether my confusion makes sense or whether I've misunderstood something upstream."

The summary-before prompt. At the end of a learning exchange, before the AI summarizes, you summarize: "Let me tell you what I think we've established, and you tell me if I've captured it accurately or missed anything important." This is retrieval practice built into the conversation structure.

The "teach back" prompt. "I'm going to explain this concept back to you as if you don't know it. Listen for gaps, errors, or oversimplifications in my explanation and correct them." Then explain it. This is the protégé effect (which we covered in detail in the previous section) applied in real time.

The uncertainty-surfacing prompt. "On a scale of confidence, how certain are you about each of the main claims you just made? Flag anything where experts actually disagree or where you might be wrong."

These prompts do something important: they make the AI's role clearly auxiliary to your own thinking. You're not asking the AI to understand something so you can observe the understanding. You're using the AI as a mirror, a pressure-tester, and a sparring partner while you do the actual cognitive work.

That distinction — AI as instrument of your thinking, not replacement for it — is the entire thesis of this course made operational. And it turns out prompt engineering, done right, is one of the most powerful ways to maintain it in practice.

The goal isn't to become a "prompt engineering expert" in the sense that phrase usually implies: someone who knows clever tricks for extracting impressive outputs. The goal is to become the kind of learner who knows how to structure a productive thinking conversation — with an AI, yes, but also with a mentor, a textbook, a colleague, or your own notes. The discipline of writing a good learning prompt is the discipline of knowing what you're trying to think through and how you want to engage with it.

That's just good thinking. The AI is just the sparring partner.
